Brief responses to the critiques:

Results don’t discuss encoding/representation of abstractions

Totally agree with this one, it's the main thing I've worked on over the past month and will probably be the main thing in the near future. I'd describe the previous results (i.e. ignoring encoding/representation) as characterizing the relationship between the high-level and the high-level.

Definitions depend on choice of variables

The local/causal structure of our universe gives a very strong preferred way to "slice it up"; I expect that's plenty sufficient for convergence of abstractions. For instance, it doesn't make sense to use variables which "rotate together" the states of five different local patches of spacetime which are not close to each other. (For instance, those five different local patches will generally not be rotated together by default in an evolving agent's sensory feed.)

That does still leave degrees of freedom in how we represent all the local patches, but those are exactly the degrees of freedom which don't matter for natural abstraction. (Under the minimal latent formulation: we can represent each individual variable or set-of-variables-which-we're-making-independent-of-some-other-stuff in a different way without changing anything informationally. Under the redundancy formulation: assume our resampling process allows simultaneous resampling of small sets of variables, to avoid the thing where there's two variables very tightly coupled but they're otherwise independent of everything else. With that modification in place, same argument as the minimal latent formulation applies.)
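As a rough illustration of the resampling point above, here is a minimal toy sketch. It assumes a deliberately simplified model that is not from the original posts: every variable is an exact copy of one hidden bit, and `groups` lists which variables copy the same bit. The function names are hypothetical. The sketch checks which bits survive when blocks of `block_size` variables are resampled together, conditional on everything else.

```python
import itertools
import random


def resample_block(state, groups, block, rng):
    """Resample the variables in `block` conditional on all other variables.

    Returns the new state and the indices of groups whose value was redrawn.
    """
    block = set(block)
    new_state = list(state)
    redrawn = []
    for g, members in enumerate(groups):
        outside = [i for i in members if i not in block]
        if outside:
            # A copy sits outside the block, so the conditional distribution
            # pins every resampled copy to that same value.
            value = state[outside[0]]
        else:
            # Every copy is resampled at once: nothing pins the value.
            value = rng.randint(0, 1)
            redrawn.append(g)
        for i in members:
            if i in block:
                new_state[i] = value
    return new_state, redrawn


def conserved_groups(groups, block_size, sweeps=2, seed=0):
    """Return, per group, whether its initial bit survived all resampling.

    Assumes `groups` partitions the variable indices 0..n-1 (toy assumption).
    """
    n_vars = sum(len(members) for members in groups)
    rng = random.Random(seed)
    state = [0] * n_vars
    for members in groups:
        bit = rng.randint(0, 1)
        for i in members:
            state[i] = bit
    conserved = [True] * len(groups)
    for _ in range(sweeps):
        # Deterministically visit every block of the given size.
        for block in itertools.combinations(range(n_vars), block_size):
            state, redrawn = resample_block(state, groups, block, rng)
            for g in redrawn:
                conserved[g] = False
    return conserved


# One bit copied into five variables, plus a tightly coupled pair.
groups = [[0, 1, 2, 3, 4], [5, 6]]
print(conserved_groups(groups, block_size=1))
print(conserved_groups(groups, block_size=2))
```

In this toy model, resampling one variable at a time conserves the coupled pair's bit even though it is barely redundant, while resampling two variables at a time wipes it out and leaves the five-fold redundant bit intact. That is the motivation for allowing simultaneous resampling of small sets.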

Theorems focus on infinite limits, but abstractions happen in finite regimes

Totally agree with this one too, and it has also been a major focus for me over the past couple months.

I'd also offer this as one defense of my relatively low level of formality to date: finite approximations are clearly the right way to go, and I didn't yet know the best way to handle finite approximations. I gave proof sketches at roughly the level of precision which I expected to generalize to the eventual "right" formalizations. (The more general principle here is to only add formality when it's the right formality, and not to prematurely add ad-hoc formulations just for the sake of making things more formal. If we don't yet know the full right formality, then we should sketch at the level we think we do know.)

Missing theoretical support for several key claims

Basically agree with this. In particular, I think the quoted block is indeed a place where I was a bit overexcited at the time and made too strong a claim. More generally, for a while I was thinking of "deterministic constraints" as basically implying "low-dimensional" in practice, based on intuitions from physics. But in hindsight, that's at least not externally-legibly true, and arguably not true in general at all.

Figuring out whether the Universality Hypothesis is true

... What we’re less convinced of is that the current theoretical approach is a good way to tackle this question. One worrying sign is that almost two years after the project announcement (and over three years after work on natural abstractions began), there still haven’t been major empirical tests, even though that was the original motivation for developing all of the theory. ... Of course sometimes experiments do require upfront theory work. But in this case, we think that e.g. empirical interpretability work is already making progress on the Universality Hypothesis, whereas we’re unsure whether the natural abstractions agenda is much closer to major empirical tests than it was two years ago.

See the section on "Low level of precision...". Also, You Are Not Measuring What You Think You Are Measuring is a very relevant principle here - I have lots of (not necessarily externally-legible) bits of evidence about a rough version of natural abstraction, but the details I'm still figuring out are (not coincidentally) exactly the details where it's hard to tell whether we're measuring the right thing.

Abstractions as a bottleneck for agent foundations: The high-level story for why abstractions seem important for formalizing e.g. values seems very plausible to us. It’s less clear to us whether they are necessary (or at least a good first step)

Yeah, I don't think this should be externally-legibly clear right now. I think people need to spend a lot of time trying and failing to tackle agent foundations problems themselves, repeatedly running into the need for a proper model of abstraction, in order for this to be clear.

Accelerating alignment research: The promise behind this motivation is that having a theory of natural abstractions will make it much easier to find robust formalizations of abstractions such as “agency”, “optimizer”, or “modularity”. ... To us, such an outcome seems unlikely, though it may still be worth pursuing

I probably put higher probability on success here than you do, but I don't think it should be legibly clear.

Interpretability: ... Figuring out the real-world meaning of internal network activations is one of the core themes of safety-motivated interpretability work. And reverse-engineering a network into “pseudocode” is not just some separate problem, it’s deeply intertwined. We typically understand the inputs of a network, so if we can figure out how the network transforms these inputs, that can let us test hypotheses for what the meaning of internal activations is.

An intuitive understanding of inputs plus a circuit is not, in general, sufficient to interpret the internal things computed by the circuit. Easy counterargument: neural nets are circuits, so if those two pieces were enough, we'd already be done; there would be no interpretability problem in the first place.

Existing work has managed to go from pseudocode/circuits to interpretation of inputs mainly by looking at cases where the circuits in question are very small and simple - e.g. edge detectors in Olah's early work, or the sinusoidal elements in Neel's work on modular addition. But this falls apart quickly as the circuits get bigger - e.g. later layers in vision nets, once we get past early things like edge and texture detectors.

Low level of precision and formalization

I mentioned earlier the heuristic of "only add formality when it's the right formality; don't prematurely add ad-hoc formulations just for the sake of making things more formal".

More generally, if you're used to academia, then bear in mind the incentives of academia push towards making one's work defensible to a much greater degree than is probably optimal for truth-seeking. Formalization is one part of this: in academia, the incentive is usually to add ad-hoc formalization in order to get a full formal proof rather than a sketch, even if the ad-hoc formalization added does not match reality well. On the experimental side, the incentive is usually on bulletproof results, rather than gaining lots of information. (... and that's the better case. In the worse case, the incentive is on jumping through certain hoops which are nominally about bulletproofing, but don't even do that job very well, like e.g. statistical significance.) And yes, defensibility does have value even for truth-seeking, but there are tradeoffs and I advise against anchoring too much on academia.

With that in mind: both my current work and most of my work to date is aimed more at truth-seeking than defensibility. I don't think I currently have all the right pieces, and I'm trying to get the right pieces quickly. For that purpose, it's important to make the stuff I think I understand as legible as possible so that others can help. I try to accurately convey my models and epistemic state. But it's not important to e.g. make it easy for others to point out mistakes in places where I didn't think the formality was right anyway. If and when I have all the pieces, then I can worry about defensible proof.

That said, I agree with at least some parts of the critique. Being both precise and readable at the same time is hard, man.

Few experiments

As we briefly discussed earlier, we think it’s worrying that there haven’t been major experiments on the Natural Abstraction Hypothesis, given that John thinks of it as mostly an empirical claim. We would be excited to see more discussion on experiments that can be done right now to test (parts of) the natural abstractions agenda! We elaborate on a preliminary idea in the appendix (though it has a number of issues).

I do love your experiment ideas! The experiments I ran last summer had a similar flavor - relatively-simple checks on MNIST nets - though they were focused on the "information at a distance" lens rather than the redundancy or minimal latent lenses.

Anyway, similar answer here as the previous section: at this point I'm mainly trying to get to the right answers quickly, not trying to provide some impressive defensible proof. I run experiments insofar as they give me bits about what the right answers are.

Thanks for the responses! I think we qualitatively agree on a lot, just put emphasis on different things or land in different places on various axes. Responses to some of your points below:

The local/causal structure of our universe gives a very strong preferred way to "slice it up"; I expect that's plenty sufficient for convergence of abstractions. [...]

Let me try to put the argument into my own words: because of locality, any "reasonable" variable transformation can in some sense be split into "local transformations", each of which involves only a few variables. These local transformations aren't a problem because if we, say, resample n variables at a time, then transforming fewer than n variables doesn't affect redundant information.

I'm tentatively skeptical that we can split transformations up into these local components. E.g. to me it seems that describing some large number of particles by their center of mass and the distance vectors from the center of mass is a very reasonable description. But it sounds like you have a notion of "reasonable" in mind that's more specific than the set of all descriptions physicists might want to use.

I also don't see yet how exactly to make this work given local transformations---e.g. I think my version above doesn't quite work because if you're resampling a finite number n of variables at a time, then I do think transforms involving fewer than n variables can sometimes affect redundant information. I know you've talked before about resampling any finite number of variables in the context of a system with infinitely many variables, but I think we'll want a theory that can also handle finite systems. Another reason this seems tricky: if you compose lots of local transformations, for overlapping local neighborhoods, you get a transformation involving lots of variables. I don't currently see how to avoid that.

I'd also offer this as one defense of my relatively low level of formality to date: finite approximations are clearly the right way to go, and I didn't yet know the best way to handle finite approximations. I gave proof sketches at roughly the level of precision which I expected to generalize to the eventual "right" formalizations. (The more general principle here is to only add formality when it's the right formality, and not to prematurely add ad-hoc formulations just for the sake of making things more formal. If we don't yet know the full right formality, then we should sketch at the level we think we do know.)

Oh, I did not realize from your posts that this is how you were thinking about the results. I'm very sympathetic to the point that formalizing things that are ultimately the wrong setting doesn't help much (e.g. in our appendix, we recommend people focus on the conceptual open problems like finite regimes or encodings, rather than more formalization). We may disagree about how much progress the results to date represent regarding finite approximations. I'd say they contain conceptual ideas that may be important in a finite setting, but I also expect most of the work will lie in turning those ideas into non-trivial statements about finite settings. In contrast, most of your writing suggests to me that a large part of the theoretical work has been done (not sure to what extent this is a disagreement about the state of the theory or about communication).

Existing work has managed to go from pseudocode/circuits to interpretation of inputs mainly by looking at cases where the circuits in question are very small and simple - e.g. edge detectors in Olah's early work, or the sinusoidal elements in Neel's work on modular addition. But this falls apart quickly as the circuits get bigger - e.g. later layers in vision nets, once we get past early things like edge and texture detectors.

I totally agree with this FWIW, though we might disagree on some aspects of how to scale this to more realistic cases. I'm also very unsure whether I get how you concretely want to use a theory of abstractions for interpretability. My best story is something like: look for good abstractions in the model and then for each one, figure out what abstraction this is by looking at training examples that trigger the abstraction. If NAH is true, you can correctly figure out which abstraction you're dealing with from just a few examples. But the important bit is that you start with a part of the model that's actually a natural abstraction, which is why this approach doesn't work if you just look at examples that make a neuron fire, or similar ad-hoc ideas.

More generally, if you're used to academia, then bear in mind the incentives of academia push towards making one's work defensible to a much greater degree than is probably optimal for truth-seeking.

I agree with this. I've done stuff in some of my past papers that was just for defensibility and didn't make sense from a truth-seeking perspective. I absolutely think many people in academia would profit from updating in the direction you describe, if their goal is truth-seeking (which it should be if they want to do helpful alignment research!)

On the other hand, I'd guess the optimal amount of precision (for truth-seeking) is higher in my view than it is in yours. One crux might be that you seem to have a tighter association between precision and tackling the wrong questions than I do. I agree that obsessing too much about defensibility and precision will lead you to tackle the wrong questions, but I think this is feasible to avoid. (Though as I said, I think many people, especially in academia, don't successfully avoid this problem! Maybe the best quick fix for them would be to worry less about precision, but I'm not sure how much that would help.) And I think there's also an important failure mode where people constantly think about important problems but never get any concrete results that can actually be used for anything.

It also seems likely that different levels of precision are genuinely right for different people (e.g. I'm unsurprisingly much more confident about what the right level of precision is for me than about what it is for you). To be blunt, I would still guess the style of arguments and definitions in your posts only work well for very few people in the long run, but of course I'm aware you have lots of details in your head that aren't in your posts, and I'm also very much in favor of people just listening to their own research taste.

both my current work and most of my work to date is aimed more at truth-seeking than defensibility. I don't think I currently have all the right pieces, and I'm trying to get the right pieces quickly.

Yeah, to be clear I think this is the right call, I just think that more precision would be better for quickly arriving at useful true results (with the caveats above about different styles being good for different people, and the danger of overshooting).

Being both precise and readable at the same time is hard, man.

Yeah, definitely. And I think different trade-offs between precision and readability are genuinely best for different readers, which doesn't make it easier. (I think this is a good argument for separate distiller roles: if researchers have different styles, and can write best to readers with a similar style of thinking, then plausibly any piece of research should have a distillation written by someone with a different style, even if the original was already well written for a certain audience. It's probably not that extreme, I think often it's at least possible to find a good trade-off that works for most people, though hard).

We may disagree about how much progress the results to date represent regarding finite approximations. I'd say they contain conceptual ideas that may be important in a finite setting, but I also expect most of the work will lie in turning those ideas into non-trivial statements about finite settings. In contrast, most of your writing suggests to me that a large part of the theoretical work has been done (not sure to what extent this is a disagreement about the state of the theory or about communication).

Perhaps your instincts here are better than mine! Going to the finite case has indeed turned out to be more difficult than I expected at the time of writing most of the posts you reviewed.

(Self-promotion warning.) Alexander Gietelink Oldenziel pointed me toward this post after hearing me describe my physics research and noticing some potential similarities, especially with the Redundant Information Hypothesis. If you'll forgive me, I'd like to point to a few ideas in my field (many not associated with me!) that might be useful. Sorry in advance if these connections end up being too tenuous.

In short, I work on mathematically formalizing the intuitive idea of wavefunction branches, and a big part of my approach is based on finding variables that are special because they are redundantly recorded in many spatially disjoint systems. The redundancy aspects are inspired by some of the work done by Wojciech Zurek (my advisor) and collaborators on quantum Darwinism. (Don't read too much into the name; it's all about redundancy, not mutation.) Although I personally have concentrated on using redundancy to identify quantum variables that behave classically without necessarily being of interest to cognitive systems, the importance of redundancy for intuitively establishing "objectivity" among intelligent beings is a big motivation for Zurek.

Building on work by Brandao et al., Xiao-Liang Qi & Dan Ranard made use of the idea of "quantum Markov blankets" in formalizing certain aspects of quantum Darwinism. I think these are playing a very similar role to the (classical) Markov blankets discussed above.

In the section "Definitions depend on choice of variables" of the current post, the authors argue that Wentworth's construction depends on a choice of variables, and that without a preferred choice it's not clear that the ideas are robust. So it's maybe worth noting that a similar issue arises in the definition of wavefunction branches. The approach several researchers (including me) have been taking is to ground the preferred variables in spatial locality, which is about as fundamental a constraint as you can get in physics. More specifically, the idea is that the wavefunction branch decomposition should be invariant under arbitrary local operations ("unitaries") on each patch of space, but not invariant under operations that mix up different spatial regions.

Another basic physics idea that might be relevant is hydrodynamic variables and the relevant transport phenomena. Indeed, Wentworth brings up several special cases (e.g., temperature, center-of-mass momentum, pressure), and he correctly notes that their important role can be traced back to their local conservation (in time, not just under re-sampling). However, while very-non-exhaustively browsing through his other posts on LW it seemed as if he didn't bring up what is often considered their most important practical feature: predictability. Basically, the idea is this: Out of the set of all possible variables one might use to describe a system, most of them cannot be used on their own to reliably predict forward time evolution because they depend on the many other variables in a non-Markovian way. But hydro variables have closed equations of motion, which can be deterministic or stochastic but at the least are Markovian. Furthermore, the rest of the variables in the system (i.e., all the chaotic microscopic degrees of freedom) are usually "as random as possible" -- and therefore unnecessary to simulate -- in the sense that it's infeasible to distinguish them from being in equilibrium (subject, of course, to the constraints implied by the values of the conserved quantities). This formalism is very broad, extending well beyond fluid dynamics despite the name "hydro".

Thanks for that overview and the references!

On hydrodynamic variables/predictability: I (like probably many others before me) rediscovered what sounds like a similar basic idea in a slightly different context, and my sense is that this is somewhat different from what John has in mind, though I'd guess there are connections. See here for some vague musings. When I talked to John about this, I think he said he's deliberately doing something different from the predictability-definition (though I might have misunderstood). He's definitely aware of similar ideas in a causality context, though it sounds like the physics version might contain additional ideas.

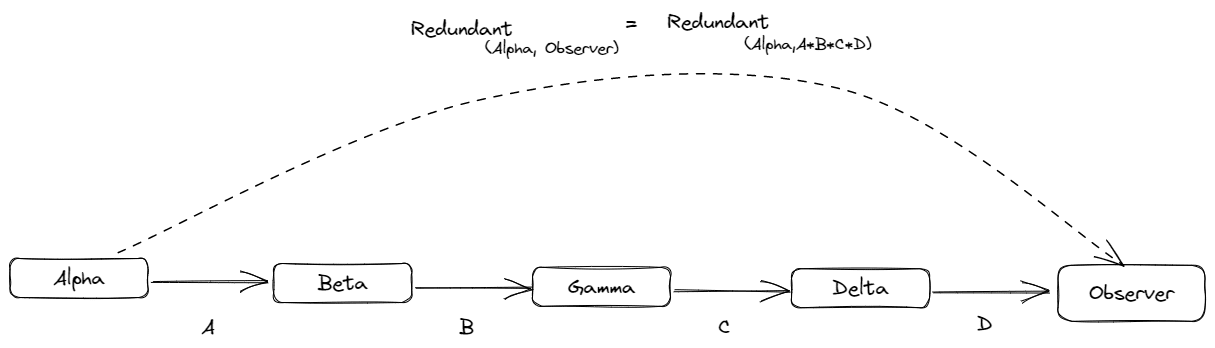

John has several lenses on natural abstractions:

The thing that felt closest to me to the Quantum Darwinism story that Jess was talking about is the 'redundant/latent' story, e.g. https://www.lesswrong.com/posts/N2JcFZ3LCCsnK2Fep/the-minimal-latents-approach-to-natural-abstractions and https://www.lesswrong.com/posts/dWQWzGCSFj6GTZHz7/natural-latents-the-math

Out of the set of all possible variables one might use to describe a system, most of them cannot be used on their own to reliably predict forward time evolution because they depend on the many other variables in a non-Markovian way. But hydro variables have closed equations of motion, which can be deterministic or stochastic but at the least are Markovian.

This idea sounds very similar to this—it definitely seems extendable beyond the context of physics:

We argue that they are both; more specifically, that the set of macrostates forms the unique maximal partition of phase space which 1) is consistent with our observations (a subjective fact about our ability to observe the system) and 2) obeys a Markov process (an objective fact about the system's dynamics).
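To make the "macrostates obey a Markov process" condition concrete, here is a minimal sketch for a finite Markov chain. It assumes the standard notion of strong lumpability as the formalization (an assumption, since neither the comment nor the quoted passage names it): a partition of microstates gives Markovian macrostates if every microstate in a block sends the same total probability into each block. The function name and the example matrix are hypothetical.

```python
def is_lumpable(P, partition, tol=1e-9):
    """Check strong lumpability of transition matrix P under `partition`.

    P[i][j] is the probability of moving from microstate i to microstate j.
    `partition` is a list of blocks, each a list of microstate indices.
    """
    for block in partition:
        for target in partition:
            # Total probability of jumping into `target` from each state in `block`.
            masses = [sum(P[i][j] for j in target) for i in block]
            if max(masses) - min(masses) > tol:
                return False
    return True


# Illustrative 4-state chain (made-up numbers).
P = [
    [0.5, 0.2, 0.2, 0.1],
    [0.3, 0.4, 0.1, 0.2],
    [0.1, 0.1, 0.4, 0.4],
    [0.2, 0.0, 0.3, 0.5],
]

print(is_lumpable(P, [[0, 1], [2, 3]]))  # macrostates with closed dynamics
print(is_lumpable(P, [[0, 2], [1, 3]]))  # macrostate alone does not predict the next step
```

For the first partition the macrostate evolves by its own closed transition rule, which is the finite analogue of hydro variables having closed equations of motion. For the second, predicting the next macrostate requires knowing which microstate you are in.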

This post is a great review of the Natural Abstractions research agenda, covering both its strengths and weaknesses. It provides a useful breakdown of the key claims, the mathematical results and the applications to alignment. There's also reasonable criticism.

To the weaknesses mentioned in the overview, I would also add that the agenda needs more engagement with learning theory. Since the claim is that all minds learn the same abstractions, it seems necessary to look into the process of learning, and see what kind of abstractions can or cannot be learned (both in terms of sample complexity and in terms of computational complexity).

Some thoughts about natural abstractions inspired by this post:

I think this was a very good summary/distillation and a good critique of work on natural abstractions; I'm less sure it has been particularly useful or impactful.

I'm quite proud of our breakdown into key claims; I think it's much clearer than any previous writing (and in particular makes it easier to notice which sub-claims are obviously true, which are daring, which are or aren't supported by theorems, ...). It also seems that John was mostly on board with it.

I still stand by our critiques. I think the gaps we point out are important and might not be obvious to readers at first. That said, I regret somewhat that we didn't focus more on communicating an overall feeling about work on natural abstractions, and our core disagreements. I had some brief back-and-forth with John in the comments, where it seemed like we didn't even disagree that much, but at the same time, I still think John's writing about the agenda was wildly more optimistic than my views, and I don't think we made that crisp enough.

My impression is that natural abstractions are discussed much less than they were when we wrote the post (and this is the main reason why I think the usefulness of our post has been limited). An important part of the reason I wanted to write this was that many junior AI safety researchers or people getting into AI safety research seemed excited about John's research on natural abstractions, but I felt that some of them had a rosy picture of how much progress there'd been/how promising the direction was. So writing a summary of the current status combined with a critique made a lot of sense, to both let others form an accurate picture of the agenda's progress while also making it easier for them to get started if they wanted to work on it. Since there's (I think) less attention on natural abstractions now, it's unsurprising that those goals are less important.

As for why there's been less focus on natural abstractions, my guess is a combination of at least:

It's also possible that many became more pessimistic about the agenda without public fanfare, or maybe my impression of relative popularity now vs then is just off.

I still think very high effort distillations and critiques can be a very good use of time (and writing this one still seems reasonable ex ante, though I'd focus more on nailing a few key points and less on being super comprehensive).

How does the redundancy definition of abstractions account for numbers, e.g., the number three? It doesn’t seem like “threeness” is redundantly encoded in, for example, the three objects on the floor of my room (rug, sweater, bottle of water) as rotation is in the gear example, since you wouldn’t be able to uncover information about “three” from any one object in particular.

I could imagine some definition based on redundancy capturing “threeness” by looking at a bunch of sets containing three things. But I think the reason the abstraction “three” feels a little strange on this account is that it is both highly natural (math!) but also can be highly “arbitrary,” e.g., “threeness” is wherever a mind can count three distinct objects (and those objects can be maximally unrelated!).

Perhaps counting the three objects on the floor of my room is a non-natural use case of the abstraction “three,” but if so, why? And where is the natural abstraction “three” in the world?

I find myself going back to this post again and again for explaining the Natural Abstraction Hypothesis. When this came out I was very happy as I finally had something I could share on John's work that made people understand it within one post.

I've only skimmed this, but my main confusions with the whole thing are still on a fairly fundamental level.

You spend some time saying what abstractions are, but when I see the hypothesis written down, most of my confusion is on what "cognitive systems" are and what one means by "most". Afaict it really is a kind of empirical question to do with "most cognitive systems". Do we have in mind something like 'animal brains and artificial neural networks'? If so then surely let's just say that and make the whole thing more concrete; so I suspect not... but in that case... what does it include? And how will we know if 'most' of them have some property? (At the moment, whenever I find evidence that two systems don't share an abstraction that they 'ought to' I can go "well the hypothesis is only most"...)

(My attempt at an explanation:)

In short, we care about the class of observers/agents that get redundant information in a similar way.

I think we can look at the specific dynamics of the systems described here to actually get a better perspective on whether the NAH should hold or not:

I had an insight about the implications of NAH which I believe is useful to communicate if true and useful to dispel if false; I don't think it has been explicitly mentioned before.

One of Eliezer's examples is "The AI must be able to make a cellularly identical but not molecularly identical duplicate of a strawberry." One of the difficulties is explaining to the AI what that means. This is a problem with communicating across different ontologies--the AI sees the world completely differently than we do. If NAH in a strong sense is true, then this problem goes away on its own as capabilities increase; that is, AGI will understand us when we communicate something that has a coherent natural interpretation, even without extra effort on our part to translate it to the AGI version of machine code.

Does that seem right?

Basically this. It has other directions, but I do think the NAH is trying to investigate how hard translating between ontologies is as capabilities scale up.

Tangentially related: recent discussion raising a seemingly surprising point about LLMs being lossless compression finders https://www.youtube.com/watch?v=dO4TPJkeaaU

representation of a variable for variable

Hm, I don't understand what is supposed to be here.

Y would be a target variable that one wants to predict, e.g. in supervised learning.

In other words, one wants to learn a model that predicts Y from X. As an intermediate step, one creates a representation T of X. T does not need to keep *all* information about X, but it needs to keep enough information to be able to extract Y from it. The remaining information about X is minimized.

Does this clarify it?
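The setup described in this reply looks like what is usually called the information bottleneck objective. As a hedged sketch in that notation (the trade-off parameter $\beta$ and the Markov-chain assumption are not stated in the thread and are added here for illustration):

```latex
% Assumed setting: T is computed from X alone, so Y - X - T forms a Markov chain.
% Choose the encoder p(t | x) to compress X while keeping information about Y:
\min_{p(t \mid x)} \; I(X;T) \;-\; \beta \, I(T;Y), \qquad \beta > 0 .
```

Here $I(X;T)$ is the information $T$ retains about $X$ (the part being minimized), and $I(T;Y)$ is the information $T$ retains about the target $Y$; larger $\beta$ puts more weight on predicting $Y$.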

The LessWrong Review runs every year to select the posts that have most stood the test of time. This post is not yet eligible for review, but will be at the end of 2024. The top fifty or so posts are featured prominently on the site throughout the year.

Hopefully, the review is better than karma at judging enduring value. If we have accurate prediction markets on the review results, maybe we can have better incentives on LessWrong today. Will this post make the top fifty?

Relevant academic work that will hopefully be of interest to someone with respect to the NAH. From Fields et al. (2023):

We show in this paper that control flow in such systems can always be formally described as a tensor network, a factorization of some overall tensor (i.e., high-dimensional matrix) operator into multiple component tensor operators that are pairwise contracted on shared degrees of freedom [48]. In particular, we show that the factorization conditions that allow the construction of a TN are exactly the same as those that allow the identification of distinct, mutually conditionally independent (in quantum terms, decoherent), sets of data on the MB, and hence allow the identification of distinct “objects” or “features” in the environment. This equivalence allows the topological structures of TNs – many of which have been well-characterized in applications of the TN formalism to other domains [48] – to be employed as a classification of control structures in active inference systems; including cells, organisms, and multi-organism communities. It allows, in particular, a principled approach to the question of whether, and to what extent, a cognitive system can impose a decompositional or mereological (i.e., part-whole) structure on its environment. Such structures naturally invoke a notion of locality, and hence of geometry. The geometry of spacetime itself has been described as a particular TN – a multiscale entanglement renormalization ansatz (MERA) [49, 50, 51] – suggesting a deep link between control flow in systems capable of observing spacetime (i.e., capable of implementing internal representations of spacetime) and the deep structure of spacetime as a physical construct.

TL;DR: We distill John Wentworth’s Natural Abstractions agenda by summarizing its key claims: the Natural Abstraction Hypothesis—many cognitive systems learn to use similar abstractions—and the Redundant Information Hypothesis—a particular mathematical description of natural abstractions. We also formalize proofs for several of its theoretical results. Finally, we critique the agenda’s progress to date, alignment relevance, and current research methodology.

Author Contributions: Erik wrote a majority of the post and developed the breakdown into key claims. Leon formally proved the gKPD theorem and wrote most of the mathematical formalization section and appendix. Lawrence formally proved the Telephone theorem and wrote most of the related work section. All of us were involved in conceptual discussions and various small tasks.

Epistemic Status: We’re not John Wentworth, though we did confirm our understanding with him in person and shared a draft of this post with him beforehand.

Appendices: We have an additional appendix post and technical pdf containing further details and mathematical formalizations. We refer to them throughout the post at relevant places.

This post is long, and for many readers we recommend using the table of contents to skip to only the parts they are most interested in (e.g. the Key high-level claims to get a better sense for what the Natural Abstraction Hypothesis says, or our Discussion for readers already very familiar with natural abstractions who want to see our views). Our Conclusion is also a decent 2-min summary of the entire post.

Introduction

The Natural Abstraction Hypothesis (NAH) says that our universe abstracts well, in the sense that small high-level summaries of low-level systems exist, and that furthermore, these summaries are “natural”, in the sense that many different cognitive systems learn to use them. There are also additional claims about how these natural abstractions should be formalized. We thus split up the Natural Abstraction Hypothesis into two main components that are sometimes conflated:

Closely connected to the Natural Abstraction Hypothesis are several mathematical results as well as plans to apply natural abstractions to AI alignment. We’ll call all of these views together the natural abstractions agenda.

The natural abstractions agenda has been developed by John Wentworth over the last few years. The large number of posts on the subject, which often build on each other by each adding small pieces to the puzzle, can make it difficult to get a high-level overview of the key claims and results. Additionally, most of the mathematical definitions, theorems, and proofs are stated only informally, which makes it easy to mix up conjectures, proven claims, and conceptual intuitions if readers aren’t careful.

In this post, we

All except the last of these sections are our attempt to describe John’s views, not our own. That said, we attempt to explain things in the way that makes the most sense to us, which may differ somewhat from how John would phrase them. And while John met with us to clarify his thinking, it’s still possible we’re simply misunderstanding some of his views. The final section discusses our own views: we note some of our agreements but focus on the places where we disagree or see a need for additional work.

In the remainder of this introduction, we provide some high-level intuitions and motivation, and then survey existing distillations and critiques of the natural abstractions agenda. Readers who are already quite familiar with natural abstractions may wish to skip directly to the next section.

What do we mean by abstractions?

There are different perspectives on what abstractions are, but one feature is that they throw away a lot of unimportant information, turning a complex system into a smaller representation. This idea of throwing away irrelevant information is the key perspective for the natural abstractions agenda. Cognitive systems can use these abstractions to make accurate predictions about important aspects of the world.

Let’s look at an example (extended from one by John). A computer running a program can be modeled at many different levels of abstraction. On a very low level, lots of electrons are moving through the computer’s chips, but this representation is much too complicated to work with. Luckily, it turns out we can throw away almost all the information, and just track voltages at various points on the chips. In most cases, we can predict high-level phenomena with the voltages almost as well as with a model of all the electrons, even though we’re tracking vastly fewer variables. This continues to higher levels of abstraction: we can forget the exact voltages and just model the chip as an idealized logical circuit, and so on. Sometimes abstractions are leaky and this fails, but for good abstractions, those cases are rare.

Slightly more formally, an abstraction F is then a description or function that, when applied to a low-level system X, returns an abstract summary F(X).[1] F(X) can be thought of as throwing away lots of irrelevant information in X while keeping information that is important for making certain predictions.

Why expect abstractions to be natural?

Why should we expect abstractions to be natural, meaning that most cognitive systems will learn roughly the same abstractions?

First, note that not every abstraction works as well as the computer example we just gave. If we just throw away information in a random way, we will most likely end up with an abstraction that is missing some crucial pieces while also containing lots of useless details. In other words: some abstractions are much better than others.

Of course, which abstractions are useful does depend on which pieces of information are important, i.e. what we need to predict using our abstraction. But the second important idea is that most cognitive systems need to make predictions about similar things. Combined with the first point, that suggests they will use similar abstractions.

Why would different systems need to predict similar things in the environment? The reason is that distant pieces of the environment mostly don’t influence each other in ways that can feasibly be predicted. Imagine a mouse fleeing from a cat. The mouse doesn’t need to track how each of the cat’s hairs moves, since these small effects are quickly washed out by noise and never affect the mouse (in a way the mouse could predict). On the other hand, the higher-level abstractions “position and direction of movement of the cat” have more stable effects and thus are important. The same would be true for many goals other than surviving by fleeing the cat.

In addition to these conceptual arguments, there is some empirical evidence in favor of natural abstractions. For example, humans often learn a concept used by other humans based on just one or a few examples, suggesting natural abstractions at least among humans. More interestingly, there are many cases of ML models discovering these human abstractions too (e.g. trees in GANs as John has discussed, or human chess concepts in AlphaZero).

It seems clear that abstractions are natural in some sense—that most possible abstractions are just not useful and won’t be learned by any reasonable cognitive system. It’s less clear just how much we should expect abstractions used by different systems to overlap. We will discuss the claims of the natural abstractions agenda about this more precisely later on.

Why study natural abstractions for alignment?

Why should natural abstractions have anything to do with AI alignment? As motivation for the rest of this post, we'll briefly explain some intuitions for this. We defer a full discussion until a later section.

One conceptualization of the alignment problem is to ensure that AI systems are “trying” to do what we “want” them to do. This raises two large conceptual questions:

One interpretation of “something” is a particular set of physical configurations of the universe. However, this is considerably too complicated to fit into our brain, and we usually care more about high-level structures like our families or status. But what are these high-level structures fundamentally, and how can we mathematically talk about them? Intuitively, these structures throw away lots of detailed information about the universe, and thus, they are abstractions. So finding a theory of abstractions may be important to make progress on the conceptual question of what we and ML systems care about.

This is admittedly only a vague motivation, and we will later discuss more specific things we might do with a theory of natural abstractions. For example, a definition of abstractions might help find abstractions in neural networks, thus speeding up interpretability, and figuring out whether the universality hypothesis is true has strategic implications.

Existing writing on the natural abstractions agenda

The Natural Abstraction Hypothesis: Implications and Evidence is the largest existing distillation of the natural abstractions agenda. It follows John in dividing the Natural Abstraction Hypothesis into Abstractability, Human-Compatibility, and Convergence, whereas we will propose our own fine-grained subclaims. In addition to summarizing the natural abstractions agenda, the “Implications and Evidence” post mainly discusses possible sources of evidence about the Natural Abstraction Hypothesis. A much shorter summary of John’s agenda, also touching on natural abstractions, can be found in What Everyone in Technical Alignment is Doing and Why. Finally, the Hebbian Natural Abstractions sequence aims to motivate the Natural Abstraction Hypothesis from a computational neuroscience perspective.

There have also been a few discussions and critiques related to the natural abstractions agenda. Charlie Steiner has speculated that there may be too many very similar natural abstractions to make them useful for alignment, or that AI systems may not learn enough natural abstractions, essentially questioning claims 1b and 1c in the list we will introduce below. Steve Byrnes has written about why the natural abstractions agenda doesn’t focus on the most important alignment bottlenecks. These critiques are largely disjoint from the ones we will discuss later.

John himself has of course written by far the most about the natural abstractions agenda. We give a brief overview of his relevant writing in the appendix to make it easier for newcomers to dive in.

Related work

The universality hypothesis—that many systems will learn convergent abstractions/representations—is a key question in the field of neural network interpretability, and accordingly has been studied a substantial amount. Moreover, the intuitions behind the natural abstractions agenda and the redundant information hypothesis are commonly shared across different fields, of which we can highlight but a few.

Machine learning

Representation Learning

In machine learning, the subfield of representation learning studies how to extract representations of the data that have good downstream performance. Approaches to representation learning include next-frame/next-token prediction, autoencoding, infill/denoising, contrastive learning, predicting important variables of the environment, and many others. Representations aren’t always learned explicitly; for example, it’s a standard trick in reinforcement learning to add auxiliary prediction losses or do massive self-supervised pretraining. Note also that work in representation learning generally does not make claims about the universality of learned representations; instead, its focus is on learning representations that are useful for downstream tasks.

In particular, the field of disentangled representation learning shares many relevant tools and motivations with the redundant information hypothesis. In disentangled representation learning, we aim to learn representations that separate (that is, disentangle) the world into disjoint parts.

The redundant information hypothesis is also especially related to information bottleneck methods, which aim to learn a good representation T of a variable X for variable Y by solving optimization problems of the form:

$$\min_{p(t \mid x)} \; I(X;T) - \beta\, I(T;Y)$$

In particular, we think that the deterministic information bottleneck, which tries to find the random variable $T$ with minimum entropy, is quite similar in motivation to the idea of finding abstractions as redundant information.
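To make the objective above concrete, here is a minimal sketch that evaluates $I(X;T) - \beta I(T;Y)$ for a few hand-written encoders on a tiny discrete example. The toy distribution, the encoder names, and the choice $\beta = 2$ are assumptions made purely for illustration; this evaluates the objective for given encoders and is not an optimizer.

```python
import math

def mutual_information(joint):
    """Mutual information in bits of a joint distribution {(a, b): prob}."""
    p_a, p_b = {}, {}
    for (a, b), p in joint.items():
        p_a[a] = p_a.get(a, 0.0) + p
        p_b[b] = p_b.get(b, 0.0) + p
    return sum(p * math.log2(p / (p_a[a] * p_b[b]))
               for (a, b), p in joint.items() if p > 0)

def ib_objective(p_xy, encoder, beta):
    """I(X;T) - beta * I(T;Y) for a stochastic encoder with encoder[x][t] = p(t|x)."""
    p_xt, p_ty = {}, {}
    for (x, y), p in p_xy.items():
        for t, q in encoder[x].items():
            # T depends on Y only through X, so p(x, t) and p(t, y) follow from p(x, y) * p(t|x).
            p_xt[(x, t)] = p_xt.get((x, t), 0.0) + p * q
            p_ty[(t, y)] = p_ty.get((t, y), 0.0) + p * q
    return mutual_information(p_xt) - beta * mutual_information(p_ty)

# Toy data (hypothetical): X = (relevant bit, irrelevant bit), Y = relevant bit.
xs = [(r, n) for r in (0, 1) for n in (0, 1)]
p_xy = {(x, x[0]): 0.25 for x in xs}

encoders = {
    "copy_everything": {x: {x: 1.0} for x in xs},     # T = X
    "keep_relevant":   {x: {x[0]: 1.0} for x in xs},  # T = relevant bit only
    "keep_nothing":    {x: {0: 1.0} for x in xs},     # T is constant
}
beta = 2.0
scores = {name: ib_objective(p_xy, enc, beta) for name, enc in encoders.items()}
best = min(scores, key=scores.get)
```

In this toy setup the encoder that keeps only the relevant bit achieves the lowest objective: it pays one bit of $I(X;T)$ instead of two while retaining the full bit of information about $Y$.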

The universality hypothesis in machine learning

The question of whether different neural networks learn the same representations has been studied in machine learning under the names convergent learning and the universality hypothesis. Here, the evidence for the universality of representations is more mixed. On one hand, different convolutional neural networks often exhibit similar circuits, have highly correlated neurons, often learn similar representations, and learn to classify examples in a similar order. Models at different scales seem to consistently have heads that implement induction-like behavior. In particular, the fact that we can often align the internal representations of neural networks (e.g. see this paper) suggests that the neural networks are in some sense learning the same features of the world.

On the other hand, there are also many papers that argue against strong versions of feature universality. For example, even in the original convergent learning paper (Li et al 2014), the authors find that several features are idiosyncratic and are not shared across different networks. McCoy, Min, and Linzen 2019 find that different training runs of BERT generalize differently on downstream tasks. Recently, Chughtai, Chan, and Nanda 2023 investigated universality on group composition tasks, and found that different networks learn different representations in different orders, even with the same architecture and data order.

MCMC and Gibbs sampling

As John mentions in his redundant information post, the resampling-based definition of redundant information he introduces there is equivalent to running a Markov Chain Monte Carlo (MCMC) process. More specifically, this is essentially Gibbs sampling.[2] Redundant information corresponds to long mixing times (at least informally). But the motivation is of course different: in MCMC, we are usually interested in having short mixing times, because that allows efficient sampling from the stationary distribution. In the context of John's post, we're instead interested in mixing times because redundant information is a cause of long (or even infinite) mixing times.

Information Decompositions and Redundancy

John told us that he is now also interested in “relative” redundant information: for $n$ random variables $X_1, \dots, X_n$, what information do they redundantly share about a target variable $Y$?

One well-known approach for this is partial information decomposition. For the special case of two source variables $X_1, X_2$ and one target variable $Y$, the idea is to find a decomposition of the mutual information $I(X_1, X_2; Y)$ into:

The original paper also contains a concrete definition for redundant information, called $I_{\min}$. Later, researchers studied further desirable axioms that a redundancy measure should satisfy. However, it was proven that they can't all be satisfied simultaneously, which led to the development of many further attempts to define redundant information.

John told us that he does not consider partial information decomposition useful for his purposes, since it considers small systems (instead of systems in the limit of large $n$), for which he does not expect there to exist formalizations of redundancy that have the properties we want.

Neuroscience

Neuroscience can provide evidence about “how natural” abstractions are between different species of animals. Jan Kirchner has written a short overview of some of the existing work in this field:

(Cognitive) Psychology

The similarity of representations between different individuals or cultures is an important topic in psychology (e.g. psychological universals: mental properties shared by all humans instead of just specific cultures). Also potentially interesting is research on basic-level categories, i.e. concepts at a level of abstraction that appears to be especially natural to humans. Of course, similarities between human minds can only provide weak evidence in favor of universally convergent abstractions for all minds. Psychology might be more helpful for finding evidence against the universality of certain abstractions.

Philosophy

Philosophy discusses natural kinds—categories that correspond to real structure in the world, as opposed to being human conventions. Whether natural kinds exist (and if so, which kinds are and are not natural) is a matter of debate.

The universality hypothesis is similar to a naturalist position: natural kinds exist, and many of the categories we use are not arbitrary human conventions but rather follow the structure of nature. It's worth noting that in the universality hypothesis, human-made things can form natural abstractions too. For example, cars are probably a natural abstraction in the same way that trees are. Whether artifacts like cars can be natural kinds is disputed among philosophers.

Key high-level claims

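Broadly speaking, the natural abstractions agenda makes two main claims that are sometimes conflated:

1. The Universality Hypothesis: Most cognitive systems learn and use similar abstractions.
2. The Redundant Information Hypothesis: A mathematical description of natural abstractions.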

Throughout the rest of the piece, we use the term natural abstraction to refer to the general concept, and redundant information abstractions to refer to the mathematical construct.

In this section, we'll break those two high-level claims down into their subclaims. Many of those subclaims are about various sets of information and how they are related, so we summarize those in the figure below.

0. Abstractability: Our universe abstracts well

An important background motivation for this agenda is that our universe allows good abstractions at all. While almost all abstractions are leaky to some extent, there are many abstractions that work quite well even though they are vastly smaller than reality (recall the example of abstracting a circuit from electrons moving around to idealized logical computations).

Some version of this high-level claim is uncontentious, but it's an important part of the worldview underlying the natural abstractions agenda. Note that John has used the term “abstractability” to mean something a bit more specific, namely that good abstractions are connected to information relevant far away. We will discuss this as a separate claim later (Claim 2d).

1. The Universality Hypothesis: Most cognitive systems learn and use similar abstractions

1a. Most cognitive systems learn subsets of the same abstractions

Cognitive systems are much smaller than the universe, so they can’t track all the low-level information anyway—they will certainly have to abstract in some way.

A priori, you could imagine that basically “anything goes” when it comes to abstractions: every cognitive system throws away different parts of the available information. Humans abstract CPUs as logical circuits, but other systems use entirely different abstractions.

This claim says that’s not what happens: there is some relatively small set of information that a large class of cognitive systems learn a subset of. In other words, the vast majority of information is not represented in any of these cognitive systems.

As another example, consider a rotating gear. Different cognitive systems may track different subsets of its high-level properties, such as its angular position and velocity, its mass, or its temperature. But there is a lot of information that none of them track, such as the exact thermal motion of a specific atom inside the gear.

Precisely which cognitive systems are part of this large class is not yet clear. John's current hypothesis is "distributed systems produced by local selection pressures".

1b. The space of abstractions used by most cognitive systems is roughly discrete

The previous claim alone is not enough to give us crisp, “natural” abstractions. As a toy example, you could have a system that tracks a gear's rotational velocity $\omega$ and its temperature $T$, but you could also have one that only tracks the combined quantity $\omega^\alpha \cdot T^\beta$ for some real numbers $\alpha, \beta$. Varying $\alpha$ and $\beta$ smoothly would give a continuous family of abstractions, each keeping slightly different pieces of information.

According to this claim, there is instead a specific, approximately discrete set of abstractions that are actually used by most cognitive systems. These abstractions are what we call "natural abstractions". Rotational velocity and temperature are examples of natural abstractions of a gear, whereas arbitrary combinations of the two are not.

One caveat is that we realistically shouldn’t expect natural abstractions to be perfectly discrete. Sometimes, slightly different abstractions will be optimal for different cognitive systems, depending on their values and environment. So there will be some ambiguity around some natural abstractions. But the claim is that this ambiguity is very small, in particular small enough that different natural abstractions don’t just blend into each other. (See this comment thread for more discussion.)

1c. Most general cognitive systems can learn the same abstractions

The claims so far say that there is a reasonably small, discrete set of “natural abstractions”, which a large class of cognitive systems learn a subset of. This would still leave open the possibility that these subsets don’t overlap much, e.g. that an AGI might use natural abstractions we simply don’t understand.

Clearly, there are cases where an abstraction is learned by one system but not another one. For example, someone who has never seen snow won’t have formed the “snow” abstraction. However, if that person does see snow at some later point in their life, they’ll learn the concept from only very few examples. So they have the ability to learn this natural abstraction as soon as it becomes relevant in their environment.

This claim says that this ability to learn natural abstractions applies more broadly: general-purpose cognitive systems (like humans or AGI) can in principle learn all natural abstractions. If this is true, we should expect abstractions by future AGIs to not be “fundamentally alien” to us. One caveat is that larger cognitive systems may be able to track things in more detail than our cognition can deal with.

1d. Humans and ML models both use natural abstractions

This claim says that humans and ML models are part of the large class of cognitive systems that learn to use natural abstractions. Note that there is no claim to the converse: not all natural abstractions are used by humans. But given claim 1c, once we do encounter the thing described by some natural abstraction we currently don't use, we will pick up that natural abstraction too, unless it is too complex for our brain.

John calls the human part of this hypothesis Human-Compatibility. His writing doesn’t mention ML models as much, but the assumption that they will use natural abstractions is important for the connection of this agenda to AI alignment.

2. The Redundant Information Hypothesis: A mathematical description of natural abstractions

2a. Natural abstractions are functions of redundantly encoded information

Claim 1a says there is some small set of information that contains all natural abstractions, and claim 1b says that natural abstractions themselves are a discrete subset of this set of information. This claim describes the set of information from 1a: it is all the information that is encoded in a highly redundant way. Intuitively, this means you can get it from many different parts of a system.

An example (due to John) is the rotational velocity of a gear: you can estimate it based on any small patch of the gear by looking at the average velocity of all the atoms in that patch and the distance of the patch to the rotational axis. In contrast, the velocity of one single atom is not very redundantly encoded: you can't reconstruct it based on some other far-away patch of the gear.
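The gear example can be sketched numerically. The model below is a deliberately crude assumption for illustration: every atom in a patch has a tangential velocity equal to the rigid-rotation term (angular velocity times distance from the axis) plus independent Gaussian thermal noise. The function name, the noise level, and the patch sizes are all hypothetical.

```python
import random

def estimate_omega(patch_radius, n_atoms, omega, thermal_sigma, rng):
    """Estimate the gear's angular velocity from a single small patch.

    Averages the tangential velocities of the atoms in the patch and divides
    by the patch's distance from the rotational axis.
    """
    velocities = [omega * patch_radius + rng.gauss(0.0, thermal_sigma)
                  for _ in range(n_atoms)]
    return (sum(velocities) / n_atoms) / patch_radius

rng = random.Random(0)
OMEGA_TRUE = 3.0
# Three patches at different distances from the axis, each with its own thermal noise.
estimates = {r: estimate_omega(r, 10_000, OMEGA_TRUE, 5.0, rng) for r in (0.5, 1.0, 2.0)}
```

Each patch yields an estimate close to the same angular velocity, which is the sense in which that quantity is redundantly encoded. By contrast, the noise term of any single atom is drawn independently in this model, so no other patch carries information about it.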

This claim says that all natural abstractions are functions of redundant information, but it does not say that all functions of redundant information are natural abstractions. For example, since both angular velocity $\omega$ and temperature $T$ of a gear are redundantly encoded, mixed quantities such as $\omega^\alpha \cdot T^\beta$ are functions of redundant information, but this does not make them natural abstractions.

2b. Redundant information can be formalized via resampling or minimal latents

The concept of redundant information as “information that can be obtained from many different pieces of the system” is a good intuitive starting point, but John has also given more specific definitions. Later, we will formalize these definitions a bit more; for now we only mean to give a high-level overview. Note that John told us that his confidence in this claim specifically is lower than in most of the other claims.

Originally, John defined redundant information as information that is conserved under a certain resampling process (essentially Gibbs sampling): given initial samples of variables $X_1, \dots, X_n$, you repeatedly pick one of the variables at random and resample it conditioned on the samples of all the other variables. The information that you still have about the original variable values after resampling many times must have been redundant, i.e. contained in at least two variables. In practice, we probably don’t want such a loose definition of redundancy: what we care about is information that is highly redundant, i.e. present in many variables. This means we would resample several variables at a time.

In a later post, John proposed another potential formalization for natural abstractions, namely the minimal latent variable conditioned on which $X_1, \dots, X_n$ are all independent. He argues that these minimal latent variables only depend on the information conserved by resampling (see below for our summary of the argument).
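The single-variable resampling process can be simulated on a toy system. The model here is an assumption chosen so that the conditionals are trivial: each variable is a pair consisting of a bit shared by all variables and a private fair coin. Conditioned on the other variables, the shared bit is fully determined and the private coin is uniform.

```python
import random

def resample_once(state, rng):
    """Resample one randomly chosen variable conditioned on all the others."""
    i = rng.randrange(len(state))
    shared = state[(i + 1) % len(state)][0]        # any other variable reveals the shared bit
    new_state = list(state)
    new_state[i] = (shared, rng.randint(0, 1))     # the private coin is drawn afresh
    return new_state

def run(n_vars, steps, rng):
    """Draw an initial sample, resample `steps` times, return (initial, final)."""
    z = rng.randint(0, 1)
    initial = [(z, rng.randint(0, 1)) for _ in range(n_vars)]
    state = initial
    for _ in range(steps):
        state = resample_once(state, rng)
    return initial, state

rng = random.Random(0)
trials = [run(n_vars=5, steps=200, rng=rng) for _ in range(2000)]
# Fraction of trials in which variable 0 still agrees with its initial value.
shared_kept = sum(init[0][0] == final[0][0] for init, final in trials) / len(trials)
private_kept = sum(init[0][1] == final[0][1] for init, final in trials) / len(trials)
```

In this sketch the shared bit always survives, while the private coin agrees with its initial value only about half the time, i.e. at chance level. The shared bit is present in all five variables, so it would also survive resampling several variables at a time, as long as at least one variable is kept fixed.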

2c. In our universe, most information is not redundant

If most of the information in our universe was encoded highly redundantly, then claim 2a (natural abstractions are functions of redundant information) wouldn't be surprising. The additional claim that most information is not redundant is what makes 2a interesting. This is a more formal version of the background claim 0 that “our universe abstracts well”.

2d. Locality, noise, and chaos are the key mechanisms for most information not being redundant

Claim 2c raises a question: why should most information be non-redundant? This claim says the reason is roughly as follows:

A closely related claim is that the information which is redundantly represented must have been transmitted very faithfully, i.e. close to deterministically. Conversely, information that is transmitted faithfully is redundant, since it is contained in every layer.

Key Mathematical Developments and Proofs

(This section is more mathematically involved than the rest of the post. If you like, you can skip to the next section and still follow most of the remaining content.)

In this section, we describe the key mathematical developments from the natural abstractions program and describe how they all relate to redundant information. We start by formulating the telephone theorem, which is related to abstractions as information "relevant at a distance". Afterward, we explain in more detail how redundant information can be defined as resampling-invariant information, and describe why information at a distance is expected to be a function of redundant information. We continue with the definition of abstraction as minimal latent variables and why they are also expected to be functions of redundant information. All of this together supports claims 2a and 2b from earlier.

Finally, we discuss the generalized Koopman-Pitman-Darmois theorem (KPD) and how it was originally conjectured to be connected to redundant information. Note that based on private communication with John, it is currently unclear how relevant generalized KPD is to abstractions.

This section is meant to strike a balance between formalization and ease of exposition, so we only give proof sketches here. The full definitions and proofs for the telephone theorem and generalized KPD can be found in our accompanying pdf. We will discuss on a more conceptual level how the results here fit together later.

Epistemic status: We have carefully formalized the proofs of the telephone theorem and the generalized KPD theorem, with only some regularity conditions to be further clarified for the latter. For the connection between redundant information and the telephone theorem, and also the minimal latents approach, we present our understanding of the original arguments but believe that there is more work to be done to have precisely formalized theorems and proofs. We note some of that work in the appendix.

The Telephone Theorem

An early result in the natural abstractions agenda was the telephone theorem, which was proven before the framework settled on redundant information. In this theorem, the abstractions are defined as limits of minimal sufficient statistics along a Markov chain, which we now explain in more detail:

A sufficient statistic of a random variable Y for the purpose of predicting X is, roughly speaking, a function f(Y) that contains all the available information for predicting X:

$$P(X \mid Y) = P(X \mid f(Y)).$$

If $X$ and $Y$ are variables in the universe and very "distant" from each other, then there is usually not much predictable information available, which means that $f(Y)$ can be "small" and might be thought of as an "abstraction".
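As a small illustration of how a sufficient statistic can be much smaller than the variable it summarizes, the sketch below uses a hypothetical joint distribution in which Y consists of two bits but only the first one matters for X. It takes f(y) to be the conditional distribution of X given Y = y (the choice used for the telephone theorem later on) and counts how many distinct values f takes.

```python
from collections import defaultdict

def conditional_of_x_given_y(joint):
    """Return {y: {x: P(X=x | Y=y)}} for a joint distribution {(x, y): prob}."""
    p_y = defaultdict(float)
    for (x, y), p in joint.items():
        p_y[y] += p
    cond = defaultdict(dict)
    for (x, y), p in joint.items():
        cond[y][x] = p / p_y[y]
    return dict(cond)

# Toy joint (an assumption): Y = (a, b); X equals a with probability 0.9; b is irrelevant to X.
joint = {}
for a in (0, 1):
    for b in (0, 1):
        for x in (0, 1):
            joint[(x, (a, b))] = 0.25 * (0.9 if x == a else 0.1)

cond = conditional_of_x_given_y(joint)
# f(y) := P(X | Y=y), stored as a hashable tuple of rounded probabilities.
f = {y: tuple(round(cond[y][x], 12) for x in (0, 1)) for y in cond}
distinct_summaries = set(f.values())
```

Here Y takes four values but f(Y) takes only two: the irrelevant bit is thrown away while everything needed to predict X is kept.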

Now, the telephone theorem describes how these summary statistics behave along a Markov chain when chosen to be "minimal". For more details, especially about the proof, see the accompanying pdf.

Theorem (The telephone theorem). For any Markov chain $X_0 \to X_1 \to \dots$ of random variables $X_t : \Omega \to \mathcal{X}_i$ that are either discrete or absolutely continuous, there exists a sequence of measurable functions $f_1, f_2, \dots$, where $f_t : \mathcal{X}_i \to \mathbb{R}^{X_0(\Omega)}$, such that:

Concretely, we can pick $f_t(X_t) := P(X_0 \mid X_t)$ as the minimal sufficient statistic.

Proof sketch. $f_t(X_t) := P(X_0 \mid X_t)$ can be viewed as a random variable on $\Omega$ mapping $\omega \in \Omega$ to the conditional probability distribution

$$P(X_0 \mid X_t = X_t(\omega)) \in \mathbb{R}^{X_0(\Omega)}.$$

Then clearly, this satisfies the second property: if you know how to predict $X_0$ from the (unknown) $X_t(\omega)$, then you do just as well in predicting $X_0$ as if you know $X_t(\omega)$ itself:

$$P(X_0 \mid X_t(\omega)) = P\big(X_0 \mid P(X_0 \mid X_t = X_t(\omega))\big) = P\big(X_0 \mid f_t(X_t) = f_t(X_t(\omega))\big)$$

For the first property, note that the mutual information $I(X_0; X_t)$ decreases across the Markov chain, but is also bounded from below by 0 and thus eventually converges to a limit information $I_\infty$. Thus, for any $\epsilon > 0$, we can find a $T$ such that for all $t \geq T$ and $k \geq 0$ the differences in mutual information are bounded by $\epsilon$:

$$\epsilon > |I(X_0; X_t) - I(X_0; X_{t+k})| = |I(X_0; X_t, X_{t+k}) - I(X_0; X_{t+k})| = |I(X_0; X_t \mid X_{t+k})|.$$

In the second step, we used that $X_0 \to X_t \to X_{t+k}$ forms a Markov chain, and the final step is the chain rule of mutual information. Now, the latter mutual information is just a KL divergence:

$$D_{KL}\big(P(X_0, X_t \mid X_{t+k}) \,\|\, P(X_0 \mid X_{t+k}) \cdot P(X_t \mid X_{t+k})\big) < \epsilon.$$

Thus, "approximately" (with the detailed arguments involving the correspondence between KL divergence and total variation distance) we have the following independence:

$$P(X_0, X_t \mid X_{t+k}) \approx P(X_0 \mid X_{t+k}) \cdot P(X_t \mid X_{t+k}).$$

By the chain rule, we can also decompose the left conditional in a different way:

$$P(X_0, X_t \mid X_{t+k}) = P(X_0 \mid X_t, X_{t+k}) \cdot P(X_t \mid X_{t+k}) = P(X_0 \mid X_t) \cdot P(X_t \mid X_{t+k}),$$

where we have again used the Markov chain $X_0 \to X_t \to X_{t+k}$ in the last step. Equating the two expansions of the conditional and dividing by $P(X_t \mid X_{t+k})$, we obtain

$$f_t(X_t) = P(X_0 \mid X_t) \approx P(X_0 \mid X_{t+k}) = f_{t+k}(X_{t+k}).$$

By being careful about the precise meaning of these approximations, one can then show that the sequence $f_t(X_t)$ indeed converges in probability. □
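The convergence of the mutual information used in the proof sketch can be checked numerically on a small chain. The chain below is a hypothetical example, not one taken from John's posts: each state is a pair of bits, where the first bit is copied exactly at every step and the second bit flips with probability 0.2. The initial state is assumed to be uniform.

```python
import math
from itertools import product

STATES = list(product((0, 1), repeat=2))   # (conserved bit c, noisy bit s)
FLIP = 0.2                                 # per-step flip probability of s

def step_prob(a, b):
    """One-step transition probability: c is copied exactly, s flips with prob FLIP."""
    if a[0] != b[0]:
        return 0.0
    return FLIP if a[1] != b[1] else 1.0 - FLIP

def mutual_information_x0_xt(t):
    """I(X0; Xt) in bits for uniform X0, by propagating P(Xt | X0) for t steps."""
    cond = {a: {b: float(a == b) for b in STATES} for a in STATES}
    for _ in range(t):
        cond = {a: {b: sum(cond[a][m] * step_prob(m, b) for m in STATES)
                    for b in STATES} for a in STATES}
    p0 = 1.0 / len(STATES)
    p_t = {b: sum(p0 * cond[a][b] for a in STATES) for b in STATES}
    return sum(p0 * cond[a][b] * math.log2(cond[a][b] / p_t[b])
               for a in STATES for b in STATES if cond[a][b] > 0)

info = [mutual_information_x0_xt(t) for t in (0, 1, 5, 50)]
```

The mutual information starts at two bits, never increases, and levels off at one bit: the information about the noisy bit is washed out, and what remains is exactly the faithfully transmitted bit. In this example the limiting summary depends only on the conserved bit.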

Abstractions as Redundant Information

The following is a semiformal summary of Abstractions as Redundant Information. We explain how to define redundant information as resampling-invariant information and why the abstractions f∞ from the telephone theorem are expected to be a function of redundant information.

More Details on Redundant information as resampling-invariant information

The setting is a collection X1,…,XN of random variables. The idea is that redundantly encoded information should be recoverable even when repeatedly resampling individual variables. This is, roughly, formalized as follows:

Let $X^0 = (X_1, \dots, X_N)$ be the original collection of variables and denote by $X^1, X^2, \dots, X^t, \dots$ collections of variables $X^t_1, \dots, X^t_N$ that iteratively emerge from the previous time step $t-1$ as follows: choose a resampling index $i \in \{1, \dots, N\}$, keep the $N-1$ variables $X^{t-1}_{\neq i}$ fixed and resample the remaining variable $X^{t-1}_i$ conditioned on the fixed variables. The index $i$ of the variable to be resampled is thereby (possibly randomly) changed for each time step $t$. As discussed in the related work section, this is essentially Gibbs sampling.

Let $X^\infty$ be the random variable this process converges to.[3] Then the amount of redundant information in $X^0$ is defined to be the mutual information between $X^0$ and $X^\infty$:

$$\mathrm{RedInfo}(X^0) := \mathrm{MI}(X^0; X^\infty).$$

Ideally, one would also be able to mathematically construct an object that contains the redundant information. One option is to let $F$ be a sufficient statistic of $X^0$ for the purpose of predicting $X^\infty$:

$$P(X^\infty \mid X^0) = P(X^\infty \mid F(X^0)).$$

Then one indeed obtains $\mathrm{RedInfo}(X^0) = \mathrm{MI}(F(X^0); X^\infty)$. Concretely, one can choose $F(X^0) := P(X^\infty \mid X^0)$, which is a minimal sufficient statistic as explained in the above proof sketch of the telephone theorem.
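The resampling definition can be tried out exactly on a tiny discrete system. The sketch below is an illustration under stated assumptions, not the construction from the original posts: variables are binary, the resampling index cycles through the variables in a fixed order, and $X^\infty$ is approximated by a large but finite number of sweeps. The example distribution (three copies of a fair bit, each flipped with probability `eps`, plus one independent fair bit) and all function names are hypothetical.

```python
import itertools
import numpy as np

def mutual_information_bits(joint):
    """Mutual information (in bits) of a 2-D joint probability table."""
    px = joint.sum(axis=1, keepdims=True)
    py = joint.sum(axis=0, keepdims=True)
    mask = joint > 0
    return float(np.sum(joint[mask] * np.log2(joint[mask] / (px * py)[mask])))

def gibbs_kernel(p, i):
    """Transition matrix for resampling variable i given all the others.

    p is a joint table of shape (2,) * N; states are ordered as in p.ravel().
    """
    n = p.ndim
    states = list(itertools.product(range(2), repeat=n))
    index = {s: k for k, s in enumerate(states)}
    marg = p.sum(axis=i, keepdims=True)  # P(x_{not i})
    K = np.zeros((len(states), len(states)))
    for s in states:
        rest = list(s)
        rest[i] = 0
        m = marg[tuple(rest)]
        if m == 0:
            # Conditioning event has probability zero; the state is never
            # visited from the support, so a self-loop is a harmless filler.
            K[index[s], index[s]] = 1.0
            continue
        for v in range(2):
            s2 = list(s)
            s2[i] = v
            K[index[s], index[tuple(s2)]] = p[tuple(s2)] / m
    return K

def redundant_information(p, sweeps=500):
    """Approximate MI(X^0; X^infinity) using `sweeps` full resampling sweeps."""
    n = p.ndim
    sweep = np.eye(2 ** n)
    for i in range(n):  # assumption: fixed resampling order 0, 1, ..., N-1
        sweep = sweep @ gibbs_kernel(p, i)
    joint = np.diag(p.ravel()) @ np.linalg.matrix_power(sweep, sweeps)
    return mutual_information_bits(joint)

def copies_plus_independent_bit(eps, n_copies=3):
    """n_copies eps-noisy copies of a fair bit B, plus one independent fair bit."""
    n = n_copies + 1
    p = np.zeros((2,) * n)
    for s in itertools.product(range(2), repeat=n):
        prob = 0.0
        for b in range(2):
            flips = sum(c != b for c in s[:n_copies])
            prob += 0.5 * eps ** flips * (1 - eps) ** (n_copies - flips)
        p[s] = 0.5 * prob  # the last variable is an independent fair bit
    return p

red_exact = redundant_information(copies_plus_independent_bit(0.0))
red_noisy = redundant_information(copies_plus_independent_bit(0.1))
print("exact copies:", red_exact, "bits; noisy copies:", red_noisy, "bits")
```

With exact copies, the shared bit survives any amount of resampling while the independent bit is wiped out. With noisy copies, the chain has full support and mixes, so in the infinite limit nothing survives; this is one way to see why the finite and approximate regimes matter for this definition.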

Telephone Abstractions are a Function of Redundant Information

Imagine that we "cluster together" some of the variables $X^0_i$ into variables $B_1, B_2, \dots$ that together form a Markov chain $B_1 \to B_2 \to \dots$. Each $B_j$ contains possibly several of the variables $X^0_i$ in a non-overlapping way and such that the Markov chain property holds. One example often used by John is that the variables $B_j$ form a sequence of growing Markov blankets in a causal model of variables $X^0_i$. For all $j < k$, all the information in $B_j$ then has to pass through all intermediate blankets to reach $B_k$, which results in the Markov chain property. Then from the telephone theorem one obtains an "abstract summary" of $B_1$ given by a limit variable $f_\infty$.

Now, let F(X0) be the variable containing all the redundant information from earlier. Then the claim is that this contains f∞ for any choice of a Markov chain B1→B2→… above, i.e., f∞=G(F(X0)) for some suitable function G.

Theorem (Informal). We have f∞=G(F(X0)) for some function G that depends on the choice of the Markov chain B1→B2→…

Proof Sketch. Note that we did not formalize this proof sketch and thus can't be quite sure that this claim can be proven (see appendix for some initial notes). The original proof does not contain many more details than our sketch.

The idea is that $F(X^0)$ contains all information that is invariant under resampling. Thus, it is enough to show that $f_\infty$ is invariant under resampling as well. Crucially, if you resample a variable $X_i$, then this will either not be contained in any of the variables $B_1, B_2, \dots$ at all, which leaves $f_\infty$ invariant, or it will be contained in only one variable $B_j$. But for $T > j$, the variable $B_T$ is kept fixed in the resampling and we have $\lim_{T \to \infty} f_T(B_T) = f_\infty$ by the construction of $f_\infty$ detailed in the telephone theorem. Thus, $f_\infty$ remains invariant in this process. □

Minimal Latents as a Function of Redundant Information

Another approach is to define abstractions by a minimal latent variable, i.e., the "smallest" function $\Lambda^*(X^0)$ that makes all the variables in $X^0$ conditionally independent:

$$P(X^0 \mid \Lambda^*) = \prod_{i=1}^{N} P(X^0_i \mid \Lambda^*).$$

To be the "smallest" of these functions means that for any other random variable $\Lambda$ with the independence property, $\Lambda^*$ only contains information about $X^0$ that is also in $\Lambda$, meaning one has the following Markov chain:

$$\Lambda^* \to \Lambda \to X^0.$$

How is $\Lambda^*$ connected to redundant information? Note that $X^0_{\neq i}$ is, for each $i$, also a variable making all the variables in $X^0$ conditionally independent, and so $\Lambda^*$ fits due to its minimality (by definition) in a Markov chain as follows:

$$\Lambda^* \to X^0_{\neq i} \to X^0.$$

But this means that $\Lambda^*$ will be preserved when resampling any one variable in $X^0$, and thus, $\Lambda^*$ contains only redundant information of $X^0$. Since $F(X^0)$ contains all redundant information of $X^0$, we obtain that $\Lambda^* = G(F(X^0))$ for some function $G$. This is an informal argument and we would like to see a more precise formalization of it.
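The step that conditioning on all variables except the $i$-th makes the whole collection conditionally independent can be spelled out as follows. This is a sketch for the discrete case, under the assumption that the conditioning event has positive probability; the symbols $x$ and $x'$ for realized values are introduced here for illustration only.

```latex
% Assumption: discrete variables, and P(X^0_{\neq i} = x'_{\neq i}) > 0.
% Once all variables other than the i-th are fixed, each of them is
% deterministic, so its conditional distribution is an indicator.
\begin{align*}
P\big(X^0 = x \,\big|\, X^0_{\neq i} = x'_{\neq i}\big)
  &= P\big(X^0_i = x_i \,\big|\, X^0_{\neq i} = x'_{\neq i}\big)
     \cdot \prod_{j \neq i} \mathbb{1}[x_j = x'_j] \\
  &= \prod_{j=1}^{N} P\big(X^0_j = x_j \,\big|\, X^0_{\neq i} = x'_{\neq i}\big),
\end{align*}
% using P(X^0_j = x_j | X^0_{\neq i} = x'_{\neq i}) = 1[x_j = x'_j] for j \neq i.
```

So the conditional joint distribution is a product of the conditional marginals, which is exactly the independence property required of a latent.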

The Generalized Koopman-Pitman-Darmois Theorem

This section describes the generalized Koopman-Pitman-Darmois theorem (gKPD) at a high level. The one-sentence summary is that if there is a low-dimensional sufficient statistic of a sparsely connected system $X = (X_1, \dots, X_n)$, then "most" of the variables in the distribution $P(X)$ should be of the exponential family form. This would be nice since the exponential family has many desirable properties.

We will first formulate an almost formalized version of the theorem. The accompanying pdf contains more details on regularity conditions and the spaces the parameters and values "live" in. Afterward, we explain what the hope was for how this connects to redundant information, as described in more detail in Maxent and Abstractions. John has recently told us that the proof for this maxent connection that he hoped to work out according to his 2022 plan update is incorrect and that he currently has no further evidence for it to be true in the stated form.

An almost formal formulation of generalized KPD

We formulate this theorem in slightly more generality than in the original post to reveal the relevant underlying structure. This makes it clear that it applies to both Bayesian networks (already done by John) and Markov random fields (not written down by John, but an easy consequence of his proof strategy).

Let $X = (X_1, \dots, X_n)$ be a collection of continuous random variables. Assume that its joint probability distribution factorizes when conditioning on the model parameters $\Theta$, e.g. as a Bayesian network or Markov random field. Formally, we assume there is a finite index set $I$ and neighbor sets $N_i \subseteq \{1, \dots, n\}$ for $i \in I$, together with potential functions $\psi_i > 0$, such that

$$P(X \mid \Theta) = \prod_{i \in I} \psi_i(X_{N_i} \mid \Theta).$$

Here, $X_{N_i} := (X_j)_{j \in N_i}$.

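This covers both the case of Bayesian networks and Markov random fields. For a Bayesian network, take $I = \{1, \dots, n\}$, let $N_i$ consist of $i$ together with its parents, and set $\psi_i(X_{N_i} \mid \Theta) := P(X_i \mid X_{\mathrm{pa}(i)}, \Theta)$. For a Markov random field, let $I$ index the cliques of the undirected graph, let $N_i$ be the $i$-th clique, and let the $\psi_i$ be the clique potentials, with the normalization constant absorbed into them.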

Assume that we also have a prior P(Θ) on model parameters. Using Bayes rule, we can then also define the posterior P(Θ∣X).

Now, assume that there is a sufficient statistic $G$ of $X$ with values in $\mathbb{R}^D$ for $D \ll n$. As before, to be a sufficient statistic means that it summarizes all the information contained in the data that is useful for predicting the model parameters:

$$P(\Theta \mid X) = P(\Theta \mid G(X)).$$

The generalized KPD theorem says the following:

Theorem (generalized KPD (almost formal version)). There is:

such that the distribution P(X∣Θ) factorizes as follows:

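$$P(X \mid \Theta) = \frac{1}{Z(\Theta)} \cdot \exp\Big[ U(\Theta)^T \sum_{i \notin E} g_i(X_{N_i}) \Big] \cdot h(X_{N_{\bar{E}}}) \cdot \prod_{i \in E} \psi_i(X_{N_i} \mid \Theta).$$

Thereby, $\bar{E} := I \setminus E$ and $N_{\bar{E}} := \bigcup_{i \in \bar{E}} N_i$. $Z(\Theta)$ is thereby a normalization constant determined by the requirement that the distribution integrates to 1.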

Proof: see our pdf appendix.

The upshot of this theorem is as follows: from the existence of the low-dimensional sufficient statistic, one can deduce that $P(X \mid \Theta)$ is roughly of exponential family form, with the factors $\psi_i$ with $i \in E$ being the "exceptions" that cannot be expressed in simpler form. If $D \ll n$ and if each $N_i$ is also small, then it turns out that the number of exception variables $|N_E|$ (with $N_E := \bigcup_{i \in E} N_i$) is overall small compared to $n$, meaning the distribution may be easy to work with.
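As a sanity check on the shape of this factorization, consider the simplest special case. The example is our own illustration and is not taken from the original post or the pdf appendix: $n$ i.i.d. unit-variance Gaussians with unknown mean $\Theta$, which corresponds to $I = \{1, \dots, n\}$, $N_i = \{i\}$, and $\psi_i(X_i \mid \Theta) = \mathcal{N}(X_i; \Theta, 1)$. Here $G(X) = \sum_i X_i$ is a sufficient statistic with $D = 1$, and no exceptions are needed ($E = \emptyset$).

```latex
% Illustrative special case (an assumption of this example, not from the source):
% X_1, ..., X_n i.i.d. ~ N(\Theta, 1), so E = \emptyset and D = 1.
\begin{align*}
P(X \mid \Theta)
  &= \prod_{i=1}^{n} \frac{1}{\sqrt{2\pi}}
     \exp\!\Big[-\tfrac{1}{2}(X_i - \Theta)^2\Big] \\
  &= \underbrace{e^{-n\Theta^2/2}}_{1/Z(\Theta)}
     \cdot \exp\!\Big[\underbrace{\Theta}_{U(\Theta)}
       \sum_{i=1}^{n} \underbrace{X_i}_{g_i(X_{N_i})}\Big]
     \cdot \underbrace{(2\pi)^{-n/2}
       \exp\!\Big[-\tfrac{1}{2}\sum_{i=1}^{n} X_i^2\Big]}_{h(X_{N_{\bar{E}}})}.
\end{align*}
% The product over i \in E is empty and therefore equal to 1.
```

Each term matches one factor of the theorem's form: $Z(\Theta) = e^{n\Theta^2/2}$, $U(\Theta) = \Theta$, $g_i(X_i) = X_i$, and $h$ collects everything that does not depend on $\Theta$. The content of the generalized theorem is that a form like this is forced, up to a small set of exceptions, whenever a low-dimensional sufficient statistic exists.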

The Speculative Connection between gKPD and Redundancy

As stated earlier, Maxent and Abstractions tries to connect the generalized KPD theorem to redundancy, and the plan update 2022 is hopeful about a proof. According to a private conversation with John, the proof turned out to be wrong. Let us briefly summarize this:

Let X factorize according to a sparse Bayesian network. Then, by replacing X with X∞, Θ with X0 and G(X∞) with the resampling-invariant information F(X∞) in the setting of the generalized KPD theorem, one can hope that:

With these properties, one could apply generalized KPD. The second property relies on the proposed factorization theorem whose proof is, according to John, incorrect. He told us that he currently believes that not only the proof of the maxent form is incorrect, but that there is an 80% chance of the whole statement being wrong.

How is the natural abstractions agenda relevant to alignment?

We’ve discussed the key claims of the natural abstractions agenda and the existing theoretical results. Now, we turn to the bigger picture and attempt to connect the claims and results we discussed to the overall research plan. This section represents our understanding of John’s views and there are places where we disagree—we will discuss those in the next section.

Four reasons to work on natural abstractions

We briefly discussed why natural abstractions might be important for alignment research in the Introduction. In this section, we will describe the connection in more detail and break it down into four components.

An important caveat: part of John's motivation is simply that abstractions seem to be a core bottleneck to various problems in alignment, and that connections beyond the four we list could appear in the future. So you can view the motivations we describe as the current key examples for the centrality of abstractions to alignment.

1. The Universality Hypothesis being true or false has strategic implications for alignment

If the Universality Hypothesis is true, and in particular if humans and AI systems both learn similar abstractions, this would make alignment easier in important ways. It would also have implications about which problems should be the focus of alignment research.

In an especially fortunate world, human values could themselves be natural abstractions learned by most AI systems, which would mean that even very simple hacky alignment schemes might work. More generally, if human values are represented in a simple way in most advanced AI systems, alignment mainly means pointing the AI at these values (for example by retargeting the search). On the other hand, if human values aren’t part of the AI’s ontology by default, viewing alignment as just “pointing” the AI at the right concept is a less appropriate framing.

Even if human values themselves turn out not to be natural abstractions, the Universality Hypothesis being true would still be useful for alignment. AIs would at least have simple internal representations of many human concepts, which should make approaches like interpretability much more likely to succeed. Conversely, if the Universality Hypothesis is false and we don’t expect AI systems to share human concepts by default, then we may for example want to put more effort into making AI use human concepts.

2. Defining abstractions is a bottleneck for agent foundations

When trying to define what it means for an “agent” to have “values”, we quickly run into questions involving abstractions. John has written a fictional dialogue about this: we might for example try to formalize “having values” via utility functions—but then what are the inputs to these utility functions? Clearly, human values are not directly a function of quantum wavefunctions—we value higher-level things like apples or music. So to formally talk about values, we need some account of what “higher-level things” are, i.e. we need to think about abstractions.

3. A formalization of abstractions would accelerate alignment research

For many central concepts in alignment, we currently don’t have robust definitions (“agency”, “search”, “modularity”, …). It seems plausible these concepts are themselves natural abstractions. If so, a formalization of natural abstractions could speed up the process of finding good formalizations for these elusive concepts. If we had a clear notion of what counts as a “good definition”, we could easily check any proposed definition of “agency” etc.—this would give us a clear and generally agreed upon paradigm for evaluating research.

This could be helpful to both agent foundations research (e.g. defining agency) and to more empirical approaches (e.g. a good definition of modularity could help understand neural networks).

Many of these abstractions in alignment seem closer to mathematical abstractions. These are not directly covered by the current work on natural abstractions. However, we might hope that ideas will transfer. Additionally, if mathematical abstractions are instantiated, they might become (“physical”) natural abstractions. For example, the Fibonacci sequence is clearly a mathematical concept, but it also occurs very often in nature so you might use it simply to compactly describe our world. Similarly, perhaps modularity is a natural abstraction when describing different neural networks.

4. Interpretability

In John’s view, the main challenge in interpretability is robustly identifying which things in the real world the internals of a network correspond to (for example that a given neuron robustly detects trees and nothing else). Current mechanistic interpretability research tries to find readable “pseudocode” for a network but doesn’t have the right approach to find these correspondences according to John:

A theory of abstractions would address this problem: natural abstractions are exactly about figuring out a good mathematical description for high-level interpretable structures in the environment. Additionally, knowing the “type signature” of abstractions would make it easier to find crisp abstractions inside neural networks: we would know more precisely what we are looking for.

We don’t have a good understanding of parts of this perspective (or disagree with our understanding of it)—we will discuss that more in the Discussion section.

How existing results fit into the larger plan

John developed the theoretical results we discussed above, such as the Telephone theorem, in the context of his plan to empirically test the natural abstraction hypothesis. Quoting him: