Ok, I take your bet for 2030. I win, you give me $1000. You win, I give you $3000. Want to propose an arbiter? (since someone else also took the bet, I'll get just half the bet, their $500 vs my $1500)

Shouldn't it be: 'They pay you $1,000 now, and in 3 years, you pay them back plus $3,000' (as per Bryan Caplan's discussion in the latest 80k podcast episode)? The money won't do anyone much good if they receive it in a FOOM scenario.

Since my goal is to convince people that I take my beliefs seriously, and this amount of money is not actually going to change much about how I conduct the next three years of my life, I'm not worried about the details. Also, I'm not betting that there will be a FOOM scenario by the conclusion of the bet, just that we'll have made frightening progress towards one.

For people reading this post in the future, I'd like to note that I have written a somewhat long comment describing my mixed feelings about this post, since posting it. You can find my comment here. But I'll also repeat it below for completeness:

The first thing I'd like to say is that we intended this post as a bet, and only a bet, and yet some people seem to be treating it as if we had made an argument. Personally, I am uncomfortable with the suggestion that our post was "misleading" because we did not present an affirmative case for our views.

I agree that LessWrong culture benefits from arguments as well as bets, but it seems a bit weird to demand that every bet come with an argument attached. A norm that all bets must come with arguments would seem to substantially dampen the incentives to make bets, because then each time people must spend what will likely be many hours painstakingly outlining their views on the subject.

That said, I do want to reply to people who say that our post was misleading on other grounds. Some said that we should have made different bets, or at different odds. In response, I can only say that coming up with good concrete bets about AI timelines is actua...

I might disagree with you epistemically but... what do I have to win if AGI happens before 2030 and I win the bet? I don't think either of us will still care about our bet after that happens. Doesn't this just run into all the standard problems of predicting doomsday?

Edit: Oh, I also just saw you meant 3:1 odds in your favor. That's... weird, since it doesn't even disagree with the OP? Why would the OP take the bet that you propose, given they only assign ~30% probability to this outcome?

Bryan Caplan and Eliezer are resolving their Doomsday bet by having Bryan Caplan pay Eliezer upfront, and if the doomsday scenario does not happen by Jan 1 2030, Eliezer will give Bryan his payout. It's a pretty neat method for betting on doomsday.

Why would the OP take the bet that you propose, given they only assign ~30% probability to this outcome?

The conditions we offered fall well short of AGI, so it seems reasonable that the author would assign way more than 30% to this outcome. Furthermore, we offered a 1:1 bet for January 1st 2026.

Edit: The OP also says, "Crying wolf isn't really a thing here; the societal impact of these capabilities is undeniable and you will not lose credibility even if 3 years from now these systems haven't yet FOOMed, because the big changes will be obvious and you'll have predicted that right." which seems to imply that we will likely obtain very impressive capabilities within 3 years. In my opinion, this statement is directly challenged by our 1:1 bet.

Hmm, I guess it's just really not obvious that your proposed bet here disagrees with the OP. I think I roughly believe both the things that the OP says, and also wouldn't take your bet. It still feels like a fine bet to offer, but I am confused why it's phrased so much in contrast to the OP. If you are confident we are not going to see large dangerous capability gains in the next 5-7 years, I think I would much prefer you make a bet that tries to offer corresponding odds and the specific capability gains (though that runs a bit into "betting on doomsday" problems)

If you are confident we are not going to see large dangerous capability gains in the next 5-7 years, I think I would much prefer you make a bet that tries to offer corresponding odds and the specific capability gains (though that runs a bit into "betting on doomsday" problems)

What are the "specific capability gains" you are referring to? I don't see any specific claims in the post we are responding to. By contrast, we listed 7 concrete tasks that appear trivial to perform if we are at AGI-levels of capability, and very easy if we are only a few steps from AGI. I'd be genuinely baffled if you think AGI can be imminent at the same time we still don't have good self-driving cars, robots that can wash dishes, or AI capable of doing well on mathematics word problems. This view would seem to imply that we will get AGI pretty much out of nowhere.

If you expect the apocalypse to happen by a given date, you should rationally value having money then much less than the market (if the market doesn't expect the apocalypse). So you can simulate a bet by having an apocalypse-expecter take a high-interest-rate loan from an apocalypse-denier, paying the loan back (if the world survives) at the date of the purported apocalypse (h/t Daniel Filan).
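To make the loan framing concrete, here is a minimal sketch that computes the annually compounded interest rate implied by a pay-now, repay-later bet. The numbers are the ones quoted earlier in the thread ($1,000 now, repaid plus $3,000 after 3 years); the function name and the choice of annual compounding are my own assumptions, not something the commenters specified.

```python
def implied_annual_rate(principal: float, repayment: float, years: float) -> float:
    """Annually compounded rate that grows `principal` into `repayment` over `years`.

    Hypothetical helper for illustration; assumes annual compounding.
    """
    if principal <= 0 or repayment <= 0 or years <= 0:
        raise ValueError("principal, repayment and years must be positive")
    return (repayment / principal) ** (1.0 / years) - 1.0


# Terms quoted in the thread: receive $1,000 now, repay $1,000 + $3,000 in 3 years.
rate = implied_annual_rate(1_000, 4_000, 3)
print(f"implied annual rate: {rate:.1%}")
```

On these terms the implied rate is a bit under 59% per year, which is the sense in which the loan is "high-interest": only someone who heavily discounts money at the repayment date would accept it.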

I want to state here that I regret my previous post, and have retracted it, primarily because it was not constructive and I think this post does an excellent job of calling out what a specific constructive dialogue looks like.

Of the above, the only ones that seem likely to me in the world I was imagining are MMLU and APPS - I'm much less familiar with the two math competitions, which seem like the other plausible ones.

I think I'll take you up on the 2026 version at 1:1 odds.

Is it really constructive? This post presents no arguments for why they believe what they believe, which should serve very little to convince others of long timelines. Moreover, it proposes a bet from an asymmetric position that is very undesirable for short-timeliners to take, since money is worth nothing to the dead, and even in the weird world where they win the bet and are still alive to settle it, they have locked their money for 8 years for a measly 33% return - less than expected by simply, say, putting it in index funds. Believing in longer timelines gives you the privilege of signalling epistemic virtue by offering bets like this from a calm, unbothered position, while people sounding the alarm sound desperate and hasty, but there is no point in being calm when a meteor is coming towards you, and we are much better served by using our money to do something now rather than locking it in a long term bet.

Not only that, the decision from mods to push this to the frontpage is questionable since it served as a karma boost to this post that the other didn't have, possibly giving the impression of higher support than it actually has.

A retrospective on this bet:

Having thought about each of these milestones more carefully, and having already updated towards short timelines months ago, I think it was really bad in hindsight to make this bet, even on medium-to-long timeline views. Honestly, I'm surprised more people didn't want to bet us, since anyone familiar with the relevant benchmarks probably could have noticed that we were making quite poor predictions.

I'll explain what I mean by going through each of these milestones individually,

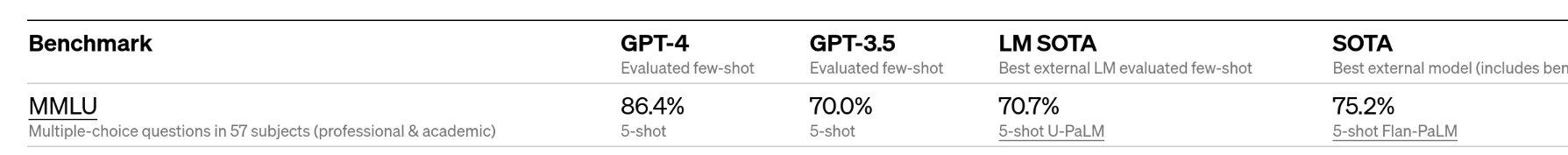

- "A model/ensemble of models achieves >80% on all tasks in the MMLU benchmark"

- The trend on this benchmark suggests that we will reach >90% performance within a few years. You can get 25% on this benchmark by guessing randomly (previously I thought it was 20%), so a score of 80% would not even indicate high competency at any given task.

- "A credible estimate reveals that an AI lab deployed EITHER >10^30 FLOPs OR hardware that would cost $1bn if purchased through competitive cloud computing vendors at the time on a training run to develop a single ML model (excluding autonomous driving efforts)"

- The trend was for compute to double every six months. Plugging in the relevant numbe
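On the MMLU item above: one way to see why 80% is less impressive than it sounds is to rescale accuracy so that random guessing maps to 0 and a perfect score to 1. This is a minimal sketch, assuming four answer choices per question (consistent with the 25% guessing baseline mentioned above); the function name is hypothetical.

```python
def chance_adjusted(accuracy: float, num_choices: int) -> float:
    """Rescale accuracy so random guessing scores 0.0 and perfect accuracy scores 1.0."""
    if num_choices < 2:
        raise ValueError("need at least two answer choices")
    chance = 1.0 / num_choices  # expected accuracy from uniform random guessing
    return (accuracy - chance) / (1.0 - chance)


# Assumption: 4 answer choices, matching the 25% random-guess baseline.
adjusted = chance_adjusted(0.80, 4)
print(f"chance-adjusted score at 80% raw accuracy: {adjusted:.1%}")
```

Under that assumption, a raw 80% corresponds to roughly 73% of the distance between guessing and a perfect score.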

I am also willing to take your bet for 2030.

I would propose one additional condition: If there is evidence of a deliberate or coordinated slowdown on AGI development by the major labs, then the bet is voided. I don't expect there will be such a slowdown, but I'd rather not be invested in it not happening.

I think this post is epistemically weak (which does not mean I disagree with you):

- Your post pushes the claim that acting on “It's time for EA leadership to pull the short-timelines fire alarm.” wouldn't be wise. Problems in the discourse: (1) "pulling the short-timelines fire alarm" isn't well-defined in the first place, (2) there is a huge inferential gap between "AGI won't come before 2030" and "EA shouldn't pull the short-timelines fire alarm" (which could mean something like: EA should start planning a Manhattan Project for aligning AGI in the next few years), and (3) your statement "we are concerned about a view of the type expounded in the post causing EA leadership to try something hasty and ill-considered", which slightly addresses that inferential gap, is just a bad rhetorical method where you interpret what the other person said in a very extreme and bad way, although they actually didn't mean that, and you are definitely not seriously considering the pros and cons of taking more initiative. (Though of course it's not really clear what "taking more initiative" means, and critiquing the other post (which IMO was epistemically very bad) would be totally right.)

- You're not gi

Personal update:

The recent breakthrough on the MATH dataset has made me update substantially in the direction of thinking I’ll lose the bet. I’m now at about 50% chance of winning by 2026, and 25% chance of winning by 2030.

That said, I want others to know that, for the record, my update mostly reflects that I now think MATH is a relatively easy dataset, and my overall AGI median only advanced by a few years.

Previously, I relied quite heavily on statements that people had made about MATH, including the authors of the original paper, who indicated it was a difficult dataset full of high school “competition-level” math word problems. However, two days ago I downloaded the dataset and took a look at the problems myself (as opposed to the cherry-picked problems I saw people blog about), and I now understand that a large chunk of the dataset includes simple plug-and-chug and evaluation problems—some of them so simple that Wolfram Alpha can perform them. What’s more: the previous state of the art model, which was touted as achieving only 6.9%, was simply a fine-tuned version of GPT-2 (they didn’t fine-tune anything larger), which makes it very unsurprising that the prior SOTA was so low.

I...

I'll happily emulate Matthew Barnett's and Tamay's bet for any interested counter-bettors, at pretty much any volume with substantially better odds (for you). I have a lot of vouches and am willing to use a middleman/mediator if necessary. The best way to contact me is on Discord at PTB kao#2111

Mod note: there's some weirdness about this post being frontpage, and the post it's responding to being on personal blog. I'm not 100% sure of my preferred call, but, the previous post seemed to primarily be arguing a community-centric-political point, and this one seems more to be making a straightforward epistemic claim. (I haven't checked in with other moderators about their thoughts on either post)

I frontpaged it because I am very excited about bets on timelines/takeoff speeds. I do think the title and framing about what EA leadership should do is not really a good fit for frontpage, and (for frontpage) I would much prefer a post title that's something like "A Concrete Bet Offer To Those With Short-Timelines".

I would much prefer a post title that's something like "A Concrete Bet Offer To Those With Short-Timelines".

Thanks, I more-or-less adopted this exact title. I hope that makes things look a bit better.

If the aim is for non-takeup of this bet to provide evidence against short timelines, I think you'd need to change the odds significantly: conditional on short timelines, future money is worth very little. Better to have an extra $1000 in a world where it's still useful than in one where it may already be too late.

Update: We have taken the bet with 2 people.

First: we have taken the 1:1 bet for January 1st 2026 with Tomás B. at our $500 to his $500.

Second: we have taken the 3:1 bet for January 1st 2030 with Nathan Helm-Burger at our $500 to his $1500.

Personal note

Just as a personal note (I'm not speaking for Tamay here), I expect to lose the 2030 bet with >50% probability. I took it because it has positive EV on my view, though not as much as I believed when I first drafted the bet. I also disagree with comments here that state that these bets imply that I have short timelines. I think there's a huge gap between AI performing well on benchmarks, and AI having a large economic splash in the real world.
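For readers puzzled by "expect to lose with >50% probability" and "positive EV" in the same breath, here is a minimal sketch of the arithmetic for the 2030 bet as stated above (our $500 against his $1500). The 40% win probability in the example is purely illustrative and not a figure anyone in the thread stated; the function names are hypothetical.

```python
def break_even_probability(stake: float, payout: float) -> float:
    """Win probability at which the bet has zero expected value."""
    return stake / (stake + payout)


def expected_value(p_win: float, stake: float, payout: float) -> float:
    """Expected profit: win `payout` with probability p_win, else lose `stake`."""
    if not 0.0 <= p_win <= 1.0:
        raise ValueError("p_win must be a probability")
    return p_win * payout - (1.0 - p_win) * stake


# 2030 bet terms from the update above: risk $500 to win $1500.
stake, payout = 500.0, 1500.0
print(f"break-even win probability: {break_even_probability(stake, payout):.0%}")

# Illustrative only: a 40% chance of winning is below 50% but still positive EV.
print(f"EV at a 40% win probability: ${expected_value(0.40, stake, payout):.2f}")
```

At 3:1 odds the break-even point is a 25% chance of winning, so any credence between 25% and 50% is both "more likely than not to lose" and positive in expectation.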

Here, we mostly focused on benchmarks because I think these metrics are fairly neutral markers between takeoff views. By this I mean that I expect fast-takeoff folks to think that AI will do well on benchmarks before we get to AGI, even if they think AI will have roughly zero economic impact before then. Since I wanted my bet to be applicable to people without slow-takeoff views, we went with benchmarks.

I think this would be more informative for the community if we had answers to the following questions here:

- What are the AI states of the art on these problems?

- How have the SoTAs changed over time?

- What is human performance on these problems (top human performance, average, any other statistics, the whole distribution, etc., whichever seems most useful)?

(Anyone can answer, and feel free to provide only partial information. I'm guessing the authors have a lot of this info handy already.)

Some questions/clarifications about the bet terms:

A robot that can, from beginning to end, reliably wash dishes, take them out of an ordinary dishwasher and stack them into a cabinet, without breaking any dishes, and at a comparable speed to humans (<120% the average time)

The dishwasher is the one actually washing the dishes, right, not the robot? The robot just needs to load the dishwasher, run it, and then unload it fast enough and without breaking anything?

Tesla’s full-self-driving capability makes fewer than one major mistake per 100,000 miles

Can we modify this...

Interesting MATH update: https://ai.googleblog.com/2022/06/minerva-solving-quantitative-reasoning.html

Matthew, Tamay: Refreshing post, with actual hard data and benchmarks. Thanks for that.

My predictions:

- A model/ensemble of models achieves >80% on all tasks in the MMLU benchmark

No in 2026, no in 2030. Mainly due to the fact that we don't have much structured data and incentives to solve some of the categories. A powerful unsupervised AI would be needed to clear those categories, or more time.

- A credible estimate reveals that an AI lab deployed EITHER >10^30 FLOPs OR hardware that would cost $1bn if purchased through competitive cloud computing ve

I'll just note that several of these bets don't work as well if I expect discontinuous &/or inconsistently distributed progress, as was observed on many individual tasks in PaLM: https://twitter.com/LiamFedus/status/1511023424449114112 (obscured by % performance & by the top-level benchmark averaging 24 subtasks that spike at different levels of scaling)

I might expect performance just prior to AGI to be something like 99% 40% 98% 80% on 4 subtasks, where parts of the network developed (by gd) for certain subtasks enable more general capabilities
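A small sketch of why this matters for a criterion phrased as ">80% on all tasks": with the four hypothetical subtask scores from the comment above, the average sits just under the threshold while the per-task picture is very uneven. The function and key names here are my own, chosen for illustration.

```python
from statistics import mean


def summarize(scores: list[float], threshold: float) -> dict:
    """Compare an average-based view with an every-subtask-must-pass view."""
    return {
        "mean": mean(scores),
        "min": min(scores),
        "num_above": sum(s > threshold for s in scores),
        "all_above": all(s > threshold for s in scores),
    }


# Hypothetical subtask scores taken from the comment above.
result = summarize([0.99, 0.40, 0.98, 0.80], threshold=0.80)
print(result)
```

Here two subtasks are far above the bar, one is exactly at it (so it does not strictly exceed it), and one is far below, so an "all tasks" criterion fails even though the model is near-saturated on half of them.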

A model/ensemble of models will achieve >90% on the MATH dataset using a no-calculator rule

Curious to hear if/how you would update your credence in this being achieved by 2026 or 2030 after seeing the 50%+ accuracy from Google's Minerva. Your prediction seemed reasonable to me at the time, and this rapid progress seems like a piece of evidence favoring shorter timelines.

A model/ensemble of models will achieve >90% on the MATH dataset using a no-calculator rule

A "no calculator rule". If the model is just a giant neural network, it is pretty clear what this means. (Although it's unclear why you should care; real-world neural nets are allowed to use calculators.) Over the general space of all AI techniques, it's unclear what this means.

...A robot that can, from beginning to end, reliably wash dishes, take them out of an ordinary dishwasher and stack them into a cabinet, without breaking any dishes, and at a comparable speed t

I would like to bet against you here, but it seems like others have beaten me to the punch. Are you planning to distribute your $1000 on offer across all comers by some date, or did I simply miss the boat?

Just noting that I think you're arguing strongly against what is at most a weak man argument. (And given that the author retracted the post, it might just be a straw-man.)

Super excited to see the offers to bet, though.

Just noting that I think you're arguing strongly against what is at most a weak man argument. (And given that the author retracted the post, it might just be a straw-man.)

Before we wrote the post, the OP had something like 140 karma. Also, it was only retracted after we posted.

"We (Tamay Besiroglu and I) think this claim is strongly overstated, and disagree with the suggestion that “It's time for EA leadership to pull the short-timelines fire alarm.” This post received a fair amount of attention, and we are concerned about a view of the type expounded in the post causing EA leadership to try something hasty and ill-considered."

What harm do you think will come if this happens and what do you think should be done instead?

Significant evidence for data contamination of MATH benchmark: https://arxiv.org/abs/2402.19450

for the record I think all of those are going to happen by 2024 and I'm surprised you're willing to bet otherwise. other people already took the bet. but the improvements from geometric deep learning, conservation laws, diffusion models, 3D understanding, and recursive feedback on chip design are all moving very fast. embodiment is likely to be solved suddenly when the underlying models are structured correctly. I maintain my assertion from previous discussion that compute is the only limitation and that the deep learning community has now demonstrated that compute is the only thing stopping them. deep learning is certainly bumping up against a wall, but just like every other wall it has run into, it's just going to go around.

Reading the comments, it seems like the idea you’re presenting of giving concrete bets on timelines is a great one, but the details of implementation can definitely be improved, so that making such a bet is meaningful for an AI pessimist.

I haven't looked deeply at what the % on the ML benchmarks actually mean. On the one hand it would be a bit weird to me if in 2030 we still have not made enough progress on them, given the current rate. On the other hand, I trust the authors in that it should be AGI-ish to pass those benchmarks, and then I don't want to bet money on something far into the future if money might not matter as much then. (Also, without considering money mattering less or the fact that the money might not be delivered in 2030 etc., I think anyone taking the 2026 bet should take...

We will use inflation-adjusted 2022 US dollars.

Be aware that current inflation estimates are potentially distorted. It may be worth mentioning exactly what inflation estimate to use, lest you end up in a situation where this is true in some but not all estimates.

- A robot that can, from beginning to end, reliably wash dishes, take them out of an ordinary dishwasher and stack them into a cabinet, without breaking any dishes, and at a comparable speed to humans (<120% the average time)

Speed and ordinary dishwasher are pretty crucial here, right? Boston Dynamics claimed they could do this back in 2016, but much slower than the average human.

I agree with the need for "skin in the game", for mostly the same reasons as you, and I think the AI Alignment field is falling prey to the unilateralist's curse here.

Does the MATH dataset have the worst scaling laws of all these tasks? (and math/logic tasks in general?)

Hmm, two points:

First, $1000 is basically nothing these days, so no skin in the game. Something more leveraged would show that you are at least mildly serious.

Second, none of your benchmarks are FOOMy. I would go for something like "At least one ML/AI company has an AI writing essential algorithms" (possibly validated by humans before being deployed).

Should be pointed out that $1000 is no skin in the game to you. To some people I know, $1000 would have been nearly lifesaving at certain points in their lives.

[Update 4 (12/23/2023): Tamay has now conceded.]

[Update 3 (3/16/2023): Matthew has now conceded.]

[Update 2 (11/4/2022): Matthew Barnett now thinks he will probably lose this bet. You can read a post about how he's updated his views here.]

[Update 1: we have taken this bet with two people, as detailed in a comment below.]

Recently, a post claimed,

We (Tamay Besiroglu and I) think this claim is strongly overstated, and disagree with the suggestion that “It's time for EA leadership to pull the short-timelines fire alarm.” This post received a fair amount of attention, and we are concerned about a view of the type expounded in the post causing EA leadership to try something hasty and ill-considered.

To counterbalance this view, we express our disagreement with the post. To substantiate and make concrete our disagreement, we are offering to bet up to $1000 against the idea that we are in the “crunch-time section of a short-timelines”.

In particular, we are willing to bet at 1:1 odds that no more than one of the following events will occur by 2026-01-01, or alternatively, 3:1 odds (in our favor) that no more than one of the following events will occur by 2030-01-01.

Since we recognize that betting incentives can be weak over long time-horizons, we are also offering the option of employing Tamay’s recently described betting procedure in which we would enter a series of repeated 2-year contracts until the resolution date.

Specific criteria for bet resolution

For each task listed above, we offer the following concrete resolution criteria.

Some clarifications

For each benchmark, we will exclude results that employed some degree of cheating. Cheating includes cases in which the rules specified in the original benchmark paper are not followed, or cases where some of the test examples were included in the training set.