Something that seems fairly important is the ability to mark your own answer before seeing the others, to avoid anchoring. (I don't know that everyone should be forced to do this, but it seems useful to at least have the option. I noticed myself getting heavily anchored by some of the current question's existing answers.)

Another feature we launched at the same time as this: Metaculus embeds!

Just copy and paste any link to a Metaculus question into the editor, and it will automatically expand into the preview above (you can always undo the transformation with CTRL+Z).

GreaterWrong will now display Metaculus embeds created via the LessWrong editor.

(You can’t create Metaculus embeds via GreaterWrong… yet.)

I wish I could set my user settings so that I didn't see everybody else's predictions before making my own.

Jim actually built that setting this afternoon! My guess is we will probably merge it sometime tomorrow, as long as we don't run into any more problems.

An update: We've set up a way to link your LessWrong account to your Elicit account. By default, all your LessWrong predictions will show up in Elicit's binary database, but you can't add notes or filter for your predictions.

If you link your accounts, you can:

* Filter for and browse your LessWrong predictions on Elicit (you'll be able to see them by filtering for 'My predictions')

* See your calibration for LessWrong predictions you've made that have resolved

* Add notes to your LessWrong predictions on Elicit

* Predict on LessWrong questions in the Elicit app

If you want us to link your accounts, send me an email (amanda@ought.org) with your LessWrong username and your Elicit account email!

This is awesome, congrats!

I predict that having to go to elicit.org/binary and paste the URL here will be a major barrier to usage, and that if there were a button/prompt in the LW text editor it'd significantly reduce that barrier. People have to a) remember that this is an option available to them, b) remember or be able to look up the URL they need to go to (probably by visiting this LW post), and c) be motivated enough to continue. That said, I also think it makes sense to have it work the way it currently does as a version one.

Also, I'm curious to know how difficult it is to get this integrated on other websites?

jacobjacob once again seems too pessimistic, posterior is very heavily that when habryka makes a 60% yes prediction for a decision he has (partial) control over about a functionality which the community has glommed onto thus far, the community is also justified in expressing ~60% belief that the feature ships. :)

Also, we aren't selecting from "most possible features"!

(That being said, I think this integration is awesome and kudos to everyone. Just keeping my priors sensible :)

I do not endorse this as a way to end parentheticals! Grrr!

This looks so good! Great work by both Ought and the LW mods.

Perhaps this can also gauge approval (from 0% (I hate it) to 100% (I love it)), or even function as a poll (first decile: research agenda 1 seems most promising; second decile: research agenda 2...)... or Ought we not do things like that?

I liked this post a lot. In general, I think that the rationalist project should focus a lot more on "doing things" than on writing things. Producing tools like this is a great example of "doing things". Other examples include starting meetups and group houses.

So, I liked this post a) for being an example of "doing things", but also b) for being what I consider to be a good example of "doing things". Consider that quote from Paul Graham about "live in the future and build what's missing". To me, this has gotta be a tool that exists in the future, and I appreciate the effort to make it happen.

Unfortunately, as I write this on 12/15/21, https://elicit.org/binary is down. That makes me sad. It doesn't mean the people who worked on it did a bad job though. The analogy of a phase change in chemistry comes to mind.

If you are trying to melt an ice cube and you move the temperature from 10℉ to 31℉, you were really close, but you ultimately came up empty handed. But you can't just look at the fact that the ice cube is still solid and judge progress that way. I say that you need to look more closely at the change in temperature. I'm not sure how much movement in temperature happened here, b...

How does this differ from PredictionBook besides being a much more pleasing interface and actually used for reasonable things (and also the nice embedding)?

Oh, I guess I just explained how.

Really nice site, I like it.

I notice myself checking back on this post because I want to submit more predictions. I think it'd be cool if there was a feed of predictions similar to the Recent Discussion section on the LW home page.

I'm curious what this means for Ought. Is Ought planning on doing anything with the data, and if so, what are the current speculations about that? How will Elicit evolve if the primary user base is LessWrong?

I looked at some of the stuff about Elicit on the Ought website, but I don't see how LessWrong embeds obviously help develop this vision.

(To be clear, I'm not complaining -- if Elicit integration is good for LW with no direct benefit for Ought that's not a problem! But I'm guessing Ought was motivated to work with LW on this due to some perceived benefit.)

Lots of uncertainty but a few ways this can connect to the long-term vision laid out in the blog post:

- We want to be useful for making forecasts broadly. If people want to make predictions on LW, we want to support that. We specifically want some people to make lots of predictions so that other people can reuse the predictions we house to answer new questions. The LW integration generates lots of predictions and funnels them into Elicit. It can also teach us how to make predicting easier in ways that might generalize beyond LW.

- It's unclear how exactly the LW community will use this integration, but if they use it to decompose arguments or operationalize complex concepts, we can start to associate reasoning or argumentative context with predictions. It would be very cool if, given some paragraph of a LW post, we could predict what forecast should be embedded next, or how a certain claim should be operationalized into a prediction. "Continuing the takeoffs debate" and "Non-Obstruction: A Simple Concept Motivating Corrigibility" start to point at this.

- There are versions of this integration that could involve richer commenting in the LW editor.

- Mostly it was a quick experiment that both teams were pretty excited about :)

Cool feature!

Is there any info on implementing Elicit embeds on other sites? (Like, say, GreaterWrong? :) I looked on elicit.org and didn’t find anything.

EDIT: Same question re: Metaculus embeds…

This feature seems to be making the page wider and allowing horizontal scrolling on my mobile (iPhone), which degrades the post reading experience. I would prefer if the interface got shrunk down to fit the phone’s width.

Very cool, looking forward to using this!

How does this work with the Alignment Forum? It would be amazing if AFers' predictions were tracked on AF, and all LWers' predictions were tracked in the LW mirror.

Calling a feature that's about getting a numeric value between 1% and 99% a "prediction" suggests it shouldn't be used for asking questions that aren't predictions. Is that a conscious choice?

Is there a way to see all the users who predicted within a single "bucket" using the LW UI? Right now when I hover over a bucket, it will show all users if the number of users is small enough, but it will show a small number of users followed by "..." if the number of users is too large. I'd like to be able to see all the users. (I know I can find the corresponding prediction on the Elicit website, but this is cumbersome.)

Great feature! By default, I'm not (yet) into forecasting. But I can see the point of this even just for feedback. I'll definitely try to integrate it in my posts!

It looks like people can change their predictions after they initially submit them. Is this history recorded somewhere, or just the current distribution?

Is there an option to have people "lock in" their answer? (Maybe they can still edit/delete for a short time after they submit or before a cutoff date/time)

Is there a way to see in one place all the predictions I've submitted an answer to?

Is it possible to hide the values of other predictors? I'm worried that seeing the values that others predict might influence future predictions in a way that's not helpful for feedback or accurate group predictions. (Especially since names are shown and humans tend to respect others' opinions.)

Is it possible to have answers given in dates on https://forecast.elicit.org/binary, like it is for https://forecast.elicit.org/questions/LX1mQAQOO?

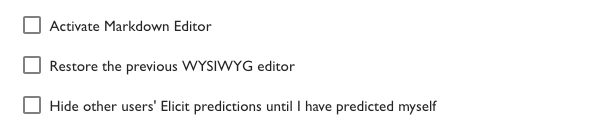

Note that for embedding Elicit predictions to work you must have the "Activate Markdown Editor" setting **turned off** in your [account settings](https://www.lesswrong.com/account).

I'm having trouble embedding forecasts. Does anyone know what I am doing wrong?

Elicit Prediction (https://forecast.elicit.org/binary/questions/el3utYd8Z)

Ought and LessWrong are excited to launch an embedded interactive prediction feature. You can now embed binary questions into LessWrong posts and comments. Hover over the widget to see other people’s predictions, and click to add your own.

Try it out

How to use this

Create a question

Troubleshooting: if the prediction box fails to appear and the link just shows up as text, go to your LW Settings, uncheck “Activate Markdown Editor”, and try again.

Make a prediction

Link your accounts

Motivation

We hope embedded predictions can prompt readers and authors to:

By working with LessWrong on this, Ought hopes to make forecasting easier and more prevalent. As we learn more about how people think about the future, we can use Elicit to automate larger parts of the workflow and thought process until we end up with end-to-end automated reasoning that people endorse. Check out our blog post to see demos and more context.

Some examples of how to use this