This post is a not-so-secret analogy for the AI alignment problem. Via a fictional dialog, Eliezer explores and counters common objections to the Rocket Alignment Problem as approached by the Mathematics of Intentional Rocketry Institute.

MIRI researchers will tell you they're worried that "right now, nobody can tell you how to point your rocket’s nose such that it goes to the moon, nor indeed any prespecified celestial destination."


Recent Discussion

If we achieve AGI-level performance using an LLM-like approach, the training hardware will be capable of running millions of concurrent instances of the model.
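The claim above can be made concrete with a back-of-the-envelope sketch. It rests on assumptions that are not stated in the post: the common rule of thumb that training a dense transformer costs roughly 6 FLOPs per parameter per training token, that generating one token costs roughly 2 FLOPs per parameter, and that the hardware reaches the same utilization for inference as for training. The function names and all input values are hypothetical and chosen only for illustration.

```python
def training_throughput_flops(n_params: float, train_tokens: float,
                              train_seconds: float) -> float:
    """Sustained FLOP/s the training cluster must have delivered.

    Assumes ~6 FLOPs per parameter per training token (rule of thumb).
    """
    return 6.0 * n_params * train_tokens / train_seconds


def inference_cost_flops(n_params: float, tokens_per_second: float) -> float:
    """FLOP/s needed to run one instance at a given generation speed.

    Assumes ~2 FLOPs per parameter per generated token (rule of thumb).
    """
    return 2.0 * n_params * tokens_per_second


def concurrent_instances(n_params: float, train_tokens: float,
                         train_seconds: float,
                         tokens_per_second: float) -> float:
    """Instances the training hardware could run at once.

    The parameter count cancels, leaving 3 * tokens / (seconds * speed).
    """
    return (training_throughput_flops(n_params, train_tokens, train_seconds)
            / inference_cost_flops(n_params, tokens_per_second))


if __name__ == "__main__":
    # Hypothetical inputs: 1e11 parameters, 1e13 training tokens,
    # 1e7 seconds of training (about 116 days), 10 tokens/s per instance.
    print(concurrent_instances(1e11, 1e13, 1e7, 10.0))
```

Under these assumptions the estimate depends only on the number of training tokens, the training duration, and the per-instance generation speed. It ignores memory bandwidth, batching, and context-length costs, so it is an order-of-magnitude guide at best.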

Definitions

Although there is some debate about the definition of compute overhang, I believe that the AI Impacts definition matches the original use, and I prefer it: "enough computing hardware to run many powerful AI systems already exists by the time the software to run such systems is developed". A large compute overhang leads to additional risk due to faster takeoff.

I use the types of superintelligence defined in Bostrom's Superintelligence book (summary here).

I use the definition of AGI in this Metaculus question. The adversarial Turing test portion of the definition is not very relevant to this post.

Thesis

Due to practical reasons, the compute requirements for training LLMs...

AGIs derived from the same model are likely to collaborate more effectively than humans because their weights are identical. Any fine-tune can be applied to all members, and text produced by one can be understood by all members.

I think this only holds if fine tunes are composable, which as far as I can tell they aren't (fine tuning on one task subtly degrades performance on a bunch of other tasks, which isn't a big deal if you fine tune a little for performance on a few tasks but does mean you probably can't take a million independently-fine-tuned mode...

This is an entry in the 'Dungeons & Data Science' series, a set of puzzles where players are given a dataset to analyze and an objective to pursue using information from that dataset.

STORY (skippable)

You have the excellent fortune to live under the governance of The People's Glorious Free Democratic Republic of Earth, giving you a Glorious life of Freedom and Democracy.

Sadly, your cherished values of Democracy and Freedom are under attack by...THE ALIEN MENACE!

Faced with the desperate need to defend Freedom and Democracy from The Alien Menace, The People's Glorious Free Democratic Republic of Earth has been forced to redirect most of its resources into the Glorious Free People's Democratic War...

In the late 19th century, two researchers meet to discuss their differing views on the existential risk posed by future Uncontrollable Super-Powerful Explosives.

- Catastrophist: I predict that one day, not too far in the future, we will find a way to unlock a qualitatively new kind of explosive power. This explosive will represent a fundamental break with what has come before. It will be so much more powerful than any other explosive that whoever gets to this technology first might be in a position to gain a decisive strategic advantage (DSA) over any opposition. Also, the governance and military strategies that we were using to prevent wars or win them will be fundamentally unable to control this new technology, so we'll have to reinvent everything on the fly or die in

In the late 1940s and early 1950s, nuclear weapons did not provide an overwhelming advantage against conventional forces. Being able to drop dozens of ~kiloton-range fission bombs on Eastern European battlefields would have been devastating, but not enough by itself to win a war. Only when you got to hundreds of silo-launched ICBMs with hydrogen bombs could you have gotten a true decisive strategic advantage.

Epistemic Status: Musing and speculation, but I think there's a real thing here.

I.

When I was a kid, a friend of mine had a tree fort. If you've never seen such a fort, imagine a series of wooden boards secured to a tree, creating a platform about fifteen feet off the ground where you can sit or stand and walk around the tree. This one had a rope ladder we used to get up and down, a length of knotted rope that was tied to the tree at the top and dangled over the edge so that it reached the ground.

Once you were up in the fort, you could pull the ladder up behind you. It was much, much harder to get into the fort without the ladder....

The April 2024 Meetup will be April 27th at Bold Monk at 2:00 PM

We return to Bold Monk brewing for a vigorous discussion of rationalism and whatever else we deem fit for discussion – hopefully including actual discussions of the sequences and Hamming Circles/Group Debugging.

Location:

Bold Monk Brewing

1737 Ellsworth Industrial Blvd NW

Suite D-1

Atlanta, GA 30318, USA

No Book club this month!

This is also the Meetups Everywhere meetup that will be advertised on the blog, so we should have a large turnout!

We will be outside out front (in the breezeway) – this is subject to change, but we will be somewhere in Bold Monk. If you do not see us in the front of the restaurant, please check upstairs and out back – look for the yellow table sign. We will have to play the weather by ear.

Remember – bouncing around in conversations is a rationalist norm!

Great!

Produced as part of the SERI ML Alignment Theory Scholars Program - Summer 2023 Cohort, under the mentorship of Evan Hubinger.

I generate an activation steering vector using Anthropic's sycophancy dataset and then find that this can be used to increase or reduce performance on TruthfulQA, indicating a common direction between sycophancy on questions of opinion and untruthfulness on questions relating to common misconceptions. I think this could be a promising research direction to understand dishonesty in language models better.
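As a rough illustration of what generating and applying a steering vector can look like, here is a minimal sketch. It assumes the common mean-difference construction: average the activations recorded at one layer for sycophantic examples, subtract the average for non-sycophantic examples, and add a scaled copy of the result to an activation at inference time. The excerpt does not confirm that this is the exact method used, and the function names and toy activations below are hypothetical.

```python
from typing import List, Sequence

Vector = List[float]


def mean_vector(rows: Sequence[Sequence[float]]) -> Vector:
    """Element-wise mean of equally sized activation vectors."""
    count = len(rows)
    dim = len(rows[0])
    return [sum(row[i] for row in rows) / count for i in range(dim)]


def steering_vector(positive: Sequence[Sequence[float]],
                    negative: Sequence[Sequence[float]]) -> Vector:
    """Mean activation on positive examples minus mean on negative ones."""
    pos_mean = mean_vector(positive)
    neg_mean = mean_vector(negative)
    return [p - n for p, n in zip(pos_mean, neg_mean)]


def apply_steering(activation: Sequence[float], vector: Sequence[float],
                   multiplier: float) -> Vector:
    """Add the scaled steering vector to one activation vector.

    A positive multiplier pushes toward the positive examples,
    a negative multiplier pushes away from them.
    """
    return [a + multiplier * v for a, v in zip(activation, vector)]


if __name__ == "__main__":
    # Toy 2-dimensional activations, hypothetical values only.
    sycophantic = [[1.0, 2.0], [3.0, 4.0]]
    non_sycophantic = [[0.0, 1.0], [2.0, 1.0]]
    vec = steering_vector(sycophantic, non_sycophantic)
    print(apply_steering([0.0, 0.0], vec, -2.0))
```

In a real model the activations would come from a chosen layer of the network, and the multiplier would be tuned empirically; both choices are outside what this sketch covers.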

What is sycophancy?

Sycophancy in LLMs refers to a model telling you what it thinks you want to hear or would approve of, instead of what it internally represents as the truth. Sycophancy is a common problem in LLMs trained on human-labeled data because human-provided training signals...

I am contrasting generating an output by:

- Modeling how a human would respond (“human modeling in output generation”)

- Modeling what the ground-truth answer is

E.g., for common misconceptions, most humans might hold a certain misconception (such as that South America is west of Florida), but we want the LLM to realize that we want it to say how things actually are (given that it likely does represent this fact somewhere).

This is a Concept Dependency Post. It may not be worth reading on its own, out of context. See the backlinks at the bottom to see which posts use this concept.

See the backlinks at the bottom of the post. Every post starting with [Concept Dependency] is a concept dependency post that describes a concept this post is using.

Problem: Often when writing I come up with general concepts that make sense in isolation. Often I want to reuse these concepts without having to reexplain them.

A Concept Dependency Post explains a single concept, usually with little or no context. The relevant context is expected to come from another post that links to the concept dependency post.

Concept Dependency Posts can be very short, much shorter than a regular post. They might not be worth reading on their own....

Adopted.

Nevertheless lots of people were hassled. That has real costs, both to them and to you.

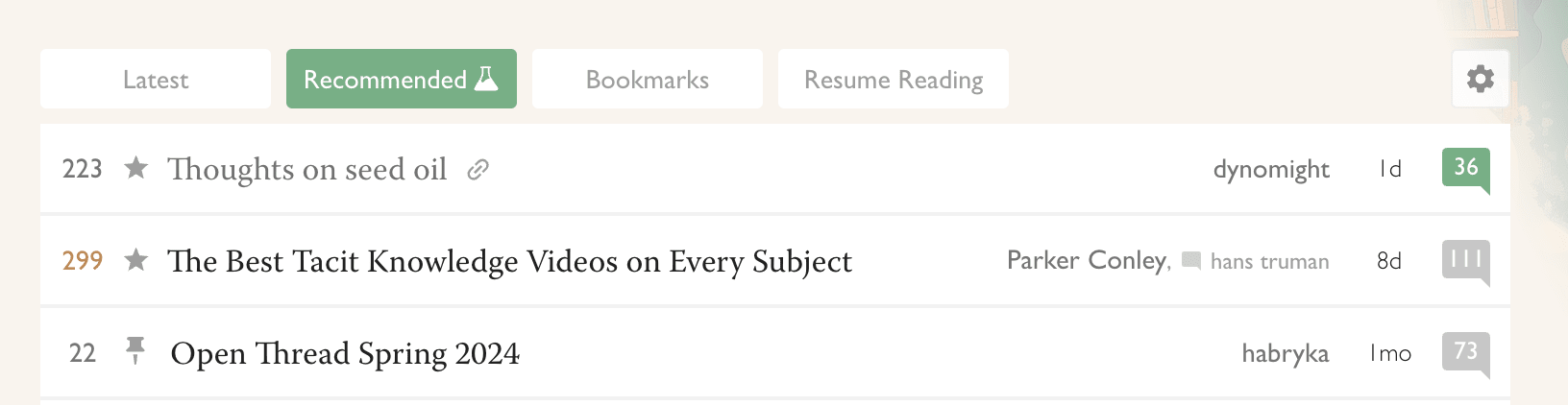

For the last month, @RobertM and I have been exploring the possible use of recommender systems on LessWrong. Today we launched our first site-wide experiment in that direction.

(In the course of our efforts, we also hit upon a frontpage refactor that we reckon is pretty good: tabs instead of a clutter of different sections. For now, only for logged-in users. Logged-out users see the "Latest" tab, which is the same-as-usual list of posts.)

Why algorithmic recommendations?

A core value of LessWrong is to be timeless and not news-driven. However, the central algorithm by which attention allocation happens on the site is the Hacker News algorithm[1], which basically only shows you things that were posted recently, and creates a strong incentive for discussion to always be...

drat, I was hoping that one would work. oh well. yes, I use ublock, as should everyone. Have you considered simply not having analytics at all :P I feel like it would be nice to do the thing that everyone ought to do anyway since you're in charge. If I was running a website I'd simply not use analytics.

back to the topic at hand, I think you should just make a vector embedding of all posts and show a HuMAP layout of it on the homepage. that would be fun and not require sending data anywhere. you could show the topic islands and stuff.