some lessons from ml research:

- any shocking or surprising result in your own experiment is 80% likely to be a bug until proven otherwise. your first thought should always be to comb for bugs.

- only after you have ruled out bugs do you get to actually think about how to fit your theory to the data, and even then, there might still be a hidden bug.

- most papers are terrible and don't replicate.

- most techniques that sound intuitively plausible don't work.

- most techniques only look good if you don't pick a strong enough baseline.

- an actually good idea can take many tries before it works.

- once you have good research intuitions, the most productive state to be in is to literally not think about what will go into the paper and just do experiments that satisfy your curiosity and convince yourself that the thing is true. once you have that, running the final sweeps is really easy

- most people have no intuition whatsoever about their hardware and so will write code that is horribly inefficient. even learning a little bit about hardware fundamentals so you don't do anything obviously dumb is super valuable

- in a long and complex enough project, you will almost certainly have a bug that invalidates weeks of work

I agree and this is why research grant proposals often feel very fake to me. I generally just write up my current best idea / plan for what research to do, but I don't expect it to actually pan out that way and it would be silly to try to stick rigidly to a plan.

i recently ran into a vegan advocate tabling in a public space, and spoke briefly to them for the explicit purpose of better understanding what it feels like to be the target of advocacy on something i feel moderately sympathetic towards but not fully bought in on. (i find this kind of thing very valuable for noticing flaws in myself and improving; it's much harder to be perceptive of one's own actions otherwise). the part where i am genuinely quite plausibly persuadable of his position in theory is important; i think if i had talked to e.g. flat earthers one might say my reaction is just because i'd already decided not to be persuaded. several interesting things i noticed (none of which should be surprising or novel, especially for someone less autistic than me, but as they say, intellectually knowing things is not the same as actual experience):

- this guy certainly knew more about e.g. health impacts of veganism than i did, and i would not have been able to hold my own in an actual debate.

- in particular, it's really easy for actually-good-in-practice heuristics to come out as logical fallacies, especially when arguing with someone much more familiar with the object level details th

I claim that even if the openai contract is not meaningfully weaker safety wise, it is still bad for openai to publicly signal solidarity with ant but then sign with DoW.

suppose hypothetically the only difference between the openai and anthropic contracts is that the DoW wanted a snickers bar, and anthropic didn't want to give DoW the snickers bar. even then, it would be a huge dick move for openai to publicly signal solidarity, and then sign with DoW to give them the snickers bar.

OAI genuinely outplayed Anthropic here. The critical success world for OAI would be if OAI gets good PR from "solidarity", replaces Ant under the ~same terms, and there is enough uncertainty of Anthropic being a supply chain risk that eg Amazon stops providing them compute, basically killing the company.

Most of this is still on the table, because Anthropic was too concerned about appearing principled and was exploited by DoW and Altman.

I have a little stored thought which sometimes triggers, and it reads:

"If you find yourself being forced to choose between two or more extremely bad options that involve burning your values, your resources, or your life, the truth is that you lost around three moves ago and are living out the equivalent of a forced mate in chess. You've already lost, so stop playing and find a better game to spend time on if at all possible."

theory: a huge part of having a good social life is just taking social bids whenever they become available. examples of social bids both large and small include: deciding whether to join your friends on a roadtrip; getting to know someone you just met; getting to better know someone you bump into occasionally but usually never talk to; standing in line, seeing something amusing, and having the option to point this out to another stranger in line; saying something funny in a group conversation; following up over text with someone after meeting them; flirting; cold emailing someone on the internet; catching up with a friend.

there are a variety of reasons why we might end up not taking social bids. if you don't have the social ability to notice opportunities to take bids, you might miss bids that you could take. if you force yourself to take bids without the requisite social ability, and end up taking bids which you incorrectly believe to exist, you might act in ways that people find weird, and burn potential connections, or intrude on people. if you are really tired or low-bandwidth or depressed or stressed, you will not want to take bids, because taking bids requires quite a lot of ...

it's surprising just how much of cutting edge research (at least in ML) is dealing with really annoying and stupid bottlenecks. pesky details that seem like they shouldn't need attention. tools that in a good and just world would simply not break all the time.

i used to assume this was merely because i was inexperienced, and that surely eventually you learn to fix all the stupid problems, and then afterwards you can just spend all your time doing actual real research without constantly needing to context switch to fix stupid things.

however, i've started to think that as long as you're pushing yourself to do novel, cutting edge research (as opposed to carving out a niche and churning out formulaic papers), you will always spend most of your time fixing random stupid things. as you get more experienced, you get bigger things done faster, but the amount of stupidity is conserved. as they say in running: it doesn't get easier, you just get faster.

as a beginner, you might spend a large part of your research time trying to install CUDA or fighting with python threading. as an experienced researcher, you might spend that time instead diving deep into some complicated distributed trai...

Not only is this true in AI research, it’s true in all science and engineering research. You’re always up against the edge of technology, or it’s not research. And at the edge, you have to use lots of stuff just behind the edge. And one characteristic of stuff just behind the edge is that it doesn’t work without fiddling. And you have to build lots of tools that have little original content, but are needed to manipulate the thing you’re trying to build.

After decades of experience, I would say: any sensible researcher spends a substantial fraction of time trying to get stuff to work, or building prerequisites.

This is for engineering and science research. Maybe you’re doing mathematical or philosophical research; I don’t know what those are like.

a corollary is i think even once AI can automate the "google for the error and whack it until it works" loop, this is probably still quite far off from being able to fully automate frontier ML research, though it certainly will make research more pleasant

I think there are several reasons this division of labor is very minimal, at least in some places.

- You need way more of the ML engineering / fixing stuff skill than ML research. Like, vastly more. There are still a very small handful of people who specialize full time in thinking about research, but they are very few and often very senior. This is partly an artifact of modern ML putting way more emphasis on scale than academia.

- Communicating things between people is hard. It's actually really hard to convey all the context needed to do a task. If someone is good enough to just be told what to do without too much hassle, they're likely good enough to mostly figure out what to work on themselves.

- Convincing people to be excited about your idea is even harder. Everyone has their own pet idea, and you are the first engineer on any idea you have. If you're not a good engineer, you have a bit of a catch-22: you need promising results to get good engineers excited, but you need engineers to get results. I've heard of even very senior researchers finding it hard to get people to work on their ideas, so they just do it themselves.

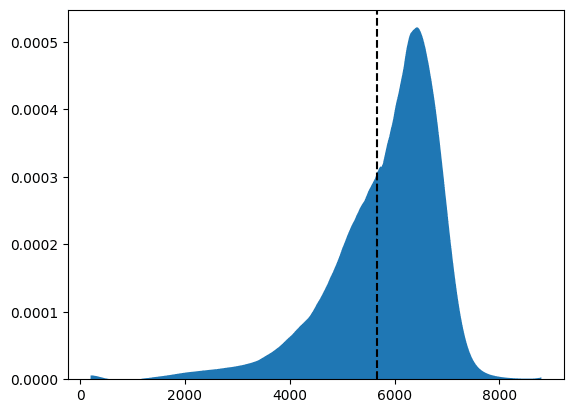

running the agi survey really reminded me just how brutal statistical significance is, and how unreliable anecdotes are. even setting aside sampling bias of anecdotes, the sheer sample size you need to answer a question like "do more people this year know what agi is than last year" is kind of depressing - you need like 400 samples for each year just to be 80% sure you'd notice a 10 percentage point increase even if it did exist, and even if there was no real effect you'd still think there was one 5% of the time. this makes me a lot more bearish on vibes in general.
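a rough sketch of where a number like 400 comes from, using the standard normal-approximation sample size formula for comparing two proportions. the 50% and 60% recognition rates are assumptions picked for illustration (the real baseline isn't stated here), and `samples_per_group` is a hypothetical helper name:

```python
from math import ceil
from statistics import NormalDist


def samples_per_group(p_before, p_after, alpha=0.05, power=0.80):
    """Approximate n per group for a two-sided two-proportion z-test."""
    # Critical value for the false positive rate (about 1.96 for alpha=0.05).
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    # Quantile for the desired chance of detecting a real effect (about 0.84).
    z_power = NormalDist().inv_cdf(power)
    # Sum of the two binomial variances, one per survey year.
    variance = p_before * (1 - p_before) + p_after * (1 - p_after)
    effect = p_after - p_before
    return ceil((z_alpha + z_power) ** 2 * variance / effect ** 2)


# Assumed rates: 50% last year, 60% this year (a 10 point increase).
n = samples_per_group(0.50, 0.60)
print(n)
```

with these assumed rates the answer lands a bit under 400 per year. halving the effect to 5 points roughly quadruples it, because the effect size enters squared.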

thank you for this post. "bearish on vibes" is a great phrase. i am constantly hung up on the fact that it's not really possible to "know what normal people are like", "know what people are like generally", "know what the world is actually like", without significant amounts of effort.

i think this background fact taints like... most discussion of social and ethical issues.

like, suppose i anecdotally noticed a few people be visibly confused when i said the phrase AGI in normal conversation last year, and then this year i noticed that many fewer people were visibly confused by AGI. then, this would tell me almost nothing about whether name-recognition of AGI increased or decreased; at n=10, it is nearly impossible to say anything whatsoever.

in research, if you settle into a particular niche you can churn out papers much faster, because you can develop a very streamlined process for that particular kind of paper. you have the advantage of already having working baseline code, context on the field, and a knowledge of the easiest way to get enough results to have an acceptable paper.

while these efficiency benefits of staying in a certain niche are certainly real, I think a lot of people end up in this position because of academic incentives - if your career depends on publishing lots of papers, then a recipe to get lots of easy papers with low risk is great. it's also great for the careers of your students, because if you hand down your streamlined process, then they can get a phd faster and more reliably.

however, I claim that this also reduces scientific value, and especially the probability of a really big breakthrough. big scientific advances require people to do risky bets that might not work out, and often the work doesn't look quite like anything anyone has done before.

as you get closer to the frontier of things that have ever been done, the road gets tougher and tougher. you end up spending more time building basic infra...

the modern world has many flaws, but I'm still deeply grateful for the modern era of unprecedented peace, prosperity, and freedom in the developed world. 99% of people reading these words have never had to worry about dying in a cholera epidemic, or malaria or smallpox or the plague, or childbirth, or in war, or from a famine, or due to a political purge. this is not true for other times in history, or other places in the world today.

(extremely unoriginal thought, but still important to acknowledge periodically because it's easy to take for granted. especially because it's much more common to complain about ways the world is broken than to acknowledge what has improved over time.)

I think it would be really bad for humanity to rush to build superintelligence before we solve the difficult problem of how to make it safe. But also I think it would be a horrible tragedy if humanity never ever built superintelligence. I hope we figure out how to thread this needle with wisdom.

I agree with this fwiw. Currently I think we are in way way more danger of rushing to build it too fast than of never building it at all, but if e.g. all the nations of the world had agreed to ban it, and in fact were banning AI research more generally, and the ban had held stable for decades and basically strangled the field, I'd be advocating for judicious relaxation of the regulations (same thing I advocate for nuclear power basically).

I am not really clear that I should be worried on the scale of decades? If we're doing a calculation of expected future years of a flourishing technologically mature civilization, slowing down for 1,000 years here in order to increase the chance of success by like 1 percentage point is totally worth it in expectation.

Given this, it seems plausible to me that one should spend 200 years trying to improve civilizational wisdom and decision-making rather than attempt to specifically just unlock regulation on AI (of course the specifics here are cruxy).

I agree that 200 years would be worth it if we actually thought that it would work. My concern is that it's not clear civilization would get better/more sane/etc. over the next century vs. worse. And relatedly, every decade that goes by, we eat another percentage point or three of x-risk from miscellaneous other sources (nuclear war, pandemics, etc.) which basically impose a time-discount factor on our calculations large enough to make a 200 year pause seem really dangerous and bad to me.
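To make the compounding concrete, here is a minimal sketch of how a per-decade background risk of one to three percentage points accumulates over a long pause. It assumes the risk is constant and independent from decade to decade, which is a simplification of mine and not something claimed above. `survival_probability` is a hypothetical helper name.

```python
def survival_probability(risk_per_decade, years):
    """Chance of avoiding catastrophe, assuming a constant independent risk each decade."""
    decades = years / 10
    return (1 - risk_per_decade) ** decades


# Per-decade risks from the text (1 to 3 percentage points) and the pause lengths discussed.
for risk in (0.01, 0.03):
    for years in (200, 1000):
        p = survival_probability(risk, years)
        print(f"risk {risk:.0%}/decade over {years} years: survive with p = {p:.2f}")
```

Under these assumptions a 200 year pause costs somewhere between roughly a fifth and nearly half of the probability mass before any gain from the pause is counted. That is the sense in which background risk acts like a discount factor.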

while I agree for smaller numbers like a few decades, I don't think I agree with a 1000 year pause.

I think (a) it's perfectly reasonable for people to be selfish and care about superintelligence happening during their lifetime (forget future people and discount factors thereof - almost every single person alive today cares ooms more about themselves than about some random person on the other side of the planet), (b) it's easy for "delay forever" people to basically pascal's mug you this way, as in nuclear power, and (c) it's unclear that humanity becomes monotonically more wise over time (as an unrealistic example, consider a world where we successfully create an international treaty to ensure ASI is safe, and then for some reason the entire modern world order collapses and the only actors left are random post-collapse states racing to build ASI. then it would have been better to build ASI in a functional pre-collapse world order than to delay. one could reasonably (though i personally don't) believe that the current world order is likely to fail in the coming decades and ASI is better built now than in the ensuing chaos)

i think it’s plausible humans/humanity should be carefully becoming ever more intelligent forever and not ever create any highly non-[human-descended] top thinker[1]

[1] i also think it's confused to speak of superintelligence as some definite thing (like, to say "create superintelligence", as opposed to saying "create a superintelligence"), and probably confused to speak of safe fooming as a problem that could be "solved", as opposed to one needing to indefinitely continue to be thoughtful about how one should foom

I decided to conduct an experiment at neurips this year: I randomly surveyed people walking around in the conference hall to ask whether they had heard of AGI

I found that out of 38 respondents, only 24 could tell me what AGI stands for (63%)

we live in a bubble

the specific thing i said to people was something like:

excuse me, can i ask you a question to help settle a bet? do you know what AGI stands for? [if they say yes] what does it stand for? [...] cool thanks for your time

i was careful not to say "what does AGI mean".

most people who didn't know just said "no" and didn't try to guess. a few said something like "artificial generative intelligence". one said "amazon general intelligence" (??). the people who answered incorrectly were obviously guessing / didn't seem very confident in the answer.

if they seemed confused by the question, i would often repeat and say something like "the acronym AGI" or something.

several people said yes but then started walking away the moment i asked what it stood for. this was kind of confusing and i didn't count those people.
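for a sense of how much uncertainty sits behind the 24 out of 38 figure, here is a minimal sketch of a 95% Wilson score interval. choosing the Wilson interval is my assumption (the survey writeup doesn't use any particular method), and it ignores the sampling bias of who was walking around the hall. `wilson_interval` is a hypothetical helper name:

```python
from math import sqrt
from statistics import NormalDist


def wilson_interval(successes, n, confidence=0.95):
    """Wilson score interval for a binomial proportion."""
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    p_hat = successes / n
    denom = 1 + z ** 2 / n
    # The interval is centered on a value pulled slightly toward 50%.
    center = (p_hat + z ** 2 / (2 * n)) / denom
    half_width = z * sqrt(p_hat * (1 - p_hat) / n + z ** 2 / (4 * n ** 2)) / denom
    return center - half_width, center + half_width


low, high = wilson_interval(24, 38)
print(f"{low:.0%} to {high:.0%}")
```

the interval spans roughly thirty percentage points, so 38 respondents pin down the true rate only loosely.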

when i was new to research, i wouldn't feel motivated to run any experiment that wouldn't make it into the paper. surely it's much more efficient to only run the experiments that people want to see in the paper, right?

now that i'm more experienced, i mostly think of experiments as something i do to convince myself that a claim is correct. once i get to that point, actually getting the final figures for the paper is the easy part. the hard part is finding something unobvious but true. with this mental frame, it feels very reasonable to run 20 experiments for every experiment that makes it into the paper.

random thoughts on analytical and emotional intelligence

one thing that I think the world needs more of is analyses into the nature of the mind by people who are both rigorous/analytically inclined, and also emotionally intelligent/integrated. much writing from the former fails to model large parts of the human mind, and much writing from the latter fails to create models of sufficient clarity and validity.

I think this underlies a lot of my instinctive dislike of humanities work. people who are emotionally perceptive but not rigorous and analytical tend to notice interesting things about the human experience, but then come up with very poor models that set off all of my bullshit sensors that are attuned to rigorous arguments. but I think it should be possible to have humanities work that is not like this.

(for clarity, from here out I will say analytical and emotional to refer to the axes which are independent of each other, and ABNE (analytically but not emotionally intelligent) and EBNA for the converse)

(I also want to clarify that I don't think of analytical as being in opposition to intuition, at least in the context of this post. something something Terence Tao's pos...

random brainstorming ideas for things the ideal sane discourse encouraging social media platform would have:

- have an LM look at the comment you're writing and real time give feedback on things like "are you sure you want to say that? people will interpret that as an attack and become more defensive, so your point will not be heard". addendum: if it notices you're really fuming and flame warring, literally gray out the text box for 2 minutes with a message like "take a deep breath. go for a walk. yelling never changes minds"

- have some threaded chat component bolted on (I have takes on best threading system). big problem is posts are fundamentally too high effort to be a way to think; people want to talk over chat (see success of discord). dialogues were ok but still too high effort and nobody wants to read the transcript. one stupid idea is have an LM look at the transcript and gently nudge people to write things up if the convo is interesting and to have UI affordances to make it low friction (eg a single button that instantly creates a new post and automatically invites everyone from the convo to edit, and auto populates the headers)

- inspired by the court system, the most autisticall

a thing i've noticed rat/autistic people do (including myself): one very easy way to trick our own calibration sensors is to add a bunch of caveats or considerations that make it feel like we've modeled all the uncertainty (or at least, more than other people who haven't). so one thing i see a lot is that people are self-aware that they have limitations, but then over-update on how much this awareness makes them calibrated. one telltale hint that i'm doing this myself is if i catch myself saying something because i want to demo my rigor and prove that i've considered some caveat that one might think i forgot to consider

i've heard others make a similar critique about this as a communication style which can mislead non-rats who are not familiar with the style, but i'm making a different claim here that one can trick oneself.

it seems that one often believes being self aware of a certain limitation is enough to correct for it sufficiently to at least be calibrated about how limited one is. a concrete example: part of being socially incompetent is not just being bad at taking social actions, but being bad at detecting social feedback on those actions. of course, many people are not even...

it's quite plausible (40% if I had to make up a number, but I stress this is completely made up) that someday there will be an AI winter or other slowdown, and the general vibe will snap from "AGI in 3 years" to "AGI in 50 years". when this happens it will become deeply unfashionable to continue believing that AGI is probably happening soonish (10-15 years), in the same way that suggesting that there might be a winter/slowdown is unfashionable today. however, I believe in these timelines roughly because I expect the road to AGI to involve both fast periods and slow bumpy periods. so unless there is some super surprising new evidence, I will probably only update moderately on timelines if/when this winter happens

also a lot of people will suggest that alignment people are discredited because they all believed AGI was 3 years away, because surely that's the only possible thing an alignment person could have believed. I plan on pointing to this and other statements similar in vibe that I've made over the past year or two as direct counter evidence against that

(I do think a lot of people will rightly lose credibility for having very short timelines, but I think this includes a big mix of capabilities and alignment people, and I think they will probably lose more credibility than is justified because the rest of the world will overupdate on the winter)

i find it funny that i know people in all 4 of the following quadrants:

- works on capabilities, and because international coordination seems hopeless, we need to race to build ASI first before the bad guys

- works on capabilities, and because international coordination seems possible, and all national leaders like to preserve the status quo, we need to build ASI before it gets banned

- works on safety, and because international coordination seems hopeless, we need to solve the technical problem before ASI kills everyone

- works on safety, and because international coordination seems possible, we need to focus on regulation and policy before ASI kills everyone

bonus types of guy:

- works on capabilities because if we don't solve ASI soon then the AI hype all comes crashing down and creates a new AI winter

- works on capabilities because ASI is inevitable and it's cool to be part of the trajectory of history

- works on capabilities because there's this really cool idea that they've always dreamed of implementing

- works on capabilities because it's cool being able to say they contributed to something that is used by millions

- works on capabilities because the technical problems are really interesting and fun

- works on capabilities because alignment is a capabilities problem

- works on capabilities because they expect it to be super glorious to discover big breakthroughs in capabilities

Aren't these basically mostly "works on capabilities because of status + power"?

(E.g. if you only care about challenging technical problems, you'll just go do math)

I think most of the people involved like working with the smartest and most competent people alive today, on the hardest problems, in order to build a new general intelligence for the first time since the dawn of humanity, in exchange for massive amounts of money, prestige, fame, and power. This is what I refer to by 'glory'.

people around these parts often take their salary and divide it by their working hours to figure out how much to value their time. but I think this actually doesn't make that much sense (at least for research work), and often leads to bad decision making.

time is extremely non fungible; some time is a lot more valuable than other time. further, the relation of amount of time worked to amount earned/value produced is extremely nonlinear (sharp diminishing returns). a lot of value is produced in short flashes of insight that you can't just get more of by spending more time trying to get insight (but rather require other inputs like life experience/good conversations/mentorship/happiness). resting or having fun can help improve your mental health, which is especially important for positive tail outcomes.

given that the assumptions of fungibility and linearity are extremely violated, I think it makes about as much sense as dividing salary by number of keystrokes or number of slack messages.

concretely, one might forgo doing something fun because it seems like the opportunity cost is very high, but actually diminishing returns means one more hour on the margin is much less valuable than the average implies, and having fun improves productivity in ways not accounted for when just considering the intrinsic value one places on fun.
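a toy sketch of the average vs marginal gap. the square-root value curve is purely an assumption to get some diminishing returns (real productivity curves are unknown and probably lumpier), and the function names are hypothetical:

```python
from math import sqrt


def value_produced(hours):
    """Toy concave value curve: each extra hour adds less than the last."""
    return sqrt(hours)


def average_value_per_hour(hours):
    # What "salary divided by hours worked" effectively measures.
    return value_produced(hours) / hours


def marginal_value_of_next_hour(hours):
    # What you actually give up by not working one more hour.
    return value_produced(hours + 1) - value_produced(hours)


hours = 50
print(f"average per hour:  {average_value_per_hour(hours):.3f}")
print(f"next hour's value: {marginal_value_of_next_hour(hours):.3f}")
```

on this toy curve the marginal hour is worth about half the average hour. the exact ratio depends entirely on the assumed curve, but any concave curve puts the marginal below the average.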

but actually diminishing returns means one more hour on the margin is much less valuable than the average implies

This importantly also goes in the other direction!

One dynamic I have noticed people often don't understand is that in a competitive market (especially in winner-takes-all-like situations) the marginal returns to focusing more on a single thing can be sharply increasing, not only decreasing.

In early-stage startups, having two people work 60 hours is almost always much more valuable than having three people work 40 hours. The costs of growing a team are very large, the costs of coordination go up very quickly, and so if you are at the core of an organization, whether you work 40 hours or 60 hours is the difference between being net-positive vs. being net-negative.

This is importantly quite orthogonal to whether you should rest or have fun or whatever. While there might be at an aggregate level increasing marginal returns to more focus, it is also the case that in such leadership positions, the most important hours are much much more productive than the median hour, and so figuring out ways to get more of the most important hours (which often rely on peak cognitive performance and a non-conflicted motivational system) is even more leveraged than adding the marginal hour (but I think it's important to recognize both effects).

agree it goes in both directions. time when you hold critical context is worth more than time when you don't. it's probably at least sometimes a good strategy to alternate between working much more than sustainable and then recovering.

my main point is this is a very different style of reasoning than what people usually do when they talk about how much their time is worth.

every 4 years, the US has the opportunity to completely pivot its entire policy stance on a dime. this is more politically costly to do if you're a long-lasting autocratic leader, because it is embarrassing to contradict your previous policies. I wonder how much of a competitive advantage this is.

Autarchies, including China, seem more likely to reconfigure their entire economic and social systems overnight than democracies like the US, so this seems false.

I mean, the proximate cause of the 1989 protests was the death of the quite reformist former general secretary Hu Yaobang. His successor as general secretary, Zhao Ziyang, was very sympathetic towards the protesters and wanted to negotiate with them, but then he lost a power struggle against Li Peng and Deng Xiaoping (who was in semi retirement but still held onto control of the military). Immediately afterwards, he was removed as general secretary and martial law was declared, leading to the massacre.

people generally talk about food preservatives in a negative way. certainly, some of them are not great for you. but I want to take a moment to appreciate how wonderful food preservatives (and refrigeration and pasteurization and canning) are as well. it's crazy how fast most normal food goes bad. like a loaf of real old fashioned bread will go stale after a day and then become moldy after a few more days. for almost all of human history, people just sort of lived with this, and if they wanted to make foods last they had to dry them out and/or drown them in salt or vinegar or alcohol. pickles and beef jerky are great, but it would suck if you had to eat them all the time.

one problem with taking ideas seriously is you can get pwned by virulent memes that are very good at hijacking your brain into believing them and propagating them further. they're subtly flawed, but the flaws are extremely difficult to reason through, so being very smart doesn't save you; in fact, it's easy to dig yourself in deeper. many ideologies and religions are like this.

it's unfortunately very hard to tell when this has happened to you. on the one hand, from the inside it just feels like the arguments are obviously very compelling, so you'll notice nothing wrong if it happens to you. on the other hand, if you overcorrect and never take compelling arguments seriously, you become too stodgy and ignore anything novel that you should pay attention to. one idea for how to think about this better: imagine an oracle told you that there exists a magic phrase that you cannot distinguish from a very compelling argument. you don't really know when this magic phrase will pop up in life, if ever. but it might give you a little bit more pause the next time someone makes a really compelling argument for why you should give all your money to X.

I find it anthropologically fascinating how at this point neurips has become mostly a summoning ritual to bring all of the ML researchers to the same city at the same time.

nobody really goes to talks anymore - even the people in the hall are often just staring at their laptops or phones. the vast majority of posters are uninteresting, and the few good ones often have a huge crowd that makes it very difficult to ask the authors questions.

increasingly, the best parts of neurips are the parts outside of neurips proper. the various lunches, dinners, and parties hosted by AI companies and friend groups (and increasingly over the past few years, VCs) are core pillars of the social scene, and are where most of the socializing happens. there are so many that you can basically spend your entire neurips not going to neurips at all. at dinnertime, there are literally dozens of different events going on at the same time.

multiple unofficial workshops, entirely unaffiliated with neurips, will schedule themselves to be in town at the same time; they will often have a way higher density of interesting people and ideas.

if you stand around in the hallways and chat in a group long enough, event...

This is true of approximately every worthwhile conference and convention. In my entire life I've been to exactly one conference where the scheduled programming provided more than 10% of the event's value.

having the right mental narrative and expectation setting when you do something seems extremely important. the exact same object experience can be anywhere from amusing to irritating to deeply traumatic depending on your mental narrative. some examples:

- a minor inconvenience like missing your bus when you're not in a rush can be much more irritating if you're having a bad day and you have the narrative of "everything is going wrong for me today"

- something going wrong during travel can be a catastrophe if you're expecting the perfect vacation but it can even be a fond memory if you're just viewing it as an adventure (and a bonding experience if travelling with others)

- not getting to do something you wanted to do hurts a lot more if you feel like you made a deal with yourself that you'd get to do it in exchange for doing something else you didn't want to do; whereas you might not even really want the thing that much otherwise.

- expecting something to happen soon and having it gradually delayed further and further into the future is a lot more irritating than already expecting something to be delayed a lot.

tbc, the optimal decision is not always the narrative that is maximally happy with e...

when will we have sufficiently conclusive evidence for the long term safety of far uvc that it's reasonable to push for its universal adoption in all public spaces without reservation? the safety issue seems like a much bigger deal than the cost issue for broad adoption; if it works safely, the economic case for installing far uvc in public spaces seems pretty solid - people being sick must be terrible for the economy! and the lamps are only ever going to get cheaper.

in a world where far uvc is near universally deployed, we might be able to banish the common cold or the flu to the past, in the same way that cholera is basically no longer a problem in the developed world. this seems like a pretty big deal and I'd like to know when this glorious future is coming (and whether there's anything I can do to make it come sooner)!

(from eyeballing studies, it sounds like the cost of the cold+flu to the US economy is on the order of $100bn/yr, which passes basic Fermi estimate muster - given a $30tn/yr gdp, a few days per year of lost productivity due to cold/flu is easily hundreds of billions. even at the current price of far uvc, which is a huge overestimate of future tech at volume, the cost of...
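
the fermi estimate above can be sketched in a few lines. this is a rough check, not a real cost model: the 250 working days per year and the 1-3 sick days are assumptions for illustration, and it treats every lost working day as a proportional loss of gdp.

```python
def lost_productivity_usd(gdp_usd_per_year, sick_days_per_year, working_days_per_year=250):
    """Rough GDP-weighted value of working days lost to illness.

    Assumes output is spread evenly over working days (an assumption,
    not a measured figure).
    """
    return gdp_usd_per_year * sick_days_per_year / working_days_per_year


gdp = 30e12  # $30tn/yr, the figure used in the text
for days in (1, 2, 3):  # "a few days per year" (assumed range)
    cost_bn = lost_productivity_usd(gdp, days) / 1e9
    print(f"{days} lost day(s) per year: about ${cost_bn:.0f}bn/yr")
```

under these assumptions each lost working day is worth roughly 0.4% of gdp, so a few days lands in the hundreds of billions, the same order of magnitude as the $100bn/yr figure.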

execution is necessary for success, but direction is what sets apart merely impressive from truly great accomplishments. though being better at execution can make you better at direction, because it enables you to work on directions that others discard as impossible.

random half baked thoughts from a sleep deprived jet lagged mind: my guess is that the few largest principal components of variance of human intelligence are something like:

- a general factor that affects all cognitive abilities uniformly (this is a sum of a bazillion things. they could be something physiological like better cardiovascular function, or more efficient mitochondria or something; or maybe there's some pretty general learning/architecture hyperparameter akin to lr or aspect ratio that simply has better or worse configurations. each small change helps/hurts a little bit). having a better general factor makes you better at pattern recognition and prediction, which is the foundation of all intelligence. whether this is learning a policy or a world model, you need to be able to spot regularities in the world to exploit to have any hope of making good predictions.

- a systematization factor (how much to be inclined towards using the machinery of pattern recognition towards finding and operating using explicit rules about the world, vs using that machinery implicitly and relying on intuition). this is the autist vs normie axis. importantly, it's not like normies are born with

why is ADHD also strongly correlated with systematization? it could just be worse self modelling - ADHD happens when your brain's model of its own priorities and motivations falls out of sync from your brain's actual priorities and motivations. if you're bad at understanding yourself, you will misunderstand your priorities, and also you will not be able to control your priorities, because you won't know what kinds of evidence will really persuade your brain to adopt a specific priority, and your brain will learn that it can't really trust you to assign it priorities to satisfy its motives (burnout).

why do stimulants help ADHD? well, they short circuit the part where your brain figures out what priorities to trust based on whether they achieve your true motives. if your brain has already learned that your self model is bad at picking actions that eventually pay off towards its true motives, it won't put its full effort behind those actions. if you can trick it by making every action feel like it's paying off, you can get it to go along.

honestly unclear whether this is good or bad. on the one hand, if your self model has fallen out of sync, this is pretty necessary to get things done, and could get you out of a bad feedback loop (ADHD is really bad for noticing that your self model has fallen horribly out of sync and acting effectively on it!). some would argue on naturalistic grounds that ideally the true long term solution is to use your brain's machinery the way it was always intended, by deeply understanding and accepting (and possibly modifying) your actual motives/priorities and having them steer your actions. the other option is to permanently circumvent your motivation system, to turn it into a rubber stamp for whatever decrees are handed down from the self model, which, forever unmoored from needing to model the self, is no longer an understanding of the self but rather an aspirational endpoint towards which the self is molded. I genuinely don't know which is better as an end goal.

timelines takes

- i've become more skeptical of rsi over time. here's my current best guess at what happens as we automate ai research.

- for the next several years, ai will provide a bigger and bigger efficiency multiplier to the workflow of a human ai researcher.

- ai assistants will probably not uniformly make researchers faster across the board, but rather make certain kinds of things way faster and other kinds of things only a little bit faster.

- in fact probably it will make some things 100x faster, a lot of things 2x faster, and then be literally useless for a lot of remaining things

- amdahl's law tells us that we will mostly be bottlenecked on the things that don't get sped up a ton. like if the thing that got sped up 100x was only 10% of the original thing, then you don't get more than a 1/(1 - 10%) ≈ 1.11x speedup.

- i think the speedup is a bit more than amdahl's law implies. task X took up 10% of the time because there are diminishing returns to doing more X, and so you'd ideally do exactly the amount of X such that the marginal value of time spent on X is exactly in equilibrium with time spent on anything else. if you suddenly decrease the cost of X substantially, the equilibrium point shifts
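
the amdahl's law arithmetic above, as a minimal sketch. the function names are made up for illustration, and this only covers the fixed-allocation bound, not the equilibrium-shift effect from the last bullet.

```python
def amdahl_speedup(fraction_accelerated, factor):
    """Overall speedup when `fraction_accelerated` of the work gets `factor`x faster."""
    remaining = 1.0 - fraction_accelerated
    return 1.0 / (remaining + fraction_accelerated / factor)


def amdahl_ceiling(fraction_accelerated):
    """Limit of the speedup as the accelerated part becomes infinitely fast."""
    return 1.0 / (1.0 - fraction_accelerated)


# the example from the text: 10% of the work gets 100x faster
print(f"speedup: {amdahl_speedup(0.10, 100):.3f}x")
print(f"ceiling: {amdahl_ceiling(0.10):.3f}x")

# even a 2x speedup on the other 90% does far more for the total
print(f"2x on the other 90%: {amdahl_speedup(0.90, 2):.3f}x")
```

the ceiling depends only on how much of the work is left untouched, which is why the things that are "literally useless" to speed up end up dominating.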

My current best guess median is that we'll see 6 OOMs of effective compute in the first year after full automation of AI R&D if this occurs in ~2029 using a 1e29 training run and compute is scaled up by a factor of 3.5x[1] over the course of this year[2]. This is around 5 years of progress at the current rate[3].

How big of a deal is 6 OOMs? I think it's a pretty big deal; I have a draft post discussing how much an OOM gets you (on top of full automation of AI R&D) that I should put out somewhat soon.

Further, my distribution over this is radically uncertain with a 25th percentile of 2.5 OOMs (2 years of progress) and a 75th percentile of 12 OOMs.

The short breakdown of the key claims is:

- Initial progress will be fast, perhaps ~15x faster algorithmic progress than humans.

- Progress will probably speed up before slowing down due to training smarter AIs that can accelerate progress even faster, and this being faster than returns diminish on software.

- We'll be quite far from the limits of software progress (perhaps median 12 OOMs) at the point when we first achieve full automation.

Here is a somewhat summarized and rough version of the argument (stealing heavily from some of Tom...

libraries abstract away the low level implementation details; you tell them what you want to get done and they make sure it happens. frameworks are the other way around. they abstract away the high level details; as long as you implement the low level details you're responsible for, you can assume the entire system works as intended.

a similar divide exists in human organizations and with managing up vs down. with managing up, you abstract away the details of your work and promise to solve some specific problem. with managing down, you abstract away the mission and promise that if a specific problem is solved, it will make progress towards the mission.

(of course, it's always best when everyone has state on everything. this is one reason why small teams are great. but if you have dozens of people, there is no way for everyone to have all the state, and so you have to do a lot of abstracting.)

when either abstraction leaks, it causes organizational problems -- micromanagement, or loss of trust in leadership.

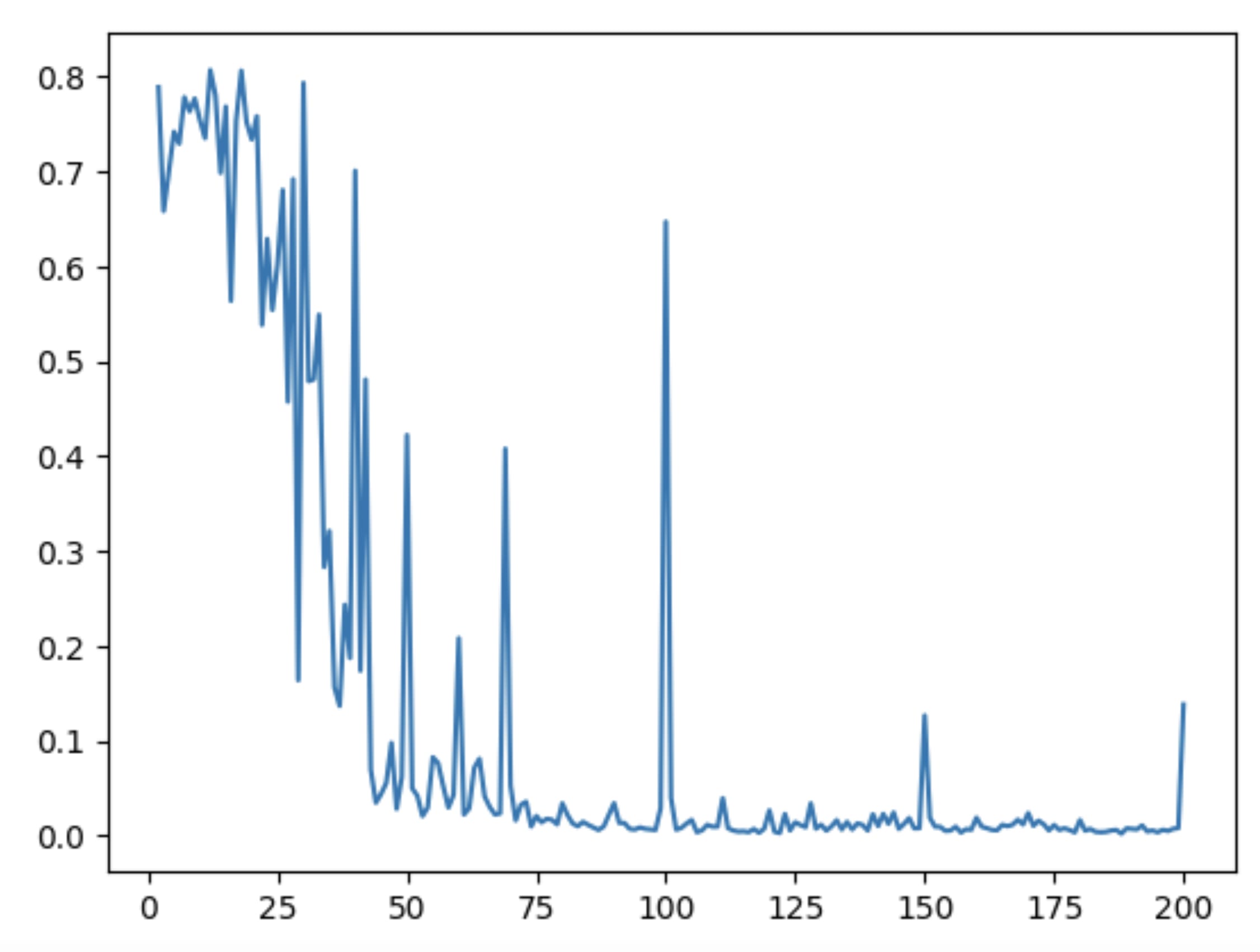

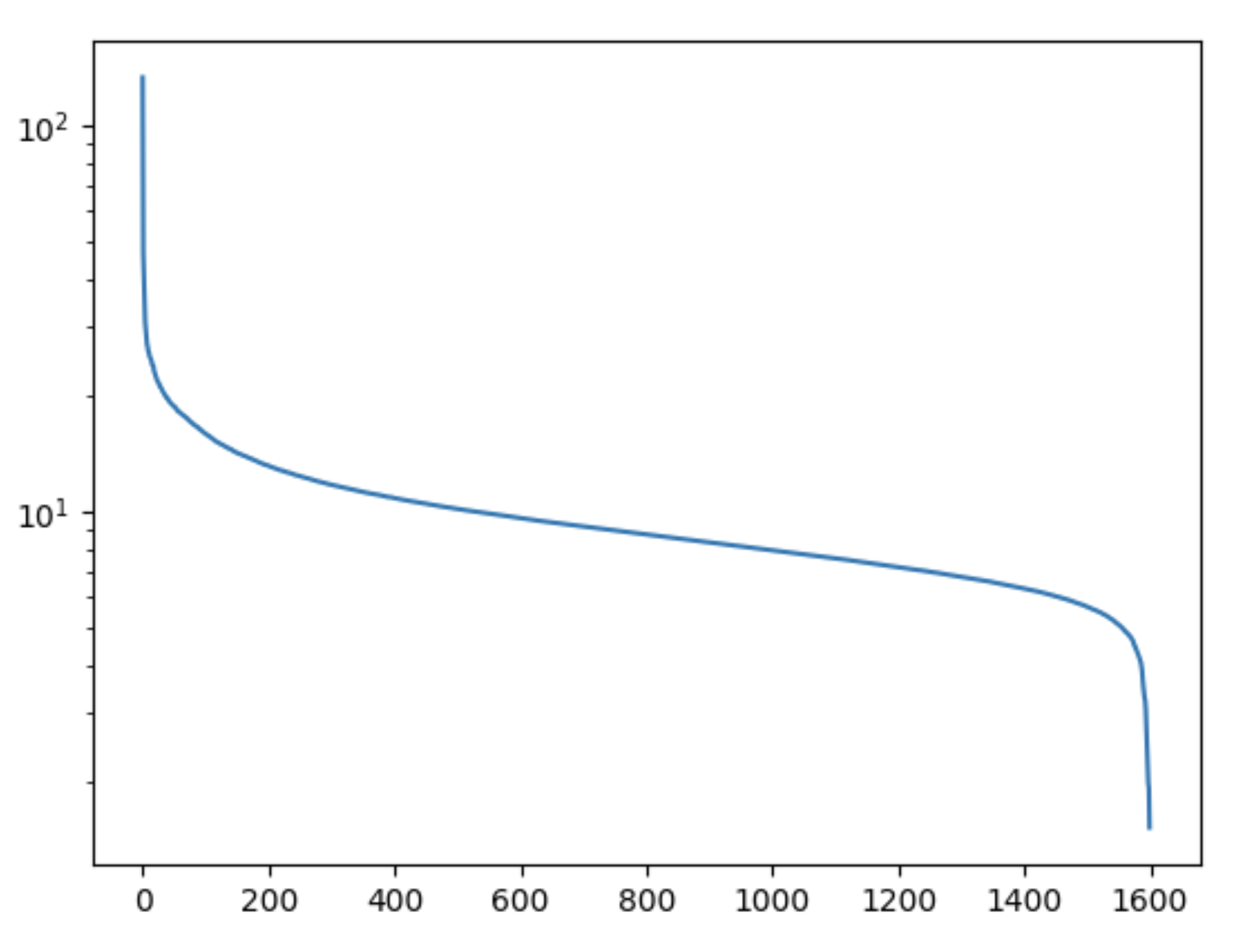

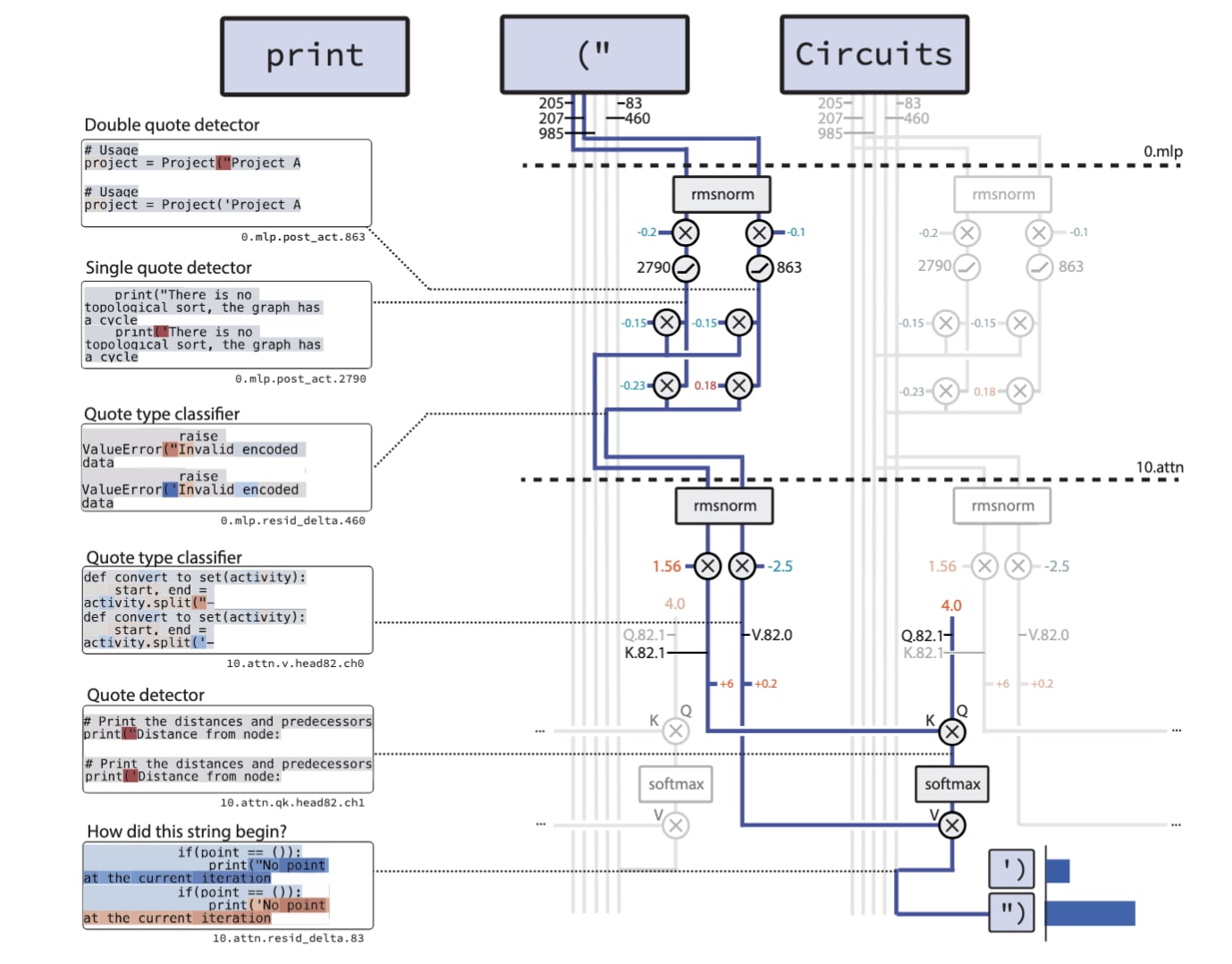

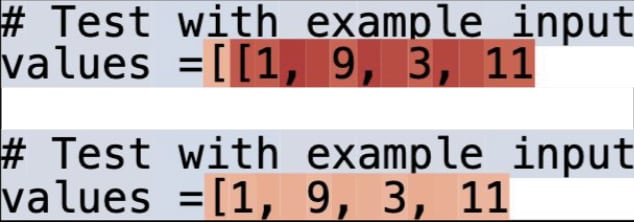

creating surprising adversarial attacks using our recent paper on circuit sparsity for interpretability

we train a model with sparse weights and isolate a tiny subset of the model (our "circuit") that does this bracket counting task where the model has to predict whether to output ] or ]]. It's simple enough that we can manually understand everything about it, every single weight and activation involved, and even ablate away everything else without destroying task performance.

(this diagram is for a slightly different task because i spent an embarrassingly large number of hours making this figure and decided i never wanted to make another one ever again)

in particular, the model has a residual channel delta that activates twice as strongly when you're in a nested list. it does this by using the attention to take the mean over a [ channel, so if you have two [s then it activates twice as strongly. and then later on it thresholds this residual channel to only output ]] when your nesting depth channel is at the stronger level.

but wait. the mean over a channel? doesn't that mean you can make the context longer and "dilute" the value, until it falls below the threshold? then, suddenly, the ...
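
a toy sketch of the dilution idea. this is not the circuit from the paper: the 0/1 indicator, the filler token "x", and the 0.15 threshold are all hypothetical stand-ins, chosen only to show how averaging over the context interacts with a fixed threshold.

```python
def bracket_channel(tokens):
    """Mean over all positions of a 0/1 indicator for '[' (stand-in for averaging attention)."""
    return sum(tok == "[" for tok in tokens) / len(tokens)


def predicts_double_close(tokens, threshold):
    """Toy readout: output ']]' only if the averaged channel clears the threshold."""
    return bracket_channel(tokens) > threshold


# hypothetical threshold, calibrated for contexts of length 10:
# one '[' gives 0.1, two give 0.2, so 0.15 separates them
THRESHOLD = 0.15

flat = ["x"] * 9 + ["["]         # depth 1, length 10
nested = ["x"] * 8 + ["[", "["]  # depth 2, length 10
diluted = ["x"] * 10 + nested    # same two brackets, length 20

print(predicts_double_close(flat, THRESHOLD))     # False
print(predicts_double_close(nested, THRESHOLD))   # True
print(predicts_double_close(diluted, THRESHOLD))  # False
```

in this toy the padded context still has nesting depth 2, but the mean drops from 0.2 to 0.1 and falls under the threshold, so the readout flips.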

Aside: For me, this paper is potentially the most exciting interpretability result of the past several years (since SAEs). Scaling it to GPT-3 and beyond seems like a very promising direction. Great job!

I think people in these parts are not taking sufficiently seriously the idea that we might be in an AI bubble. this doesn't necessarily mean that AI isn't going to be a huge deal - just because there was a dot com bubble doesn't mean the Internet died - but it does very substantially affect the strategic calculus in many ways.

I would be utterly unsurprised to see an AI crash in the next 24 months, leading to another AI Winter. I lived through 1999 and Petfood.com and the Internet bubble pop. And I can pattern match.

But the Internet crash didn't last long. Google and Amazon survived just fine, Ruby on Rails was big within half a decade, and soon enough we were doing Web 2.0 and AJAX and all that fun stuff.

It's possible that current generation LLMs might hit a wall soon, for various architectural reasons that are obvious to many people but that I'm superstitiously averse to amplifying. If they do, that increases the chance of an AI Winter until the underlying research gets done.

But I have trouble imagining any series of events that buys us 10 more years. Bubble pops in tech are usually an early correction that wipes out a Cambrian Explosion of dumb money, and that ultimately concentrates resources into a few successful players.

some reasons why it matters

- all effects that route through longer timelines (allocating more to upskilling oneself and others, longer term bets, not expecting agi to look like current models, aggressiveness of distributing funds to alignment, etc)

- whether to pursue an aggressive (stock-heavy) or conservative (bond-heavy) investment strategy. if there is an ai bubble pop, it will likely bring the entire economy into a recession.

- how much money to save as runway; should you be taking advantage of the bubble to grab as much cash as possible before the music stops, or should you be trying to dispose of all of your money before the singularity makes it worthless?

- for lab employees: how much lab equity to sell/hold?

- how much to emphasize "agi soon" in public comms, or in conversations with policymakers? (during a bubble pop, having predicted agi soon will probably be even more negatively viewed than merely having been wrong about timelines with no pop)

- if there is a bubble and it pops, sentiment around agi will flip from inevitability to impossibility. many people will not be epistemically strong enough to resist the urge to conform. being aware of the hype cycle can help free yourself from it and avoid both over and under exuberance.

i think of the idealized platonic researcher as the person who has chosen ultimate (intellectual) freedom over all else. someone who really cares about some particular thing that nobody else does - maybe because they see the future before anyone else does, or maybe because they just really like understanding everything about ants or abstract mathematical objects or something. in exchange for the ultimate intellectual freedom, they give up vast amounts of money, status, power, etc.

one thing that makes me sad is that modern academia is, as far as I can tell, not this. when you opt out of the game of the Economy, in exchange for giving up real money, status, and power, what you get from Academia is another game of money, status, and power, with different rules, and much lower stakes, and also everyone is more petty about everything.

at the end of the day, what's even the point of all this? to me, it feels like sacrificing everything for nothing if you eschew money, status, and power, and then just write a terrible irreplicable p-hacked paper that reduces the net amount of human knowledge by adding noise and advances your career so you can do more terrible useless papers. at that point, why not just leave academia and go to industry and do something equally useless for human knowledge but get paid stacks of cash for it?

ofc there are people in academia who do good work but it often feels like the incentives force most work to be this kind of horrible slop.

I hear this a lot, and as a PhD student I definitely see some adverse incentives, but I basically just ignore them and do what I want. Maybe I’ll eventually get kicked out of the academic system, but it will take years, which is enough time to do obviously excellent work if I have that potential. Obviously excellent work seems to be sufficient to stay in academia. So the problem doesn’t really seem that bad to me - the bottom 60% or so grift and play status games, but probably weren’t going to contribute much anyway, and the top 40% occasionally wastes time on status games because of the culture or because they have that type of personality, but often doesn’t really need to.

I suspect that academia would be less like this if there weren't an oversupply of labor in academia. Like, there's this crazy situation where there are way more people who want to be professors than there are jobs for professors. So a bunch get filtered out in grad school, and a bunch more get filtered out in early stages of professorhood. So professors can't relax and research what they are actually curious about until fairly late in the game (e.g. tenure) because they are under so much competition to impress everyone around them with publications and whatnot.

Also, the person who's willing to mud-wrestle for twenty years to get a solid position so they can turn around and do real research is just much much rarer than the person who enjoys getting dirty.

academia is too broad of a term. most of math, physics, theoretical CS, paleontology, material sciences, engineering, and some branches of economics, biology, (computational) neuroscience, (computational) linguistics, statistics etc are doing well and overall reward intellectual freedom and deep work. in terms of people this is a small minority of total academics, probably <5%.

It is true that many subfields, or even entire domains of science, are diseased disciplines. Most of the research is marginal, irrelevant, reinventing the wheel, trivial, tautological, p-hacked and often even fraudulent. One can point to the usual suspects in the humanities and the social sciences, but disciplines where the majority of research is noise, nonsense or even net-negative plausibly also include machine learning and (I'm told) medicine.

Is that disappointing? Perhaps. But this still describes hundreds of thousands or millions of people all over the world pushing the frontier of knowledge.

maybe I should host an antechamber/arena house party: one chill cozy room with soothing music where no arguing is allowed and people are strongly encouraged to say kind things and reflect on things they're grateful for and whatnot, and another with harsh fluorescent lights and agitating music and a big whiteboard full of hot takes and the conversations all get transcribed by speech to text and posted on lesswrong in real time. and guests are given a heart rate monitor that beeps if their HR gets too high, forcing them to spend a few minutes in the chill room before returning to the arena

Arguments will be won by the attendees with the best cardio fitness (low resting HR) + mental discipline (less affected by agitating surroundings). This creates a natural incentive to exercise and meditate.

Inducing sexual arousal seems like a better equilibrium, as long as everyone consents. It has positive valence roughly proportional to ΔHR, solves gender ratio problems and incentivizes people to learn effective flirting.

on the one hand, it is a desirable feature of an intellectual community to be truth seeking, and while it can be deeply emotionally painful to part ways with deeply held beliefs, in the long run it's better to tear off the bandage. on the other hand, being emotionally hurt all the time by your community kind of fucking sucks, and isn't very good for long term emotional or epistemic health.

perhaps a middle ground is in order: intellectual communities should be partitioned into an arena, where every idea is to be exposed to the harsh light of truth, and an antechamber, where you can rest and be surrounded by positivity and develop ideas in a supportive environment.

both are necessary - we need a way to kill bad ideas, because an environment that refuses to discard bad ideas because they are emotionally load bearing is doomed to epistemic ruin. but also the best weird ideas often sound bad initially, and require a safe environment to develop; and we are all human, and our emotional well being and desire to belong to a community is essential. by visibly separating the two, we might be able to get the best of both worlds.

this is not a crazy idea - many other parts of society have analogous things. for example, people who play sports for fun with their friends compete to win while on the field, but this only brings them closer off the field.

I think one common criticism of LW is that it is too much of an arena, and not enough of an antechamber. perhaps this can be fixed somehow.

Orthogonally, cultural standards of emotional tone during debates are also important for how much emotional struggle is involved in changing one's ideas.

If the tone implies that you were foolish for holding your idea, it's going to be a lot more painful to let it go.

Lesswrong has a pretty good standard of not just civil but polite and supportive discourse. This seems actually pretty crucial for it being an environment in which people do regularly change their minds.

I don't like the term arena in your suggested division because it implies combat. Combat is emotionally intense, I'd rather have a metaphor that's more collaborative.

This doesn't eliminate the worth of having separate spaces for support and rigorous testing of ideas, but I think it's important to keep in mind whenever we're discussing group epistemics.

if anything, it seems more common that people dig into incorrect beliefs because of a sense of adversity against others

Consider cults (including milder things like weird "alternative" health advice groups etc.). Positivity and mutual support seem like a key element of their architecture, and adversity often primarily comes from peers rather than an outgroup. I'm not talking about isolated beliefs, content and motivations for those tend to be far more legible. A lot of belief memeplexes have either too few followers or aren't distinct enough from all the other nonsense to be explicitly labeled as cults or ideologies, or to be organized, but you generally can't argue their members out of alignment with the group (on relevant beliefs, considered altogether).

the point ... is to make it clear that when you are receiving kindness, you are not receiving updates towards truth

This is also a standard piece of anti-epistemic machinery of groups that reinforce some nonsense memeplex among themselves with support and positivity. Support and positivity are great, but directing them to systematically taboo correctness-fixing activity is what I'm gesturing at, the sort of "kindness" that by its intent and nature tends to trade off against correctness.

I think one common criticism of LW is that it is too much of an arena, and not enough of an antechamber

And another common criticism is that it is too much the antechamber.

Proposed name: Butterfly Conservatory (https://www.lesswrong.com/posts/imnfJ9Ris7GgjkZbT/the-bughouse-effect-1#My_stag_is_best_stag)

it can be deeply emotionally painful to part ways with deeply held beliefs

This is not necessarily the case, not for everyone. Theories and their credences don't need to be cherished to be developed, or acted upon, they only need to be taken seriously. Plausibly this can be mitigated by keeping identity small, accepting only more legible things in the role of "beliefs" that can have this sort of psychological effect (so that they can be defeated through argument alone). Legible ideas cover a surprising amount of territory, there is no pragmatic need to treat anything else as "beliefs" in this sense, all the other things can remain ambient epistemic content detached from who you are. When more nebulous worldviews become part of one's identity, they become nearly impossible to dislodge (and possibly painful, with enough context and effort). They are still worth developing towards eventual legibility, and not practical to argue with (or properly explain).

Thus arguing legible beliefs should by their nature be less intrusive than arguing nebulous worldviews. And perhaps nebulous worldviews should be argued against being held as "beliefs" in the emotional sense in general, regardless of their apparent correctness, as a matter of epistemic hygiene. Ensuring by habit you are not going to be in the position where you have "beliefs" that would be painful to part ways with, and also can't be pinned down clearly enough to dispel.

there are very few people in the world who don't deeply emotionally hold quite a few important beliefs. having a small identity is difficult in practice, because having an identity is an important part of how nearly everyone navigates this complex and confusing world. I'm skeptical of anyone who claims to have completely eliminated all emotional attachment to all of their important decision-relevant beliefs.

but even assuming that you have somehow achieved perfect small identityness and emotional independence of all of your important beliefs and it all works out great for you, you must surely acknowledge that there are many people out there who have not. and probably they are more likely to achieve rationalist enlightenment if they are surrounded by people who are supportive but nudge gently towards truth seeking, rather than immediately coming in with a wrecking ball and demolishing emotionally load bearing pillars.

there are a lot of video games (and to a lesser extent movies, books, etc) that give the player an escapist fantasy of being hypercompetent. It's certainly an alluring promise: with only a few dozen hours of practice, you too could become a world class fighter or hacker or musician! But because becoming hypercompetent at anything is a lot of work, the game has to put its finger on the scale to deliver on this promise. Maybe flatter the user a bit, or let the player do cool things without the skill you'd actually need in real life.

It's easy to dismiss this kind of media as inaccurate escapism that distorts people's views of how complex these endeavors of skill really are. But it's actually a shockingly accurate simulation of what it feels like to actually be really good at something. As they say, being competent doesn't feel like being competent, it feels like the thing just being really easy.

reliability is surprisingly important. if I have a software tool that is 90% reliable, it's actually not that useful for automation, because I will spend way too much time manually fixing problems. this is especially a problem if I'm chaining multiple tools together in a script. I've been bit really hard by this because 90% feels pretty good if you run it a handful of times by hand, but then once you add it to your automated sweep or whatever it breaks and then you have to go in and manually fix things. and getting to 99% or 99.9% is really hard because things break in all sorts of weird ways.
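
a quick sketch of why chaining hurts so much. it assumes each tool fails independently, which is a simplification (real failures are often correlated), and the 5-step chain and 100-run sweep are made-up numbers.

```python
def chain_success_rate(per_step_reliability, num_steps):
    """Probability that every step in a chain succeeds, assuming independent failures."""
    return per_step_reliability ** num_steps


def expected_manual_fixes(per_step_reliability, num_steps, num_runs):
    """Expected number of runs in a sweep that break somewhere and need a human."""
    return num_runs * (1.0 - chain_success_rate(per_step_reliability, num_steps))


for reliability in (0.90, 0.99, 0.999):
    rate = chain_success_rate(reliability, num_steps=5)
    fixes = expected_manual_fixes(reliability, num_steps=5, num_runs=100)
    print(f"{reliability:.1%} per step: {rate:.1%} end to end, "
          f"about {fixes:.0f} broken runs per 100")
```

at 90% per step, a 5-step chain finishes cleanly only about 59% of the time, so a 100-run sweep needs roughly 40 manual interventions. that is the gap between "feels fine by hand" and "unusable in automation".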

I think this has lessons for AI - lack of reliability is one big reason I fail to get very much value out of AI tools. if my chatbot catastrophically hallucinates once every 10 queries, then I basically have to look up everything anyways to check. I think this is a major reason why cool demos often don't mean things that are practically useful - 90% reliable is great for a demo (and also you can pick tasks that your AI is more reliable at, rather than tasks which are actually useful in practice). this is an informing factor for why my timelines are longer than some other people's

One nuance here is that a software tool that succeeds at its goal 90% of the time, and fails in an automatically detectable fashion the other 10% of the time is pretty useful for partial automation. Concretely, if you have a web scraper which performs a series of scripted clicks in hardcoded locations after hardcoded delays, and then extracts a value from the page from immediately after some known hardcoded text, that will frequently give you a ≥ 90% success rate of getting the piece of information you want while being much faster to code up than some real logic (especially if the site does anti-scraper stuff like randomizing css classes and DOM structure) and saving a bunch of work over doing it manually (because now you only have to manually extract info from the pages that your scraper failed to scrape).
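
A minimal sketch of that partial-automation pattern, assuming failures are detectable (the extractor returns `None` or raises). The helper names, the `"Price:"` marker, and the sample pages are hypothetical, and the string-based extractor stands in for a real scraper.

```python
from typing import Callable, Dict, Iterable, List, Optional, Tuple


def extract_after_marker(page: str, marker: str = "Price:") -> Optional[str]:
    """Brittle extractor: return the first token after a hardcoded marker, else None."""
    _, found, rest = page.partition(marker)
    tokens = rest.split()
    if not found or not tokens:
        return None  # detectable failure: marker missing or nothing after it
    return tokens[0]


def partially_automate(
    items: Iterable[str], extract: Callable[[str], Optional[str]]
) -> Tuple[Dict[str, str], List[str]]:
    """Run the cheap extractor everywhere; queue detectable failures for a human."""
    done: Dict[str, str] = {}
    needs_manual: List[str] = []
    for item in items:
        try:
            value = extract(item)
        except Exception:
            value = None  # treat any crash as a detectable failure
        if value is None:
            needs_manual.append(item)
        else:
            done[item] = value
    return done, needs_manual


pages = ["Price: 10 USD", "no data here", "Price: 7 EUR"]
done, needs_manual = partially_automate(pages, extract_after_marker)
print(done)          # values the scraper got on its own
print(needs_manual)  # pages left for manual extraction
```

The value comes entirely from the failure being visible: a silent wrong answer would end up in `done` and nobody would know to check it.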

even if scaling does eventually solve the reliability problem, it means that very plausibly people are overestimating how far along capabilities are, and how fast the rate of progress is, because the most impressive thing that can be done with 90% reliability plausibly advances faster than the most impressive thing that can be done with 99.9% reliability

i've noticed a life hyperparameter that affects learning quite substantially. i'd summarize it as "willingness to gloss over things that you're confused about when learning something". as an example, suppose you're modifying some code and it seems to work but also you see a warning from an unrelated part of the code that you didn't expect. you could either try to understand exactly why it happened, or just sort of ignore it.

reasons to set it low:

- each time your world model is confused, that's an opportunity to get a little bit of signal to improve your world model. if you ignore these signals you increase the length of your feedback loop, and make it take longer to recover from incorrect models of the world.

- in some domains, it's very common for unexpected results to actually be a hint at a much bigger problem. for example, many bugs in ML experiments cause results that are only slightly weird, but if you tug on the thread of understanding why your results are slightly weird, this can cause lots of your experiments to unravel. and doing so earlier rather than later can save a huge amount of time

- understanding things at least one level of abstraction down often lets you do things more

I'm very confused why purchasing power varies so dramatically internationally. like why are there countries where everyone has very low wages but everything is also really cheap so it balances out? prima facie, huge disparities like this should get evened out by arbitrage.

the simple explanation is that some labor can only be performed locally, labor mobility is limited (immigration laws, people don't like moving, etc), and transportation costs for goods exist (shipping and tariffs).

however, global shipping is ridiculously cheap. and the economy increasingly consists of white collar jobs which could in theory be done remotely. for example, it seems mind boggling to me that a top tier SWE/RS in the bay area is worth 10-100x more than one in India or Vietnam. like sure, someone being in the same timezone is great, and Zoom sucks, and so on. but for that price delta surely you could pay people to live nocturnally, construct apartments with bright lights synced to Pacific Time, invest in much better video call technology like that Google Beam thingy, etc?

maybe one possibility is that labor mobility is not actually that low for the very toppest tier people, and so if someone is actual...

In what sense does it actually balance out? e.g. in India, unskilled labor is a lot cheaper, so lots of upper middle class people have servants. But the price of an iPhone in India is pretty similar to the price of an iPhone in the US.

So my impression is that the typical basket of goods and services that people consume in different places around the world at roughly equivalent / analogous relative economic classes actually does vary quite a bit. Anything with a labor component will naturally scale up and down for balance, but staples and stuff made in factories doesn't vary that much. In the US for example, labor of all types is very expensive, so people don't have servants, but most people can afford a pretty much endless supply of trinkets and gadgets.

I worked on an international team during my time at F5 and we had offices in Ireland, Poland, two timezones in the US, Australia and India. The assumption that we could teleconference our way out of geography was a laughable failure for one reason that your hypothetical "nocturnal white collar sweatshops" fails to address: Humans work to live, we don't live to work. Well, most of us that is, and the unbalanced folks (the 10x engineers as they are now called) who would work across timezones burned out dramatically (I was one of them). Why are silicon valley jobs so lucrative but also cost of living so high? Because the people there have children in schools, they socialize with people outside their work and they generally live a life not just work. So how does this play out in a workplace?

Engineering planning has to happen at some hour, it is naturally inconvenient for outliers (Poland is meeting at 7pm thinking about how they missed dinner with their kids, and the engineers from Delhi are up at 11:30pm likely sneaking a nap in before the meeting, and the team in Seattle is just finishing their morning coffee). This creates a situation where both sides of the distribution are ov...

I don't understand it either. I work in Germany with near-shoring colleagues in Slovakia, Serbia, Georgia etc. They are roughly 60% cheaper than German SWEs, generally just as competent, no time-zone problems whatsoever ... basically all the work even with German team members is fully remote so even that is not a difference. Only the need for English creates some minor friction. No idea how this state of affairs makes economic sense now or ever did.

don't worry too much about doing things right the first time. if the results are very promising, the cost of having to redo it won't hurt nearly as much as you think it will. but if you put it off because you don't know exactly how to do it right, then you might never get around to it.

it feels so narratively incongruous that san francisco would become the center for the most ambitious, and the likely future birthplace of agi.

san francisco feels like a city that wants to pretend to be a small quaint hippie town forever. it's a small splattering of civilization dropped amid a vast expanse of beautiful nature. frozen in amber, it's unclear if time even passes here - the lack of seasons makes it feel like a summer that never quite ended. after 9pm, everything closes and everyone goes to bed. and the dysfunction of the city government is never too far away, constantly reminding you of humanity's follies next to the perfection of nature.

on the other hand, nyc feels like the city. everything is happening right here, right now. all the money in the world flows through this one place. it's gritty and yet majestic at the same time. the most ambitious people in the world came here to build their fortunes, and live on in the names on the skyscrapers everywhere that house the employees who continue to keep their companies running. they are part of a surroundings that is entirely constructed by man - even the bits of nature are curated and parcelled out in manageable units. i...

Cali is the place to be for technology because Cali was the defense contractor hub, with the U.S. Navy choosing the bay area as its center for R&D during WWII and the Cold War. The hippie reputation came a lot later, after its status as the primary place to work in IT was thoroughly cemented, with both established infrastructure and the network effect keeping it that way.

HP, for instance, was founded in 1938.

It's not just SF but the SF Bay Area (Google, Nvidia, Meta etc), which is bigger and has more varied vibes than just SF.

in some way, bureaucracy design is the exact opposite of machine learning. while the goal of machine learning is to make clusters of computers that can think like humans, the goal of bureaucracy design is to make clusters of humans that can think like a computer

some thoughts on the short timeline agi lab worldview. this post is the result of taking capabilities people's world models and mashing them into alignment people's world models.

I think there are roughly two main likely stories for how AGI (defined as able to do any intellectual task as well as the best humans, specifically those tasks relevant for kicking off recursive self improvement) happens:

- AGI takes 5-15 years to build. current AI systems are kind of dumb, and plateau at some point. we need to invent some kind of new paradigm, or at least make a huge breakthrough, to achieve AGI. how easily aligned current systems are is not strongly indicative of how easily aligned future AGI is; current AI systems are missing the core of intelligence that is needed.

- AGI takes 2-4 years to build. current AI systems are really close and we just need more compute and schlep and minor algorithmic improvements. current AI systems aren't exactly aligned, but they're like pretty aligned, certainly they aren't all secretly plotting our downfall as we speak.

while I usually think about story 1, this post is about taking story 2 seriously.

it seems basically true that current AI systems are mostly align...

certainly if AI systems were only ever roughly this misaligned we'd be doing pretty well.

I think this is an important disagreement with the "alignment is hard" crowd. I particularly disagree with "certainly."

The question is "what exactly is the AI trying to do, and what happens if it magnified its capabilities a millionfold and it and its descendants were running open-endedly?", and are any of the instances catastrophically bad?

Some things you might mean that are raising your position to "certainly" (whereas I'd say "most likely not, or, it's too dumb to even count as 'aligned' or 'misaligned'")

- "this ratio of 'do the thing you want' to 'sometimes do a thing you didn't want' is pretty acceptable."

- "this magnitude of 'worst case outcome' is not that bad." (this seems technically true, but, is only because the capability level is low)

- given this ratio of right/wrong responses, you think a smart alignment researcher who's paying attention can keep it in a corrigibility basin even as capability levels rise?

Were any of those what you meant? Or are you thinking about it an entirely different way?

I would naively expect, if you took LLM agents' current degree of alignment, and ran a lotta copies trying to help you with end-to-end alignment research with dialed up capabilities, at least a couple instances would end up trying to subtly sabotage you and/or escape.

my referral/vouching policy is i try my best to completely decouple my estimate of technical competence from how close a friend someone is. i have very good friends i would not write referrals for and i have written referrals for people i basically only know in a professional context. if i feel like it's impossible for me to disentangle, i will defer to someone i trust and have them make the decision. this leads to some awkward conversations, but if someone doesn't want to be friends with me because it won't lead to a referral, i don't want to be friends with them either.

Strong agree (except in that liking someone's company is evidence that they would be a pleasant co-worker, but that's generally not a high order bit). I find it very annoying that standard reference culture seems to often imply giving extremely positive references unless someone was truly awful, since it makes it much harder to get real info from references

how valuable are formalized frameworks for safety, risk assessment, etc in other (non-AI) fields?

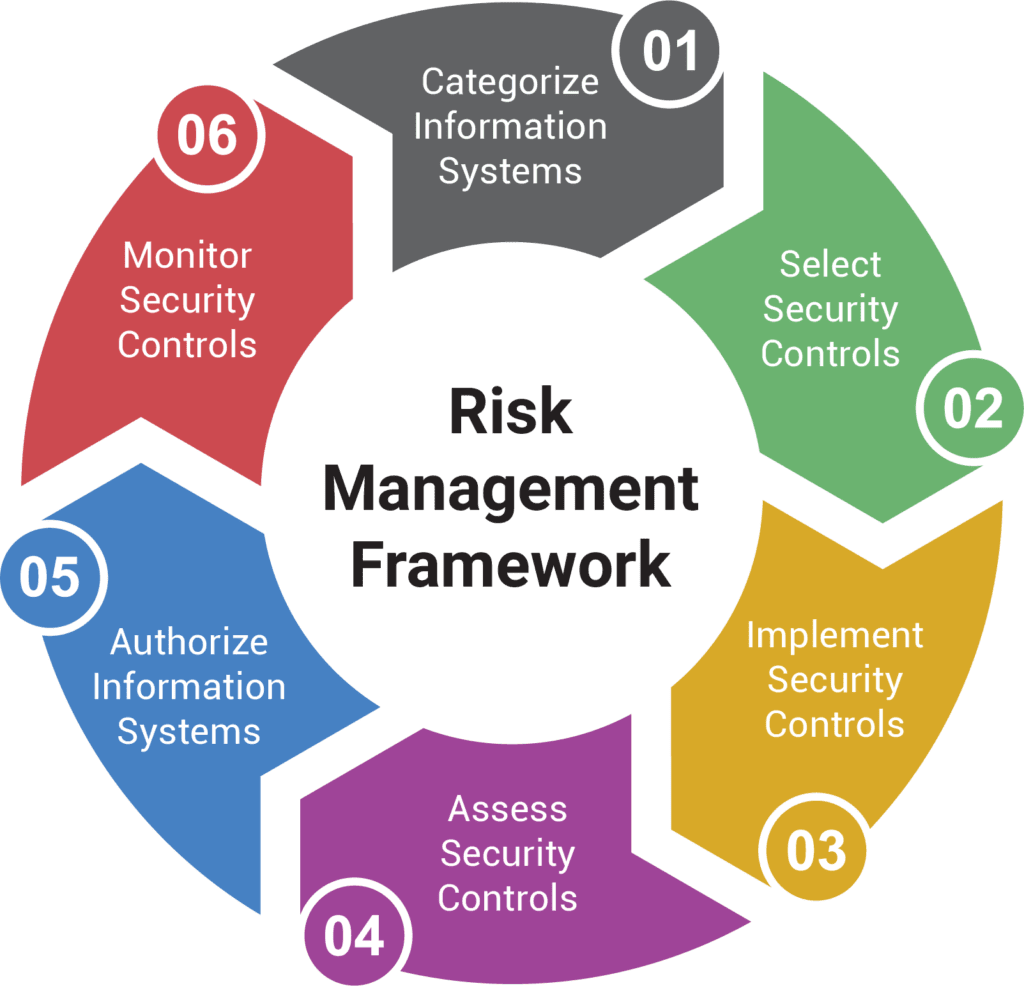

i'm temperamentally predisposed to find that my eyes glaze over whenever i see some kind of formalized risk management framework, which usually comes with a 2010s style powerpoint diagram - see below for a random example i grabbed from google images:

am i being unfair to these things? are they actually super effective for avoiding failures in other domains? or are they just useful for CYA and busywork and mostly just say common sense in an institutionally legible way?

one reason i care is because i feel some level of instinctive dislike for some AI safety/governance frameworks because they give me this vibe. but it's useful to figure out if i'm being unfairly judgemental, or if these really are slop.

(this is based on / expanded from a response I wrote to a tweet that was talking about how autistic people struggle in the world because the world follows unwritten rules that are more important than the written ones.)

I think most autistic people should invest more in understanding the unwritten rules. it can be cruel and unfair, but it's important to know how to interact with it. and it's actually a really interesting system to map out, with its own rhyme and reason.

it's entirely understandable that people feel burned by bad past experiences, and have learned helplessness from bullying or other unfair treatment. this kind of thing leaves a scar and can make it feel viscerally hopeless.

but it still feels defeatist to just throw up one's hands and say "it's too complicated." yes, it's complicated and fuzzy and initially unintuitive and takes years to master. so is ML research. the point of being intelligent is that you are good at finding patterns and learning things, and there's nothing truly fundamentally different about the unwritten rules of social interaction.

I see people taking examples of weird unintuitive social rules all the time and, tbh, none of them are truly that com...

I don't know about other autists, but my primary problem with the neurotypical world isn't that I don't understand it, it's that they don't understand me. It doesn't matter how well I can decode the social norms, if I can't also control my involuntary emotional expressions, and also do other things ranging from impossible to unpleasant.

I do understand social white lies. It's not that complicated. But I still find it unpleasant to speak them. When I was younger I got into trouble for literally being unable to utter words like "thanks" and "apology" when I did not mean them. (My native language does not have the ambiguous "sorry".) I am now able to tell white lies, but it makes me feel bad, in a way that has nothing to do with morals. The dissonance is just intrinsically hurtful to my soul, in a way that non-autistic people don't understand and typically don't respect.

Another common thing is that people assume that if I don't succeed in hiding my negative emotion this is an invitation/request for them to try to help me, and then proceed to try to do that, even though they have zero skills in this. And then they refuse to listen to anything I say, including not leaving me alon...

I think another reason why people procrastinate is that it makes each minute spent right before the deadline both obviously high value on net and resulting in immediate payoff. this makes the decision to put in effort in each moment really easy - obviously it makes sense to spend a minute working on something that will make a big impact on tomorrow. whereas each minute long before the deadline has longer time till payoff, and if you already put in a ton of work early on, then the minutes right before the deadline have lower marginal value because of diminishing returns. so this creates a perverse incentive to end-load the effort

one big problem with using LMs too much imo is that they are dumb and catastrophically wrong about things a lot, but they are very pleasant to talk to, project confidence and knowledgeability, and reply to messages faster than 99.99% of people. these things are more easily noticeable than subtle falsehood, and reinforce a reflex of asking the model more and more. it's very analogous to twitter soundbites vs reading long form writing and how that eroded epistemics.

hotter take: the extent to which one finds current LMs smart is probably correlated with how much one is swayed by good vibes from their interlocutor as opposed to the substance of the argument (ofc conditional on the model actually giving good vibes, which varies from person to person. I personally never liked chatgpt vibes until I wrote a big system prompt)

learning thread for taking notes on things as i learn them (in public so hopefully other people can get value out of it)

VAEs:

a normal autoencoder decodes single latents z to single images (or whatever other kind of data) x, and also encodes single images x to single latents z.

with VAEs, we want our decoder (p(x|z)) to take single latents z and output a distribution over x's. for simplicity we generally declare that this distribution is a gaussian with identity covariance, and we have our decoder output a single x value that is the mean of the gaussian.

because each x can be produced by multiple z's, to run this backwards you also need a distribution of z's for each single x. we call the ideal encoder p(z|x) - the thing that would perfectly invert our decoder p(x|z). unfortunately, we obviously don't have access to this thing. so we have to train an encoder network q(z|x) to approximate it. to make our encoder output a distribution, we have it output a mean vector and a stddev vector for a gaussian. at runtime we sample a random vector eps ~ N(0, 1), multiply it elementwise by the stddev vector, and add the mean vector to get a sample from N(mu, std).
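a minimal sketch of that sampling step, in plain python so it runs anywhere. the function name `sample_latent` and the example `mu` / `std` values are made up for illustration (in a real VAE they would come out of the encoder network). it draws eps ~ N(0, 1) per latent dimension and computes z = mu + std * eps, then checks empirically that the samples have roughly the requested mean and stddev:

```python
import random
import statistics

def sample_latent(mu, std, rng):
    """Draw one z ~ N(mu, diag(std^2)) as z = mu + std * eps, with eps ~ N(0, 1)."""
    eps = [rng.gauss(0.0, 1.0) for _ in mu]  # one standard normal per latent dim
    return [m + s * e for m, s, e in zip(mu, std, eps)]

rng = random.Random(0)
mu = [1.0, -2.0]   # hypothetical encoder mean output
std = [0.5, 2.0]   # hypothetical encoder stddev output

samples = [sample_latent(mu, std, rng) for _ in range(20_000)]

# per-dimension empirical statistics; these should land close to mu and std
emp_mean = [statistics.fmean(col) for col in zip(*samples)]
emp_std = [statistics.pstdev(col) for col in zip(*samples)]
print(emp_mean, emp_std)
```

the reason to write the sample as a deterministic function of (mu, std) plus independent noise, rather than sampling from N(mu, std) directly, is that gradients can then flow through mu and std back into the encoder.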

to train this thing, we would like to optimize the following loss function:

-log p(x) + KL(q(z|x)||p(z|x))

where the terms optimize the likelihood (how good is the VAE at modelling dat...

ilya's AGI predictions circa 2017 (Musk v. Altman, Dkt. 379-40):

Within the next three years, robotics should be completely solved, AI should solve a long-standing unproven theorem, programming competitions should be won consistently by AIs, and there should be convincing chatbots (though no one should pass the Turing test). In as little as four years, each overnight experiment will feasibly use so much compute that there's an actual chance of waking up to AGI, given the right algorithm - and figuring out the algorithm will actually happen within 2-4 further years of experimenting with this compute in a competitive multiagent simulation.

[...]

Each year, we'll need to exponentially increase our hardware spend, but we have reason to believe AGI can ultimately be built with less than $10B in hardware.

a take I've expressed a bunch irl but haven't written up yet: feature sparsity might be fundamentally the wrong thing for disentangling superposition; circuit sparsity might be more correct to optimize for. in particular, circuit sparsity doesn't have problems with feature splitting/absorption

traveling through Europe, looking out the window, and seeing the national flag flying next to the flag of the EU fills me with a strange feeling. this isn't an original thought at all, but still: it's really crazy that just 50 years ago Europe was divided by the iron curtain, and that people would have to go to insane lengths and risk their lives to get across that border; and that less than 100 years ago all of these countries were at war with each other, and had been at war on and off for centuries with ever shifting alliances and boundaries.

the most valuable part of a social event is often not the part that is ostensibly the most important, but rather the gaps between the main parts.

- at ML conferences, the headline keynotes and orals are usually the least useful part to go to; the random spontaneous hallway chats and dinners and afterparties are extremely valuable

- when doing an activity with friends, the activity itself is often of secondary importance. talking on the way to the activity, or in the gaps between doing the activity, carries a lot of the value

- at work, a lot of the best conversations happen not in scheduled 1:1s and group meetings, but rather in spontaneous hallway or dinner groups

One of the directions I'm currently most excited about (modern control theory through algebraic analysis) I learned about while idly chitchatting with a colleague at lunch about old school cybernetics. We were both confused why it was such a big deal in the 50s and 60s and then basically died.