This post is a not-so-secret analogy for the AI Alignment problem. Via a fictional dialog, Eliezer explores and counters common questions about the Rocket Alignment Problem as approached by the Mathematics of Intentional Rocketry Institute.

MIRI researchers will tell you they're worried that "right now, nobody can tell you how to point your rocket’s nose such that it goes to the moon, nor indeed any prespecified celestial destination."

Recent Discussion

(Half-baked work-in-progress. There might be a “version 2” of this post at some point, with fewer mistakes, and more neuroscience details, and nice illustrations and pedagogy etc. But it’s fun to chat and see if anyone has thoughts.)

1. Background

There’s a neuroscience problem that’s had me stumped since almost the very beginning of when I became interested in neuroscience at all (as a lens into AGI safety) back in 2019. But I think I might finally have “a foot in the door” towards a solution!

What is this problem? As described in my post Symbol Grounding and Human Social Instincts, I believe the following:

- (1) We can divide the brain into a “Learning Subsystem” (cortex, striatum, amygdala, cerebellum and a few other areas) on the one hand, and a “Steering Subsystem”

We've learned a lot about the visual system by looking at ways to force it to wrong conclusions, which we call optical illusions or visual art. Can we do a similar thing for this postulated social cognition system? For example, how do actors get us to have social feelings toward people who don't really exist? And what rules do movie directors follow to keep us from getting confused by cuts from one camera angle to another?

This is a linkpost for On Duct Tape and Fence Posts.

Eliezer writes about fence post security: people think to themselves "in the current system, what's the weakest point?" and then dedicate their resources to shoring up the defenses at that point, not realizing that after the first small improvement in that area, there's likely now a new weakest point somewhere else.

Fence post security happens preemptively, when the designers of the system fixate on the most salient aspect(s) and don't consider the rest of the system. But this sort of fixation can also happen in retrospect, in which case it manifests a little differently but has similarly deleterious effects.

Consider a car that starts shaking whenever it's driven. It's uncomfortable, so the owner gets a pillow to put...

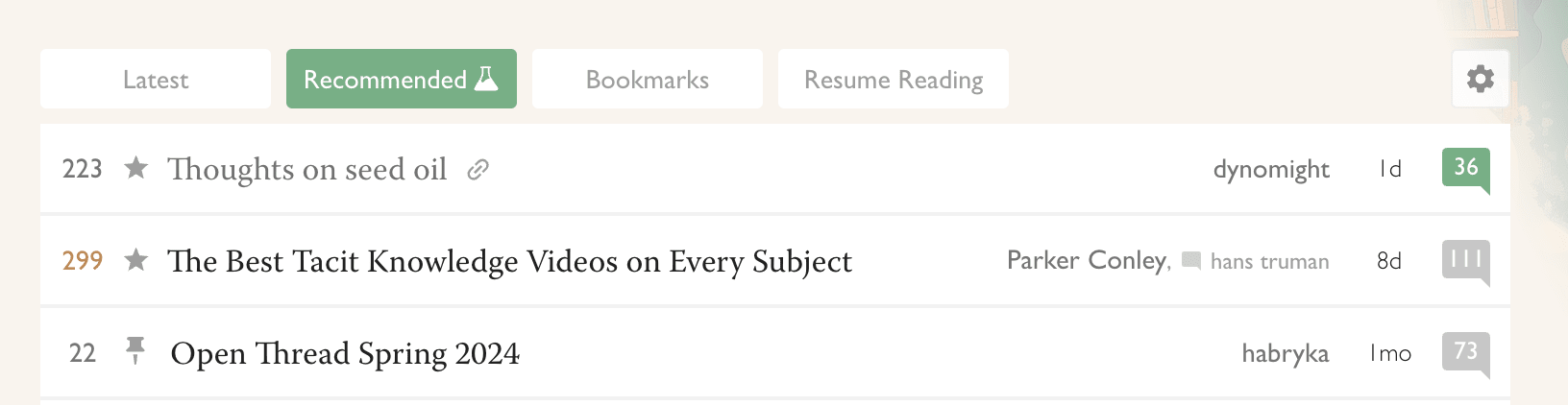

For the last month, @RobertM and I have been exploring the possible use of recommender systems on LessWrong. Today we launched our first site-wide experiment in that direction.

(In the course of our efforts, we also hit upon a frontpage refactor that we reckon is pretty good: tabs instead of a clutter of different sections. For now, only for logged-in users. Logged-out users see the "Latest" tab, which is the same-as-usual list of posts.)

Why algorithmic recommendations?

A core value of LessWrong is to be timeless and not news-driven. However, the central algorithm by which attention allocation happens on the site is the Hacker News algorithm[1], which basically only shows you things that were posted recently, and creates a strong incentive for discussion to always be...

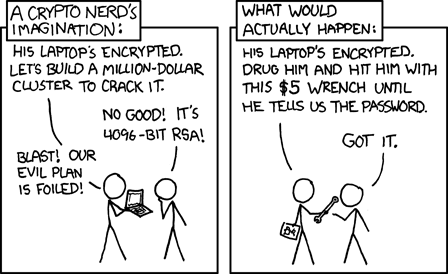

I would feel better about this if there was a high-infosec platform on which to discuss what is probably the most important topic in history (AI alignment). But noted.

- My current guess is that max good and max bad seem relatively balanced. (Perhaps max bad is 5x more bad/flop than max good in expectation.)

- There are two different (substantial) sources of value/disvalue: interactions with other civilizations (mostly acausal, maybe also aliens) and what the AI itself terminally values

- On interactions with other civilizations, I'm relatively optimistic that commitment races and threats don't destroy as much value as acausal trade generates on some general view like "actually going through with threats is a waste of resourc

TL;DR:

- Options traders think it's extremely unlikely that the stock market will appreciate more than 30 or 40 percent over the next two to three years, as it did over the last year. So they will sell you the option to buy current indexes for 30 or 40% above their currently traded value for very cheap.

- But slow takeoff, or expectations of one, would almost certainly cause the stock market to rise dramatically. Like many people here, I think institutional market makers are basically not pricing this in, and gravely underestimating volatility as a result, especially for large indexes like VTI which have never moved more than 50% in a single year.

- To take advantage of this, instead of buying individual tech stocks, I allocate a sizable chunk of my

Does buying shorter-term OTM derivatives each year not work here?

Specific examples would be nice. Not sure if I understand correctly, but I imagine something like this:

You always choose A over B. You have been doing it for such a long time that you forgot why. Without reflecting about this directly, it just seems like there probably is a rational reason or something. But recently, either accidentally or by experiment, you chose B... and realized that experiencing B (or expecting to experience B) creates unpleasant emotions. So now you know that the emotions were the real cause of choosing A over B all that time.

(This is p...

About a year ago I decided to try using one of those apps where you tie your goals to some kind of financial penalty. The specific one I tried is Forfeit, which I liked the look of because it’s relatively simple: you set single tasks, and you have to verify with a photo that you have completed them.

I’m generally pretty sceptical of productivity systems, tools for thought, mindset shifts, life hacks and so on. But this one I have found to be really shockingly effective; it has been about the biggest positive change to my life that I can remember. I feel like the category of things which benefit from careful planning and execution over time has completely opened up to me, whereas previously things like this would be largely down to the...

I like comments about other users' experiences for reasons similar to why I like the OP. I think maybe the ideal such comment would identify itself more clearly as an experience report, but I'd rather have the report than not.

We are trying our best to honor mana donations!

If you are inactive, you have until the end of the year to donate at the old rate. If you want to donate all your investments without having to sell each individually, we are offering you a loan to do that.

We removed the charity cap of $10k donations per month, which goes beyond what we previously communicated.

N.B. This is a chapter in a planned book about epistemology. Chapters are not necessarily released in order. If you read this, the most helpful comments would be on things you found confusing, things you felt were missing, threads that were hard to follow or seemed irrelevant, and otherwise mid to high level feedback about the content. When I publish I'll have an editor help me clean up the text further.

In the previous three chapters we broke apart our notions of truth and knowledge by uncovering the fundamental uncertainty contained within them. We then built back up a new understanding of how we're able to know the truth that accounts for our limited access to certainty. And while it's nice to have this better understanding, you might...

Author's note: This chapter took a really long time to write. Unlike previous chapters in the book, this one covers a lot more stuff in less detail, but I still needed to get the details right. So it took a long time both to figure out what I really wanted to say and to make sure I wasn't saying things that I would, upon reflection, regret having said because they were based on facts that I don't believe or had simply gotten wrong.

It's likely still not the best version of this chapter it could be, but at this point I think I've made all the key points I wanted to make here, so I'm publishing the draft now and expect this one to need a lot of love from an editor later on.