Rationality: A-Z (or "The Sequences") is a series of blog posts by Eliezer Yudkowsky on human rationality and irrationality in cognitive science. It is an edited and reorganized version of posts published to Less Wrong and Overcoming Bias between 2006 and 2009. This collection serves as a long-form introduction to formative ideas behind Less Wrong, the Machine Intelligence Research Institute, the Center for Applied Rationality, and substantial parts of the effective altruist community. Each book also comes with an introduction by Rob Bensinger and a supplemental essay by Yudkowsky.

The first two books, Map and Territory and How to Actually Change Your Mind, are available on Amazon in print and e-book editions.

The entire collection is available as an e-book and audiobook. A number of alternative reading orders for the essays can be found here, and a compilation of all of Eliezer's blog posts up to 2010 can be found here.

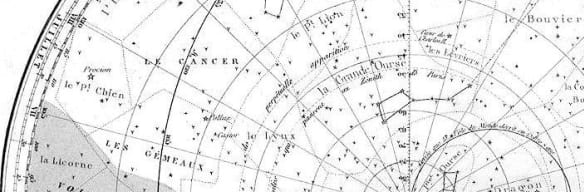

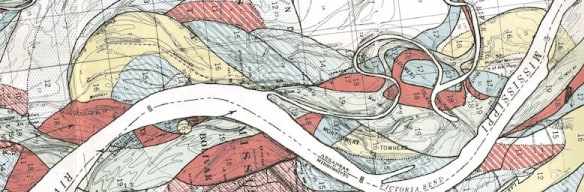

What is a belief, and what makes some beliefs work better than others? These four sequences explain the Bayesian notions of rationality, belief, and evidence. A running theme: the things we call “explanations” or “theories” may not always function like maps for navigating the world. As a result, we risk mixing up our mental maps with the other objects in our toolbox.

This truth thing seems pretty handy. Why, then, do we keep jumping to conclusions, digging our heels in, and recapitulating the same mistakes? Why are we so bad at acquiring accurate beliefs, and how can we do better? These seven sequences discuss motivated reasoning and confirmation bias, with a special focus on hard-to-spot species of self-deception and the trap of “using arguments as soldiers”.

Why haven’t we evolved to be more rational? Even taking into account resource constraints, it seems like we could be getting a lot more epistemic bang for our evidential buck. To get a realistic picture of how and why our minds execute their biological functions, we need to crack open the hood and see how evolution works, and how our brains work, with more precision. These three sequences illustrate how even philosophers and scientists can be led astray when they rely on intuitive, non-technical evolutionary or psychological accounts. By locating our minds within a larger space of goal-directed systems, we can identify some of the peculiarities of human reasoning and appreciate how such systems can “lose their purpose”.

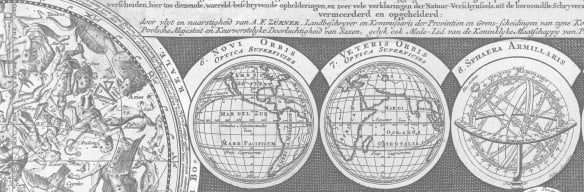

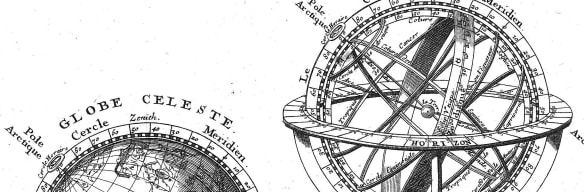

What kind of world do we live in? What is our place in that world? Building on the previous sequences’ examples of how evolutionary and cognitive models work, these six sequences explore the nature of mind and the character of physical law. In addition to applying and generalizing past lessons on scientific mysteries and parsimony, these essays raise new questions about the role science should play in individual rationality.

What makes something valuable—morally, or aesthetically, or prudentially? These three sequences ask how we can justify, revise, and naturalize our values and desires. The aim will be to find a way to understand our goals without compromising our efforts to actually achieve them. Here the biggest challenge is knowing when to trust your messy, complicated case-by-case impulses about what’s right and wrong, and when to replace them with simple exceptionless principles.

How can individuals and communities put all this into practice? These three sequences begin with an autobiographical account of Yudkowsky’s own biggest philosophical blunders, with advice on how he thinks others might do better. The book closes with recommendations for developing evidence-based applied rationality curricula, and for forming groups and institutions to support interested students, educators, researchers, and friends.

This sequence asks whether science resolves the problems raised so far. Scientists base their models on repeatable experiments, not speculation or hearsay. And science has an excellent track record compared to anecdote, religion, and . . . pretty much everything else. Do we still need to worry about “fake” beliefs, confirmation bias, hindsight bias, and the like when we’re working with a community of people who want to explain phenomena, not just tell appealing stories?

An account of irrationality would be incomplete if it provided no theory about how rationality works—or if its “theory” only consisted of vague truisms, with no precise explanatory mechanism. This sequence asks why it’s useful to base one’s behavior on “rational” expectations, and what it feels like to do so.

This, the first book of "Rationality: AI to Zombies" (also known as "The Sequences"), begins with cognitive bias. The rest of the book won’t stick to just this topic; bad habits and bad ideas matter, even when they arise from our minds’ contents as opposed to our minds’ structure.

It is cognitive bias, however, that provides the clearest and most direct glimpse into the stuff of our psychology, into the shape of our heuristics and the logic of our limitations. It is with bias that we will begin.

This sequence focuses on questions that are as probabilistically clear-cut as questions get. The Bayes-optimal answer is often infeasible to compute, but errors like confirmation bias can take root even in cases where the available evidence is overwhelming and we have plenty of time to think things over.

Now we move into a murkier area. Mainstream national politics, as debated by TV pundits, is famous for its angry, unproductive discussions. On the face of it, there’s something surprising about that. Why do we take political disagreements so personally, even when the machinery and effects of national politics are so distant from us in space or in time? For that matter, why do we not become more careful and rigorous with the evidence when we’re dealing with issues we deem important?

Just as it was useful to contrast humans as goal-oriented systems with inhuman processes in evolutionary biology and artificial intelligence, it will be useful in the coming sequences of essays to contrast humans as physical systems with inhuman processes that aren’t mind-like.

We humans are, after all, built out of inhuman parts. The world of atoms looks nothing like the world as we ordinarily think of it, and certainly looks nothing like the world’s conscious denizens as we ordinarily think of them. As Giulio Giorello put the point in an interview with Daniel Dennett: “Yes, we have a soul. But it’s made of lots of tiny robots.”

We start with a sequence on the basic links between physics and human cognition.

This sequence abstracts from human cognition and evolution to the idea of minds and goal-directed systems at their most general. These essays serve the secondary purpose of explaining the author’s general approach to philosophy and the science of rationality, which is strongly informed by his work in AI.

This sequence discusses the basic relationship between cognition and concept formation. 37 Ways That Words Can Be Wrong is a guide to the sequence.

The first sequence of The Machine in the Ghost aims to communicate the dissonance and divergence between our hereditary history, our present-day biology, and our ultimate aspirations. This will require digging deeper than is common in introductions to evolution for non-biologists, which often restrict their attention to surface-level features of natural selection.

Leveling up in rationality means encountering a lot of interesting and powerful new ideas. In many cases, it also means making friends who you can bounce ideas off of and finding communities that encourage you to better yourself. This sequence discusses some important hazards that can afflict groups united around common interests and amazing shiny ideas, which will need to be overcome if we’re to get the full benefits out of rationalist communities.

The last sequence focused on how feeling tribal often distorts our ability to reason. Now we'll explore one particular cognitive mechanism that causes this: much of our reasoning process is really rationalization, story-telling that makes our current beliefs feel more coherent and justified without necessarily improving their accuracy.

Our natural state isn’t to change our minds like a Bayesian would. Getting the people in opposing tribes to notice what they’re really seeing won’t be as easy as reciting the axioms of probability theory to them. As Luke Muehlhauser writes, in The Power of Agency:

You are not a Bayesian homunculus whose reasoning is “corrupted” by cognitive biases.

You just are cognitive biases.

Confirmation bias, status quo bias, correspondence bias, and the like are not tacked on

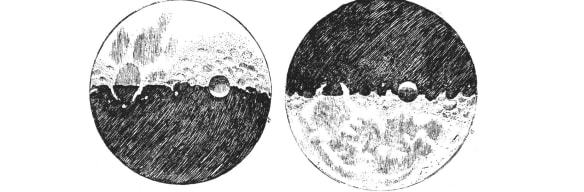

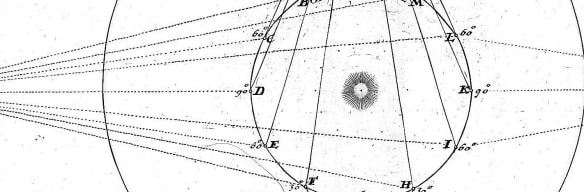

Quantum mechanics is our best mathematical model of the universe to date, powerfully confirmed by a century of tests. However, interpreting what the experimental results mean (how and when the Schrödinger equation and the Born rule interact) is a topic of much contention, with the main disagreement being between the Everett and the Copenhagen interpretations.

Yudkowsky uses this scientific controversy as a proving ground for some central ideas from previous sequences: map-territory distinctions, mysterious answers, Bayesianism, and Occam’s Razor.

Can we ever know what it’s like to be a bat? Traditional dualism, with its immaterial souls freely floating around violating physical laws, may be false; but what about the weaker thesis, that consciousness is a “further fact” not fully explainable by the physical facts? A number of philosophers and scientists have found this line of reasoning persuasive. If we feel this argument’s intuitive force, should we grant its conclusion and ditch physicalism?

We certainly shouldn’t

This sequence discusses rationality groups and group rationality, raising the questions:

How can we be confident we're seeing a real effect in a rationality intervention, and picking out the right cause?

Above all: What’s missing? What should be in the next generation of rationality primers—the ones that replace this text, improve on its style, test its prescriptions, supplement its content, and branch out in altogether new directions?