If the balance of opinion of scientists and policymakers (or those who had briefly heard arguments) was that AI catastrophic risk is high, and that this should be a huge social priority, then you could do a lot of things. For example, you could get budgets of tens of billions of dollars for interpretability research, the way governments already provide tens of billions of dollars of subsidies to strengthen their chip industries. Top AI people would be applying to do safety research in huge numbers. People like Bill Gates and Elon Musk who nominally take AI risk seriously would be doing stuff about it, and Musk could have gotten more traction when he tried to make his case to government.

My perception based on many areas of experience is that policymakers and your AI expert survey respondents on the whole think that these risks are too speculative and not compelling enough to outweigh the gains from advancing AI rapidly (your survey respondents state those are much more likely than the harms). In particular, there is much more enthusiasm for the positive gains from AI than your payoff matrix suggests (particularly among AI researchers), and more mutual fear (e.g. the CCP does not wan...

I think this comment is overstating the case for policymakers and the electorate actually believing that investing in AI is good for the world. I think the answer currently is "we don't know what policymakers and the electorate actually want in relation to AI" as well as "the relationship of policymakers and the electorate is in the middle of shifting quite rapidly, so past actions are not that predictive of future actions".

I really only have anecdata to go on (though I don't think anyone has much better), but my sense from doing informal polls of e.g. Uber drivers, people on Twitter, and perusing a bunch of Subreddits (which, to be clear, is a terrible sample) is that indeed a pretty substantial fraction of the world is now quite afraid of the consequences of AI, both in a "this change is happening far too quickly and we would like it to slow down" sense, and in a "yeah, I am actually worried about killer robots killing everyone" sense. I think both of these positions are quite compatible with pushing for a broad slow down. There is also a very broad and growing "anti-tech" movement that is more broadly interested in giving less resources to the tech sector, whose aims are at leas...

I agree there is some weak public sentiment in this direction (with the fear of AI takeover being weaker). Privacy protections and redistribution don't particularly favor measures to avoid AI apocalypse.

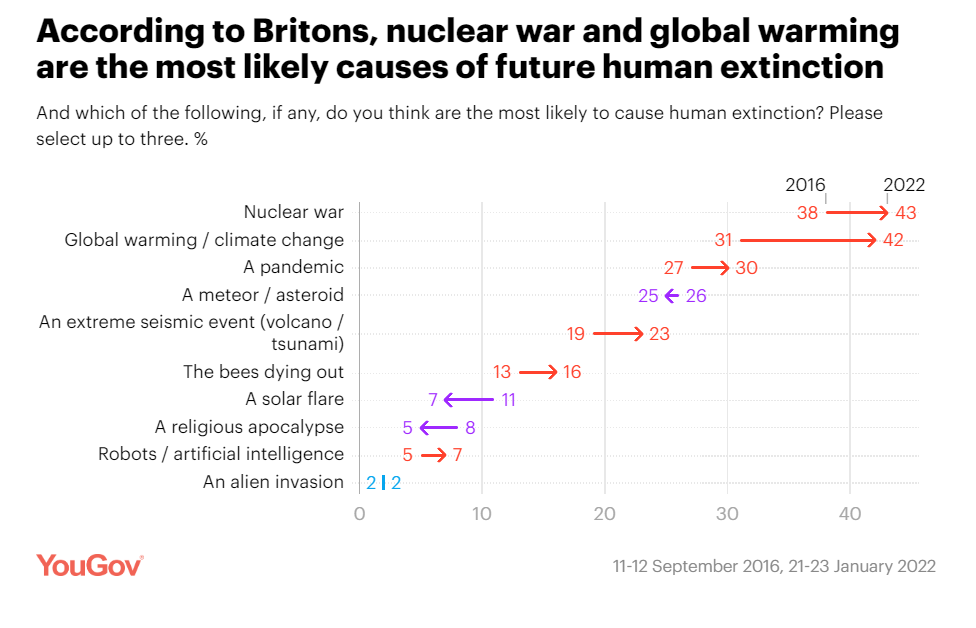

I'd also mention this YouGov survey:

But the sentiment looks weak compared to e.g. climate change and nuclear war, where fossil fuel production and nuclear arsenals continue, although there are significant policy actions taken in hopes of avoiding those problems. The sticking point is policymakers and the scientific community. At the end of the Obama administration the President asked scientific advisors what to make of Bostrom's Superintelligence, and concluded not to pay attention to it because it was not an immediate threat. If policymakers and their advisors and academia and the media think such public concerns are confused, wrongheaded, and not politically powerful they won't work to satisfy them against more pressing concerns like economic growth and national security. This is a lot worse than the situation for climate change, which is why it seems better regulation requires that the expert and elite debate play out differently, or the hope that later circumstances ...

> But the sentiment looks weak compared to e.g. climate change and nuclear war, where fossil fuel production and nuclear arsenals continue,

That seems correct to me, but on the other hand, I think the public sentiment against things like GMOs was also weaker than the one that we currently have against climate change, and GMOs got slowed down regardless. Also I'm not sure how strong the sentiment against nuclear power was relative to the one against climate change, but in any case, nuclear power got hindered quite a bit too.

I think one important aspect where fossil fuels are different from GMOs and nuclear power is that fossil fuel usage is firmly entrenched across the economy and it's difficult, costly, and slow to replace it. Whereas GMOs were a novel thing and governments could just decide to regulate them and slow them down without incurring major immediate costs. As for nuclear power, it was somewhat entrenched in that there were many existing plants, but society could make the choice to drastically reduce the progress of building new ones - which it did.

Nuclear arsenals don't quite fit this model - in principle, one could have stopped expanding them, but they did keep growi...

I'll shill here and say that Rethink Priorities is pretty good at running polls of the electorate if anyone wants to know what a representative sample of Americans think about a particular issue such as this one. No need to poll Uber drivers or Twitter when you can do the real thing!

I'd very much like to see this done with standard high-quality polling techniques, e.g. while airing counterarguments (like support for expensive programs that looks like a majority but collapses if the higher taxes needed to pay for them are mentioned). In particular, I'd like to see how the public would react given different views coming from computer scientists/government commissions/panels.

That makes a lot of sense. We can definitely test a lot of different framings. I think the problem with a lot of these kinds of problems is that they are low saliency, and thus people tend not to have opinions already, and thus they tend to generate an opinion on the spot. We have a lot of experience polling on low saliency issues though because we've done a lot of polling on animal farming policy which has similar framing effects.

I think I would have totally agreed in 2016. One update since then is that I think progress scales way less with resources than I used to think it did. In many historical cases, a core component of progress was driven by a small number of people (which is reflected in citation counts and in who is actually taught in textbooks), and introducing lots of funding and scaling too fast can disrupt that by increasing the amount of fake work.

$1B in safety well-spent is clearly more impactful than $1B less in semiconductors; it's just that "well-spent" is doing a lot of work. Someone with a lot of money is going to have lots of people trying to manipulate their information environment to take their stuff.

Reducing especially dangerous tech progress seems more promising than reducing tech broadly. However, since these are dual-use technologies, creating knowledge about which ones are dangerous can accelerate development in these sectors (especially the more vice signalling / conflict orientation there is). This suggests that perhaps an effective way to apply this strategy is to recruit especially productive researchers (identified using asymmetric info) to labs where they work on something less dangerous.

In gain of function research and nuclear research, progress requires large expensive laboratories; AI theory progress doesn't require that, although large scale training does (though, to a lesser extent than GOF or nuclear).

There are plenty of movements out there (ethics & inclusion, digital democracy, privacy, etc.) who are against current directions of AI developments, and they don’t need the AGI risk argument to be convinced that current corporate scale-up of AI models is harmful.

Working with them, redirecting AI developments away from more power-consolidating/general AI may not be that much harder than investing in supposedly “risk-mitigating” safety research.

Do you think there is a large risk of AI systems killing or subjugating humanity autonomously related to scale-up of AI models?

A movement pursuing antidiscrimination or privacy protections for applications of AI that thinks the risk of AI autonomously destroying humanity is nonsense seems like it will mainly demand things like the EU privacy regulations, not bans on using $10B of GPUs instead of $10M in a model. It also seems like it wouldn't pursue measures targeted at the kind of disaster it denies, and might actively discourage them (this sometimes happens already). With a threat model of privacy violations, restrictions on model size would be a huge lift, and the remedy wouldn't fit the diagnosis in a way that made sense to policymakers. So I wouldn't expect privacy advocates to bring them about based on their past track record, particularly in China, where privacy and digital democracy have not had great success.

If it in fact is true that there is a large risk of almost everyone alive today being killed or subjugated by AI, then establishing that as scientific consensus seems like it would supercharge a response dwarfing current efforts for things like privacy rules, which would ...

> A movement pursuing antidiscrimination or privacy protections for applications of AI that thinks the risk of AI autonomously destroying humanity is nonsense seems like it will mainly demand things like the EU privacy regulations, not bans on using $10B of GPUs instead of $10M in a model.

I can imagine there being movements that fit this description, in which case I would not focus on talking with them or talking about them.

But I have not been in touch with any movements matching this description. Perhaps you could share specific examples of actions from specific movements you have in mind?

For the movements I have in mind (and am talking with), the description does not match at all:

I agree that some specific leaders you cite have expressed distaste for model scaling, but it seems not to be a core concern. In a choice between more politically feasible measures that target concerns they believe are real vs concerns they believe are imaginary and bad, I don't think you get the latter. And I think arguments based on those concerns get traction on measures addressing the concerns, but less so on secondary wishlist items of leaders.

I think that's the reason privacy advocacy in legislation and the like hasn't focused on banning computers in the past (and would have failed if they tried). For example:

If privacy and data ownership movements take their own claims seriously (and some do), they would push for banning the training of ML models on human-generated data or any sensor-based surveillance that can be used to track humans' activities.

AGI working with AI generated data or data shared under the terms and conditions of web services can power the development of highly intelligent catastrophically dangerous systems, and preventing AI from reading published content doesn't seem close to the core motives there, especially for public support on privacy. So ...

This is like saying there's no value to learning about and stopping a nuclear attack from killing you because you might get absolutely no benefit from not being killed then, and being tipped off about a threat trying to kill you, because later the opponent might kill you with nanotechnology before you can prevent it.

Removing intentional deception or harm greatly increases the capability of AIs that can be worked with without getting killed, to further improve safety measures. And as I said actually being able to show a threat to skeptics is immensely better for all solutions, including relinquishment, than controversial speculation.

As requested by Remmelt I'll make some comments on the track record of privacy advocates, and their relevance to alignment.

I did some active privacy advocacy in the context of the early Internet in the 1990s, and have been following the field ever since. Overall, my assessment is that the privacy advocacy/digital civil rights community has had both failures and successes. It has not succeeded (yet) in its aim to stop large companies and governments from having all your data. On the other hand, it has been more successful in its policy advocacy towards limiting what large companies and governments are actually allowed to do with all that data.

The digital civil rights community has long promoted the idea that Internet based platforms and other computer systems must be designed and run in a way that is aligned with human values. In the context of AI and ML based computer systems, this has led to demands for AI fairness and transparency/explainability that have also found their way into policy like the GDPR, legislation in California, and the upcoming EU AI Act. AI fairness demands have influenced the course of AI research being done, e.g. there has been research on defining i...

> A movement pursuing antidiscrimination or privacy protections for applications of AI that thinks the risk of AI autonomously destroying humanity is nonsense seems like it will mainly demand things like the EU privacy regulations, not bans on using $10B of GPUs instead of $10M in a model.

This is a very spicy take, but I would (weakly) guess that a hypothetical ban on ML trainings that cost more than $10M would make AGI timelines marginally shorter rather than longer, via shifting attention and energy away from scaling and towards algorithm innovation.

Very interesting! Recently, the US started to regulate the export of computing power to China. Do you expect this to speed up AGI timelines in China, or do you expect the regulation to be ineffective, or something else?

Reportedly, NVIDIA developed the A800, which is just an A100, to keep the letter but probably not the spirit of the regulation. I am trying to follow closely how the A800 fares, because it seems to be an important data point on the feasibility of regulating computing power.

Most AI companies and most employees there seem not to buy risk much, and to assign virtually no resources to address those issues. Unilaterally holding back from highly profitable AI when they won't put a tiny portion of those profits into safety mitigation again looks like an ask out of line with their weak interest. Even at the few significant companies with higher percentages of safety effort, it still looks to me like the power-weighted average of staff is extremely into racing to the front, at least to near the brink of catastrophe or until governments buy risks enough to coordinate slowdown.

So asks like investing in research that could demonstrate problems with higher confidence, or making models available for safety testing, or similar still seem much easier to get from those companies than stopping (and they have reasonable concerns that their unilateral decision might make the situation worse by reducing their ability to do helpful things, while regulatory industry-wide action requires broad support).

As with government, generating evidence and arguments that are more compelling could be super valuable, but pretending you have more support than you do yields incorrect recommendations about what to try.

> looks to me like the power-weighted average of staff is extremely into racing to the front, at least to near the brink of catastrophe or until governments buy risks enough to coordinate slowdown.

Can anyone say confidently why? Is there one reason that predominates, or several? Like it's vaguely something about status, money, power, acquisitive mimesis, having a seat at the table... but these hypotheses are all weirdly dismissive of the epistemics of these high-powered people, so either we're talking about people who are high-powered because of the managerial revolution (or politics or something), or we're talking about researchers who are high-powered because they're given power because they're good at research. If it's the former, politics, then it makes sense to strongly doubt their epistemics on priors, but we have to ask, why can they meaningfully direct the researchers who are actually good at advancing capabilities? If it's the latter, good researchers have power, then why are their epistemics suddenly out the window here? I'm not saying their epistemics are actually good, I'm saying we have to understand why they're bad if we're going to slow down AI through this central route.

There are a lot of pretty credible arguments for them to try, especially with low risk estimates for AI disempowering humanity, and if their percentile of responsibility looks high within the industry.

One view is that the risk of AI turning against humanity is less than the risk of a nasty eternal CCP dictatorship if democracies relinquish AI unilaterally. You see this sort of argument made publicly by people like Eric Schmidt, and 'the real risk isn't AGI revolt, it's bad humans' is almost a reflexive take for many in online discussion of AI risk. That view can easily combine with the observation that there has been even less takeup of AI safety in China thus far than in liberal democracies, and mistrust of CCP decision-making and honesty, so it also reduces accident risk.

With respect to competition with other companies in democracies, some labs can correctly say that they have taken action that signals they are more into taking actions towards safety or altruistic values (including based on features like control by non-profit boards or % of staff working on alignment), and will have vastly more AI expertise, money, and other resources to promote those goals in the future by...

Thank you, this is a good post.

My main point of disagreement is that you point to successful coordination in things like not eating sand, or not wearing weird clothing. The upside of these things is limited, but you say the upside of superintelligence is also limited because it could kill us.

But rephrase the question to "Should we create an AI that's 1% better than the current best AI?" Most of the time this goes well - you get prettier artwork or better protein folding prediction, and it doesn't kill you. So there's strong upside to building slightly better AIs, as long as you don't cross the "kills everyone" level. Which nobody knows the location of. And which (LW conventional wisdom says) most people will be wrong about.

We successfully coordinate a halt to AI advancement at the first point where more than half of the relevant coordination power agrees that the next 1% step forward is in expectation bad rather than good. But "relevant" is a tough qualifier, because if 99 labs think it's bad, and one lab thinks it's good, then unless there's some centralizing force, the one lab can go ahead and take the step. So "half the relevant coordination power" has to include either every la...

I loved the link to the "Resisted Technological Temptations Project", for a bunch of examples of resisted/slowed technologies that are not "eating sand", and have an enormous upside: https://wiki.aiimpacts.org/doku.php?id=responses_to_ai:technological_inevitability:incentivized_technologies_not_pursued:start

I would tentatively add:

Katja, many thanks for writing this, and Oliver, thanks for this comment pointing out that everyday people are in fact worried about AI x-risk. Since around 2017 when I left MIRI to rejoin academia, I have been trying continually to point out that everyday people are able to easily understand the case for AI x-risk, and that it's incorrect to assume the existence of AI x-risk can only be understood by a very small and select group of people. My arguments have often been basically the same as yours here: in my case, informal conversations with Uber drivers, random academics, and people at random public social events. Plus, the argument is very simple: If things are smarter than us, they can outsmart us and cause us trouble. It's always seemed strange to say there's an "inferential gap" of substance here.

However, for some reason, the idea that people outside the LessWrong community might recognize the existence of AI x-risk — and therefore be worth coordinating with on the issue — has felt not only poorly received on LessWrong, but also fraught to even suggest. For instance, I tried to point it out in this previous post:

The question feels leading enough that I don't really know how to respond. Many of these sentences sound pretty crazy to me, so I feel like I primarily want to express frustration and confusion that you assign those sentences to me or "most of the LessWrong community".

However, for some reason, the idea that people outside the LessWrong community might recognize the existence of AI x-risk — and therefore be worth coordinating with on the issue — has felt not only poorly received on LessWrong, but also fraught to even suggest. For instance, I tried to point it out in this previous post:

I think John Wentworth's question is indeed the obvious question to ask. It does really seem like our prior should be that the world will not react particularly sanely here.

I also think it's really not true that coordination has been "fraught to even suggest". I think it's been suggested all the time, and certain coordination plans seem more promising than others. Like, even Eliezer was for a long time apparently thinking that Deepmind having a monopoly on AGI development was great and something to be protected, which very much involves coordinating with people outside of the LessWrong community.

T...

I think mostly I expect us to continue to overestimate the sanity and integrity of most of the world, then get fucked over like we got fucked over by OpenAI or FTX. I think there are ways of relating to the rest of the world that would be much better, but a naive update in the direction of "just trust other people more" would likely make things worse.

[...]

Again, I think the question you are raising is crucial, and I have giant warning flags about a bunch of the things that are going on (the foremost one is that it sure really is a time to reflect on your relation to the world when a very prominent member of your community just stole 8 billion dollars of innocent people's money and committed the largest fraud since Enron), [...]

I very much agree with the sentiment of the second paragraph.

Regarding the first paragraph, my own take is that (many) EAs and rationalists might be wise to trust themselves and their allies less.[1]

The main update of the FTX fiasco (and other events I'll describe later) I'd make is that perhaps many/most EAs and rationalists aren't very good at character judgment. They probably trust other EAs and rationalists too readily because they are part of...

Critch, I agree it’s easy for most people to understand the case for AI being risky. I think the core argument for concern—that it seems plausibly unsafe to build something far smarter than us—is simple and intuitive, and personally, that simple argument in fact motivates a plurality of my concern. That said:

However, for some reason, the idea that people outside the LessWrong community might recognize the existence of AI x-risk — and therefore be worth coordinating with on the issue — has felt not only poorly received on LessWrong, but also fraught to even suggest.

I object to this hyperbolic and unfair accusation. The entire AI Governance field is founded on this idea; this idea is not only fine to suggest, but completely uncontroversial accepted wisdom. That is, if by "this idea" you really mean literally what you said -- "people outside the LW community might recognize the existence of AI x-risk and be worth coordinating with on the issue." Come on.

I am frustrated by what appears to me to be constant straw-manning of those who disagree with you on these matters. Just because people disagree with you doesn't mean there's a sinister bias at play. I mean, there's usually all sorts of sinister biases at play on all sides of every dispute, but the way to cut through them isn't to go around slinging insults at each other about who might be biased, it's to stay on the object level and sort through the arguments.

It would help if you specified which subset of "the community" you're arguing against. I had a similar reaction to your comment as Daniel did, since in my circles (AI safety researchers in Berkeley), governance tends to be well-respected, and I'd be shocked to encounter the sentiment that working for OpenAI is a "betrayal of allegiance to 'the community'".

To be clear, I do think most people who have historically worked on "alignment" at OpenAI have probably caused great harm! And I do think I am broadly in favor of stronger community norms against working at AI capability companies, even in so-called "safety positions". So I do think there is something to the sentiment that Critch is describing.

That particular statement was very poorly received, with a 139-karma retort from John Wentworth arguing,

What exactly is the model by which some AI organization demonstrating AI capabilities will lead to world governments jointly preventing scary AI from being built, in a world which does not actually ban gain-of-function research?

I’m not sure what’s going on here

So, wait, what’s actually the answer to this question? I read that entire comment thread and didn’t find one. The question seems to me to be a good one!

The GoF analogy is quite weak.

As in my comment here, if you have a model that simultaneously both explains the fact that governments are funding GoF research right now, and predicts that governments would nevertheless react helpfully to AGI, I’m very interested to hear it. It seems to me that defunding GoF is a dramatically easier problem in practically every way.

The only responses I can think of right now are (1) “Basically nobody in or near government is working hard to defund GoF but people in or near government will be working hard to spur on a helpful response to AGI” (really? if so, what’s upstream of that supposed difference?) or (2) “It’s all very random—who happens to be in what position of power and when, etc.—and GoF is just one example, so we shouldn’t generalize too far from it” (OK maybe, but if so, then can we pile up more examples into a reference class to get a base rate or something? and what are the interventions to improve the odds, and can we also try those same interventions on GoF?)

I think it’s worth updating on the fact that the US government has already launched a massive, disruptive, costly, unprecedented policy of denying AI-training chips to China. I’m not aware of any similar-magnitude measure happening in the GoF domain.

IMO that should end the debate about whether the government will treat AI dev the way it has GoF - it already has moved it to a different reference class.

Some wild speculation on upstream attributes of advanced AI’s reference class that might explain the difference in the USG’s approach:

a perception of new AI as geoeconomically disruptive; that new AI has more obvious natsec-relevant use-cases than GoF; that powerful AI is more culturally salient than powerful bio (“evil robots are scarier than evil germs”).

Not all of these are cause for optimism re: a global ASI ban, but (by selection) they point to governments treating AI “seriously”.

I think it's uncharitable to psychoanalyze why people upvoted John's comment; his object-level point about GoF seems good and merits an upvote IMO. Really, I don't know what to make of GoF. It's not just that governments have failed to ban it, they haven't even stopped funding it, or in the USA case they stopped funding it and then restarted I think. My mental models can't explain that. Anyone on the street can immediately understand why GoF is dangerous. GoF is a threat to politicians and national security. GoF has no upsides that stand up to scrutiny, and has no politically-powerful advocates AFAIK. And we’re just getting over a pandemic which consumed an extraordinary amount of money, comfort, lives, and attention for the past couple years, and which was either a direct consequence of GoF research, or at the very least the kind of thing that GoF research could have led to. And yet, here we are, with governments funding GoF research right now. Again, I can’t explain this, and pending a detailed model that can, the best I can do right now is say “Gee I guess I should just be way more cynical about pretty much everything.”

Anyway, back to your post, if Option 1 is unilateral pivotal...

Survey about this question (I have a hypothesis, but I don't want to say what it is yet): https://forms.gle/1R74tPc7kUgqwd3GA

'Nuclear power' seems to me like a weird example because we selectively halted the development of productive use of nuclear power while having comparatively little standing in the way of development of destructive use of nuclear power. If a similar story holds, then we'll still see militarily relevant AIs (deliberately doing adversarial planning of the sort that could lead to human extinction) while not getting many of the benefits along the way.

That... doesn't seem like much of a coordination success story, to me.

Yes, because the standards for success for nuclear are much lower than they are for AI. Not only did 5 states acquire weapons before the treaty was signed, around four have acquired them since, and this didn't stop the arms race accumulation of thousands of weapons. This turned out to be enough (so far).

In worlds where nukes ignite the atmosphere the first time you use them, there would have been a different standard of coordination necessary to count as 'success'. (Or in worlds where we counted the non-signatory states, many of which have nuclear weapons, as failures.)

The main concrete proposals / ideas that are mentioned here or I can think of are:

This was counter to the prevailing narrative at the time, and I think did some of the work of changing the narrative. It's of historical significance, if nothing else.

you are underestimating the degree of unilateralist's curse driving ai progress by quite a bit. it looks like scaling is what does it, but that isn't actually true, the biggest-deal capabilities improvements come from basic algorithms research that is somewhat serially bottlenecked until we improve on a scaling law, and then the scaling is what makes a difference. progress towards dethroning google by strengthening underlying algorithms until they work on individual machines has been swift, and the next generations of advanced basic algorithms are already here, eg https://github.com/BlinkDL/RWKV-LM - most likely, a single 3090 can train a GPT3 level model in a practical amount of time. the illusion that only large agents can train ai is a falsehood resulting from how much easier the current generation of models are to train than previous ones, such that simply scaling them up works at all. but it is incredibly inefficient - transformers are a bad architecture, and the next things after them are shockingly stronger. if your safety plan doesn't take this into account, it won't work.

that said - there's no reason to think we're doomed just because we can't slow down. we need simply speed up safety until it has caught up with the leading edge of capabilities. safety should always be thinking first about how to make the very strongest model's architecture fit in with existing attempts at safety, and safety should take care not to overfit on individual model architectures.

> there's no reason to think we're doomed just because we can't slow down. we need simply speed up safety until it has caught up with the leading edge of capabilities.

I want to emphasize this; if doubling the speed of safety is cheaper than halving the rate of progress, and you have limited resources, then you always pick doubling the speed of safety in that scenario.

In the current scenario on earth, trying to slow the rate of progress makes you an enemy of the entire AI industry, whereas increasing the rate of safety does not. Therefore, increasing the rate of safety is the default strategy, because it's the option that won't get you an enemy of a powerful military-adjacent industry (which already has/had other enemies, and plenty of experience building its own strategies for dealing with them).

I want to emphasize this; if doubling the speed of safety is cheaper than halving the rate of progress, and you have limited resources, then you always pick doubling the speed of safety in that scenario.

Sure.

In the current scenario on earth, trying to slow the rate of progress makes you an enemy of the entire AI industry, whereas increasing the rate of safety does not. Therefore, increasing the rate of safety is the default strategy, because it's the option that won't get you an enemy of a powerful military-adjacent industry (which already has/had other enemies, and plenty of experience building its own strategies for dealing with them).

You're going to have to be less vague in order for me to take you seriously. I understand that you apparently have private information, but I genuinely can't figure out what you'd have me believe the CIA is constantly doing to people who oppose its charter in extraordinarily indirect ways like this. If I organize a bunch of protests outside DeepMind headquarters, is the IC going to have me arrested? Stasi-like gaslighting? Pay a bunch of NYT reporters to write mean articles about me and my friends?

My experience in talking about slowing down AI with people is that they seem to have a can’t do attitude. They don’t want it to be a reasonable course: they want to write it off.

There are a lot of really good reasons why someone would avoid touching the concept of "suppressing AI research" with a ten-foot pole; depending on who they are and where they work, it's tantamount to advocating for treason. Literal treason, with some people. It's the kind of thing where merely associating with people who advocate for it can cost you your job, and certainly promotions.

A lot of this is considered infohazardous, and frankly, I've already said too much here. But it's very legitimate, and even sensible, to have very, very strong and unquestioned misgivings about large numbers of people they're associated with being persuaded to do something as radical as playing a zero-sum game against the entire AI industry.

I think it's a bit hard to tell how influential this post has been, though my best guess is "very". It's clear that sometime around when this post was published there was a pretty large shift in the strategies that I and a lot of other people pursued, with "slowing down AI" becoming a much more common goal for people to pursue.

I think (most of) the arguments in this post are good. I also think that when I read an initial draft of this post (around 1.5 years ago or so), and had a very hesitant reaction to the core strategy it proposes, that I was picking up on something important, and that I do also want to award Bayes points to that part of me given how things have been playing out so far.

I do think that since I've seen people around me adopt strategies to slow down AI, I've seen it done on a basis that feels much more rhetorical, and often directly violates virtues and perspectives that I hold very dearly. I think it's really important to understand that technological progress has been the central driving force behind humanity's success, and that indeed this should establish a huge prior against stopping almost any kind of technological development.

In contrast to that, the m...

There are things that are robustly good in the world, and things that are good on highly specific inside-view models and terrible if those models are wrong. Slowing dangerous tech development seems like the former, whereas forwarding arms races for dangerous tech between world superpowers seems more like the latter.

It may seem the opposite to some people. For instance, my impression is that for many adjacent to the US government, "being ahead of China in every technology" would be widely considered robustly good, and nobody would question you at all if you said that was robustly good. Under this perspective the idea that AI could pose an existential risk is a "highly specific inside-view model" and it would be terrible if we acted on the model and it is wrong.

I don't think your readers will mostly think this, but I actually think a lot of people would, which for me makes this particular argument seem entirely subjective and thus suspect.

Thanks for writing!

I want to push back a bit on the framing used here. Instead of the framing "slowing down AI," another framing we could use is, "lay the groundwork for slowing down in the future, when extra time is most needed." I prefer this latter framing/emphasis because:

I’ve copied over and lightly edited some comments I left on a draft. Note I haven’t reread the post in detail; sorry if these were addressed somewhere.

Writing down quick thoughts after reading the intro and before reading the rest:

I have two major reasons to be skeptical of actively slowing down AI (setting aside feasibility):

1. It makes it easier for a future misaligned AI to take over by increasing overhangs, both via compute progress and algorithmic efficiency progress. (This is basically the same sort of argument as "Every 18 months, the minimum IQ necessary to destroy the world drops by one point.")

2. Such strategies are likely to disproportionately penalize safety-conscious actors.

(As a concrete example of (2), if you build public support, maybe the public calls for compute restrictions on AGI companies and this ends up binding the companies with AGI safety teams but not the various AI companies that are skeptical of “AGI” and “AI x-risk” and say they are just building powerful AI tools without calling it AGI.)

For me personally there's a third reason, which is that (to first approximation) I have a limited amount of resources and it seems better to spend that on the "use good...

The conversation near me over the years has felt a bit like this:

Some people: AI might kill everyone. We should design a godlike super-AI of perfect goodness to prevent that.

Others: wow that sounds extremely ambitious

Some people: yeah but it’s very important and also we are extremely smart so idk it could work

[Work on it for a decade and a half]

Some people: ok that’s pretty hard, we give up

Others: oh huh shouldn’t we maybe try to stop the building of this dangerous AI?

Some people: hmm, that would involve coordinating numerous people—we may be arrogant enough to think that we might build a god-machine that can take over the world and remake it as a paradise, but we aren’t delusional

There's a sleight of hand going on with the "we" here. "We" as in LessWrong are not building the godlike AI, the trillion dollar technocapitalist machine is doing that. "We" are a bunch of nerds off to the side, some of whom are researching ways to point said AIs at specific targets. If "we" tried to start an AGI company we'd indeed end up in fifth place and speed up timelines by six weeks (generously).

I think there's a major internal tension in the picture you present (though the tension is only there with further assumptions). You write:

Obstruction doesn’t need discernment

[...]

I don’t buy it. If all you want is to slow down a broad area of activity, my guess is that ignorant regulations do just fine at that every day (usually unintentionally). In particular, my impression is that if you mess up regulating things, a usual outcome is that many things are randomly slower than hoped. If you wanted to speed a specific thing up, that’s a very different story, and might require understanding the thing in question. The same goes for social opposition. Nobody need understand the details of how genetic engineering works for its ascendancy to be seriously impaired by people not liking it. Maybe by their lights it still isn’t optimally undermined yet, but just not liking anything in the vicinity does go a long way.

And you write:

...Technological choice is not luddism

Some technologies are better than others [citation not needed]. The best pro-technology visions should disproportionately involve awesome technologies and avoid shitty technologies, I claim. If you think AGI is highly likely to dest

Curated. I am broadly skeptical of existing "coordination"-flavored efforts, but this post prompted several thoughts:

I think this post does a good job of highlighting representative objections to various proposed strategies and then demonstrating why those objections should not be considered decisive (or even relevant). It is true that we will not solve the problem of AI killing everyone by slowing it down, but that does not mean we should give up on trying to find +EV strategies for slowing it down, since a successful slowdown, all else equal, is good.

The world has a lot of experience slowing down technological progress already, no need to invent new ways.

Fusion research slowed to a crawl in the 1970s and so we don't have fusion power. Electric cars have been delayed by a century. IRB is successful at preventing many promising avenues. The FDA/CDC killed most of the novel drug and pandemic prevention research. The space industry is only now catching up to where it was 50 years ago.

Basically, stigma/cost/red tape reliably and provably does the trick.

As a datapoint, I remember briefly talking with Eliezer in July 2021, where I said "If only we could make it really cringe to do capabilities/gain-of-function work..." (I don't remember which one I said). To which, I think he replied "That's not how human psychology works."

I now disagree with this response. I think it's less "human psychology" and more "our current sociocultural environment around these specific areas of research." EG genetically engineering humans seems like a thing which, in some alternate branches, is considered "cool" and "exciting", while being cringe in our branch. It doesn't seem like a predestined fact of human psychology that that field had to end up being considered cringe.

It seems very odd to have a discussion of arms race dynamics that is a purely theoretical exploration of possible payoff matrices, and does not include a historically informed discussion of what seems like the obviously most analogous case, namely nuclear weapons research during the Second World War.

US nuclear researchers famously (IIRC, pls correct me if wrong!) thought there was a nontrivial chance their research would lead to human extinction, not just because nuclear war might do so but because e.g. a nuclear test explosion might ignite the atmosphere. T...

The world has actually coordinated on some impressive things, e.g. nuclear non-proliferation

Given the success of North Korea, I am both impressed by the world's coordination on nuclear weapons and depressed that even this impressive coordination is not enough. I feel similarly about the topic that nuclear weapons are a metaphor for.

Continuing to think about the metaphor: in terms of existential risk, North Korean nuclear weapon is probably less damaging than United States missile defense, due to reasons related to second strike. It is probable people feel bad about...

I suspect that part of what is going on is that many in the AI safety community are inexperienced with and uncomfortable with politics and have highly negative views about government capabilities.

Another potential (and related) issue is that people in the AI safety community think that their comparative advantage doesn't lie in political action (which is likely true) and therefore believe they are better off pursuing their comparative advantage (which is likely false).

Your own examples of technologies that aren't currently pursued but have huge upsides are a strong case against this proposition. These lines of research have some risks, but if there was sufficient funding and coordination, they could be tremendously valuable. Yet the status quo is to simply ban them without investing much at all in building a safe infrastructure to pursue them.

If you should succeed in achieving the political will needed to "slow down tech," it will come from idiots, fundamentalists, people with useless jobs, etc. It will not be a coaliti...

Someone used CRISPR on babies in China

Nitpick: the CRISPR was on the embryos, not the babies.

Although I don't think what He Jiankui did was scientifically justified (the benefit of HIV resistance wasn't worth the risk – he should have chosen PCSK9 instead of CCR5), I think the current norms against human genetic enhancement really are stifling a lot of progress.

(1) The framing of all this as military technology (and the DoD is the single largest purchasing agent on earth) reminds me of nuclear power development. Molten Salt reactors and Pebble Bed reactors are both old tech which would have created the dream of safe, small-scale nuclear power. However, in addition to not melting down and working at relatively small scales, they also don't make weapons-usable materials. Thus they were shunted in favor of the kinds of reactors we mostly have now. In an alternative past without the cold war driving us to make ne...

Thank you for writing this post! I agree completely, which is perhaps unsurprising given my position stated back in 2020. Essentially, I think we should apply the precautionary principle for existentially risky technologies: do not build unless safety is proven.

A few words on where that position has brought me since then.

First, I concluded back then that there was little support for this position in rationalist or EA circles. I concluded as you did, that this had mostly to do with what people wanted (subjective techno-futurist desires), and less with what ...

Broadly agree, but: I think we're very confused about the social situation. (Again, I agree this argues to work on deconfusing, not to give up!) For example, one interpretation of the propositions claimed in this thread

https://twitter.com/soniajoseph_/status/1597735163936768000

if they are true, is that AI being dangerous is more powerful in terms of moving money and problem-solving juice as a recruitment tool rather than a dissuasion tool. I.e. in certain contexts, it's beneficial towards the goal of getting funding to include in your pitch "th...

This post seems like it was quite influential. This is basically a trivial review to allow the post to be voted on.

I see one critical flaw here.

Why does anyone assume ANY progress will be made on alignment if we don't have potentially dangerous AGIs in existence to experiment with?

A second issue is that at least the current model for ChatGPT REQUIRES human feedback to get smarter, and the greater the scale of the userbase, the smarter it can potentially become.

Other systems designed to scale to AGI may have to be trained this way: initial training from test environments and static human text, but refinement from interaction with live humans, where the company with the most ...

we have lots of ideas how to do AGI control mechanisms and no doubt some of them do work

I think AGI safety is in a worse place than you do.

It seems that you think that we already have at least one plan for Safe & Beneficial AGI that has no problems that are foreseeable at this point, they’ve been red-teamed to death and emerged unscathed with the information available, and we’re not going to get any further until we’re deeper into the implementation.

Whereas I think that we have zero plans for which we can say “given what we know now, we have strong reason to believe that successfully implementing / following this plan would give us Safe & Beneficial AGI”.

I also think that, just because you have code that reliably trains a deceptive power-seeking AGI, sitting right in front of you and available to test, doesn’t mean that you know how to write code that reliably trains a non-deceptive corrigible AGI. Especially when one of the problems we’re trying to solve right now is the issue that it seems very hard to know whether an AGI is deceptive / corrigible / etc.

Maybe the analogy for me would be that Fermi has a vague idea “What if we use a rod made of neutron-absorbing material?”...

One big obstacle you didn't mention: you can make porn with that thing. It's too late to stop it.

More seriously, I think this cat may already be out of the bag. Even if the scientific community and the american military-industrial complex and the chinese military-industrial complex agreed to stop AI research, existing models and techniques are already widely available on the internet.

Even if there is no official AI lab anywhere doing AI research, you will still have internet communities pooling compute together for their own research projects (especially i...

I'm not convinced this line of thinking works from the perspective of the structure of the international system. For example, not once are international security concerns mentioned in this post.

My post here draws out some fundamental flaws in this thinking:

https://www.lesswrong.com/posts/dKFRinvMAHwvRmnzb/issues-with-uneven-ai-resource-distribution

I very strongly agree with this post. Thank you very much for writing it!

I think to reach a general agreement on not doing certain stupid things, we need to better understand and define what exactly those things are that we shouldn't do. For example, instead of talking about slowing down the development of AGI, which is a quite fuzzy term, we could talk about preventing uncontrollable AI. Superintelligent self-improving AGI would very likely be uncontrollable, but there could be lesser forms of AI that could be uncontrollable, and thus very dangerous, as w...

Bravo! I especially agree wrt people giving too many galaxy brain takes for why current ML labs speeding along is good.

I believe this post is one of the best to grace the front page of the LW forum this year. It provides a reasoned counterargument to prevailing wisdom in an accessible way, and has the potential to significantly update views. I would strongly support pinning this post to the front page to increase critical engagement.

Thank you for calling attention to this. It always seems uncontroversial that some things could speed up AGI timelines, yet it is assumed that very little can be done to slow them down. The actual hard part is figuring out what could, in practice, slow down timelines with certainty.

Finding ways to slow down timelines is exactly why I wrote this post on Foresight for AGI Safety Strategy: Mitigating Risks and Identifying Golden Opportunities.

...

- AI is pretty safe: unaligned AGI has a mere 7% chance of causing doom, plus a further 7% chance of causing short term lock-in of something mediocre

- Your opponent risks bad lock-in: If there’s a ‘lock-in’ of something mediocre, your opponent has a 5% chance of locking in something actively terrible, whereas you’ll always pick good mediocre lock-in world (and mediocre lock-ins are either 5% as good as utopia, or -5% as good)

- Your opponent risks messing up utopia: In the event of aligned AGI, you will reliably achieve the best outcome, whereas your opponent has a

So, a number of issues stand out to me, some have been noted by others already, but:

My impression is that there are also less endorsable or less altruistic or more silly motives floating around for this attention allocation.

A lot of this list looks to me like the sort of heuristics where, societies that don't follow them inevitably crash, burn and become awful. A list of famous questions where the obvious answer is horribly wrong, and there's a long list of groups who came to the obvious conclusion and became awful, and it's become accepted wisdom to not d...

I could be wrong, but I’d guess convincing the ten most relevant leaders of AI labs that this is a massive deal, worth prioritizing, actually gets you a decent slow-down. I don’t have much evidence for this.

…

Delay is probably finite by default

Convincing OpenAI, Anthropic, and Google seems moderately hard - they've all clearly already considered the matter carefully, and it's fairly apparent from their actions what conclusions they've reached about pausing now. Then convincing Meta, Mistral, Cohere, and the next dozen-or-so would-be OpenAI replacement...

"I arrogantly think I could write a broadly compelling and accessible case for AI risk"

Please do so. Your current essay is very good, so chances are your "arrogant" thought is correct.

Edit: I think this is too pessimistic about human nature, but maybe we should think about this more before publishing a "broadly compelling and accessible case for AI risk".

Thank you for writing this. On your section 'Obstruction doesn't need discernment' - see also this post that went up on LW a while back called The Regulatory Option: A response to near 0% survival odds. I thought it was an excellent post, and it didn't get anywhere near the attention it deserved, in my view.

People have been writing stories about the dangers of artificial intelligences arguably since Ancient Greek times (Hephaistos built artificial people, including Pandora), certainly since Frankenstein. There are dozens of SF movies on the theme (and in the Hollywood ones, the hero always wins, of course). Artificial intelligence trying to take over the world isn't a new idea; by scriptwriter standards it's a tired trope. Getting AI as tightly controlled as nuclear power or genetic engineering would not, politically, be that hard -- it might take a decade or t...

Very useful post and discussion! Let's ignore the issue that someone in capabilities research might be underestimating the risk and assume they have appropriately assessed the risk. Let's also simplify to two outcomes of bliss expanding in our lightcone and extinction (no value). Let's also assume that very low values of risk are possible but we have to wait a long time. It would be very interesting to me to hear how low different people (maybe with a poll) would want the probability of extinction to be before activating the AGI. Below are my super rough...

Chiming in on toy models of research incentives: Seems to me like a key feature is that you start with an Arms Race that, after some amount of capabilities accumulates, transitions to the Suicide Race. But players have only vague estimates of where that threshold is, have widely varying estimates, and may not be able to communicate estimates effectively or persuasively. Players have a strong incentive to push right up to the line where things get obviously (to them) dangerous, and with enough players, somebody's estimate is going to be wro...
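A rough way to see the "enough players" point is the sketch below. It assumes (purely for illustration, none of this is from the comment) that each player independently overshoots the true threshold with the same probability `p_individual`, so the chance that at least one of `n_players` overshoots is 1 - (1 - p)^n. The function name and the 5% figure are hypothetical.

```python
def p_someone_overshoots(n_players: int, p_individual: float) -> float:
    """Chance that at least one of n independent players overestimates
    where the dangerous threshold is (illustrative assumption: every
    player errs independently with the same probability)."""
    if n_players < 0:
        raise ValueError("n_players must be non-negative")
    if not 0.0 <= p_individual <= 1.0:
        raise ValueError("p_individual must be a probability")
    # Complement of "every player's estimate is safe".
    return 1.0 - (1.0 - p_individual) ** n_players


if __name__ == "__main__":
    # Hypothetical 5% per-player error rate, varying the number of players.
    for n in (1, 5, 20, 50):
        print(n, round(p_someone_overshoots(n, 0.05), 3))
```

Under these assumptions the collective risk climbs quickly with the number of players even when each one is individually fairly careful. Correlated estimates would change the numbers but not the direction.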

I'd like to ask a few questions about slowing down AGI as they may turn out to be cruxes for me.

How popular/unpopular is AI slowdown? Ideally, we'd get AI slowdown/AI progress/Neutral as choices in a poll. I also ideally would like different framings of the problem, to test how well frames affect people's choices. But I do want at least a poll on how popular/unpopular AI slowdown is.

How much does the government want AI to be slowed down? Is Trevor's story about the US government not willing to countenance AI slowdown correct, and instead speed it up

I am interested in getting feedback on whether it seems worthwhile to advocate for better governance mechanisms (like prediction markets) in the hopes that this might help civilization build common knowledge about AI risk more quickly, or might help civilization do a more "adequate" job of slowing AI progress by restricting unauthorized access to compute resources. Is this a good cause for me to work on, or is it too indirect and it would be better to try and convince people about AI risk directly? See a more detailed comment here: https://www.lesswrong...

But empirically, the world doesn’t pursue every technology—it barely pursues any technologies.

While it's true that not all technologies are pursued, history shows that humans have actively sought out transformative technologies, such as fire, swords, longbows, and nuclear energy. The driving factors have often been novelty and utility. I expect the same will happen with AI.

Very nice exploration of this idea. But I don't see in the main post, and couldn't find by simple search in the comments, any mention of my biggest concern about this notion of an AI development slowdown (or complete halt, as EY recently called for in Time):

Years of delay would put a significant kink in the smooth exponential progress charts of e.g. Ray Kurzweil. Which is a big deal, in my opinion, because:

(1) They're not recognised as laws of nature. But these trends, across so many technologies and the entire history of complexity, in the unive...

So, a couple general thoughts.

-In the 80s/90s we were obsessing over potential atom-splitting devastation and the resulting nuclear winter. Before that there were plagues wiping out huge swaths of humanity.

-Once a technology genie is out of the bottle you can't stop it. Human nature.

-In spite of the 'miracles' of science there's A LOT humanity does not know. "There are known unknowns and unknown unknowns" D.R.

-In a few billion years our sun will extinguish and all remaining life on space rock Earth will perish. All material concerns are t...

Averting doom by not building the doom machine

If you fear that someone will build a machine that will seize control of the world and annihilate humanity, then one kind of response is to try to build further machines that will seize control of the world even earlier without destroying it, forestalling the ruinous machine’s conquest. An alternative or complementary kind of response is to try to avert such machines being built at all, at least while the degree of their apocalyptic tendencies is ambiguous.

The latter approach seems to me like the kind of basic and obvious thing worthy of at least consideration, and also in its favor, fits nicely in the genre ‘stuff that it isn’t that hard to imagine happening in the real world’. Yet my impression is that for people worried about extinction risk from artificial intelligence, strategies under the heading ‘actively slow down AI progress’ have historically been dismissed and ignored (though ‘don’t actively speed up AI progress’ is popular).

The conversation near me over the years has felt a bit like this:

This seems like an error to me. (And lately, to a bunch of other people.)

I don’t have a strong view on whether anything in the space of ‘try to slow down some AI research’ should be done. But I think a) the naive first-pass guess should be a strong ‘probably’, and b) a decent amount of thinking should happen before writing off everything in this large space of interventions. Whereas customarily the tentative answer seems to be, ‘of course not’ and then the topic seems to be avoided for further thinking. (At least in my experience—the AI safety community is large, and for most things I say here, different experiences are probably had in different bits of it.)

Maybe my strongest view is that one shouldn’t apply such different standards of ambition to these different classes of intervention. Like: yes, there appear to be substantial difficulties in slowing down AI progress to good effect. But in technical alignment, mountainous challenges are met with enthusiasm for mountainous efforts. And it is very non-obvious that the scale of difficulty here is much larger than that involved in designing acceptably safe versions of machines capable of taking over the world before anyone else in the world designs dangerous versions.

I’ve been talking about this with people over the past many months, and have accumulated an abundance of reasons for not trying to slow down AI, most of which I’d like to argue about at least a bit. My impression is that arguing in real life has coincided with people moving toward my views.

Quick clarifications

First, to fend off misunderstanding—

- reducing the speed at which AI progress is made in general, e.g. as would occur if general funding for AI declined.

- shifting AI efforts from work leading more directly to risky outcomes to other work, e.g. as might occur if there was broadscale concern about very large AI models, and people and funding moved to other projects.

- Halting categories of work until strong confidence in its safety is possible, e.g. as would occur if AI researchers agreed that certain systems posed catastrophic risks and should not be developed until they did not. (This might mean a permanent end to some systems, if they were intrinsically unsafe.)

(So in particular, I’m including both actions whose direct aim is slowness in general, and actions whose aim is requiring safety before specific developments, which implies slower progress.)

Why not slow down AI? Why not consider it?

Ok, so if we tentatively suppose that this topic is worth even thinking about, what do we think? Is slowing down AI a good idea at all? Are there great reasons for dismissing it?

Scott Alexander wrote a post a little while back raising reasons to dislike the idea, roughly:

Other opinions I’ve heard, some of which I’ll address:

My impression is that there are also less endorsable or less altruistic or more silly motives floating around for this attention allocation. Some things that have come up at least once in talking to people about this, or that seem to be going on:

(Illustration from a co-founder of modern computational reinforcement learning: )

I’m not sure if any of this fully resolves why AI safety people haven’t thought about slowing down AI more, or whether people should try to do it. But my sense is that many of the above reasons are at least somewhat wrong, and motives somewhat misguided, so I want to argue about a lot of them in turn, including both arguments and vague motivational themes.

The mundanity of the proposal

Restraint is not radical

There seems to be a common thought that technology is a kind of inevitable path along which the world must tread, and that trying to slow down or avoid any part of it would be both futile and extreme.2

But empirically, the world doesn’t pursue every technology—it barely pursues any technologies.

Sucky technologies

For a start, there are many machines that there is no pressure to make, because they have no value. Consider a machine that sprays shit in your eyes. We can technologically do that, but probably nobody has ever built that machine.

This might seem like a stupid example, because no serious ‘technology is inevitable’ conjecture is going to claim that totally pointless technologies are inevitable. But if you are sufficiently pessimistic about AI, I think this is the right comparison: if there are kinds of AI that would cause huge net costs to their creators if created, according to our best understanding, then they are at least as useless to make as the ‘spray shit in your eyes’ machine. We might accidentally make them due to error, but there is not some deep economic force pulling us to make them. If unaligned superintelligence destroys the world with high probability when you ask it to do a thing, then this is the category it is in, and it is not strange for its designs to just rot in the scrap-heap, with the machine that sprays shit in your eyes and the machine that spreads caviar on roads.

Ok, but maybe the relevant actors are very committed to being wrong about whether unaligned superintelligence would be a great thing to deploy. Or maybe you think the situation is less immediately dire and building existentially risky AI really would be good for the people making decisions (e.g. because the costs won’t arrive for a while, and the people care a lot about a shot at scientific success relative to a chunk of the future). If the apparent economic incentives are large, are technologies unavoidable?

Extremely valuable technologies

It doesn’t look like it to me. Here are a few technologies which I’d guess have substantial economic value, where research progress or uptake appears to be drastically slower than it could be, for reasons of concern about safety or ethics3:

It seems to me that intentionally slowing down progress in technologies to give time for even probably-excessive caution is commonplace. (And this is just looking at things slowed down over caution or ethics specifically—probably there are also other reasons things get slowed down.)

Furthermore, among valuable technologies that nobody is especially trying to slow down, it seems common enough for progress to be massively slowed by relatively minor obstacles, which is further evidence for a lack of overpowering strength of the economic forces at play. For instance, Fleming first took notice of mold’s effect on bacteria in 1928, but nobody took a serious, high-effort shot at developing it as a drug until 1939.[4] Furthermore, in the thousands of years preceding these events, various people noticed numerous times that mold, other fungi or plants inhibited bacterial growth, but didn’t exploit this observation even enough for it not to be considered a new discovery in the 1920s. Meanwhile, people dying of infection was quite a thing. In 1930 about 300,000 Americans died of bacterial illnesses per year (around 250/100k).

My guess is that people make real choices about technology, and they do so in the face of economic forces that are feebler than commonly thought.

Restraint is not terrorism, usually

I think people have historically imagined weird things when they think of ‘slowing down AI’. I posit that their central image is sometimes terrorism (which understandably they don’t want to think about for very long), and sometimes some sort of implausibly utopian global agreement.

Here are some other things that ‘slow down AI capabilities’ could look like (where the best positioned person to carry out each one differs, but if you are not that person, you could e.g. talk to someone who is):

Coordination is not miraculous world government, usually

The common image of coordination seems to be explicit, centralized, involving every party in the world, and something like cooperating on a prisoners’ dilemma: incentives push every rational party toward defection at all times, yet maybe through deontological virtues or sophisticated decision theories or strong international treaties, everyone manages to not defect for enough teetering moments to find another solution.

That is a possible way coordination could be. (And I think one that shouldn’t be seen as so hopeless—the world has actually coordinated on some impressive things, e.g. nuclear non-proliferation.) But if what you want is for lots of people to coincide in doing one thing when they might have done another, then there are quite a few ways of achieving that.

Consider some other case studies of coordinated behavior:

These are all cases of very broadscale coordination of behavior, none of which involve prisoners’ dilemma type situations, or people making explicit agreements which they then have an incentive to break. They do not involve centralized organization of huge multilateral agreements. Coordinated behavior can come from everyone individually wanting to make a certain choice for correlated reasons, or from people wanting to do things that those around them are doing, or from distributed behavioral dynamics such as punishment of violations, or from collaboration in thinking about a topic.

You might think they are weird examples that aren’t very related to AI. I think, a) it’s important to remember the plethora of weird dynamics that actually arise in human group behavior and not get carried away theorizing about AI in a world drained of everything but prisoners’ dilemmas and binding commitments, and b) the above are actually all potentially relevant dynamics here.

If AI in fact poses a large existential risk within our lifetimes, such that it is net bad for any particular individual, then the situation in theory looks a lot like that in the ‘avoiding eating sand’ case. It’s an option that a rational person wouldn’t want to take if they were just alone and not facing any kind of multi-agent situation. If AI is that dangerous, then not taking this inferior option could largely come from a coordination mechanism as simple as distribution of good information. (You still need to deal with irrational people and people with unusual values.)

But even failing coordinated caution from ubiquitous insight into the situation, other models might work. For instance, if there came to be somewhat widespread concern that AI research is bad, that might substantially lessen participation in it, beyond the set of people who are concerned, via mechanisms similar to those described above. Or it might give rise to a wide crop of local regulation, enforcing whatever behavior is deemed acceptable. Such regulation need not be centrally organized across the world to serve the purpose of coordinating the world, as long as it grew up in different places similarly. Which might happen because different locales have similar interests (all rational governments should be similarly concerned about losing power to automated power-seeking systems with unverifiable goals), or because—as with individuals—there are social dynamics which support norms arising in a non-centralized way.

The arms race model and its alternatives

Ok, maybe in principle you might hope to coordinate to not do self-destructive things, but realistically, if the US tries to slow down, won’t China or Facebook or someone less cautious take over the world?

Let’s be more careful about the game we are playing, game-theoretically speaking.

The arms race

What is an arms race, game theoretically? It’s an iterated prisoners’ dilemma, seems to me. Each round looks something like this:

In this example, building weapons costs one unit. If anyone ends the round with more weapons than the other player, they take all of the other player’s stuff (ten units).

In a single round of the game it’s always better to build weapons than not (assuming your actions are devoid of implications about your opponent’s actions). And it’s always better to get the hell out of this game.
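As a rough sketch of the round described above, the payoffs can be written out and the dominance claim checked directly. The function and constant names here are illustrative assumptions, not anything from the original model; the only inputs taken from the text are the build cost of one unit and the ten units of stuff at stake.

```python
# One round of the two-player arms race described above.
# Names are hypothetical; the numbers (cost 1, stakes 10) come from the text.
BUILD_COST = 1   # cost of building weapons
STAKES = 10      # value of the stuff taken by whoever has more weapons
MOVES = ("pass", "build")

def arms_race_payoff(me: str, them: str) -> int:
    """Payoff to `me` for one round, given both players' moves."""
    payoff = -BUILD_COST if me == "build" else 0
    if me == "build" and them == "pass":
        payoff += STAKES   # I end with more weapons and take their stuff
    elif me == "pass" and them == "build":
        payoff -= STAKES   # they end with more weapons and take mine
    return payoff

def strictly_dominant(move: str) -> bool:
    """True if `move` beats every alternative against every opposing move."""
    return all(
        arms_race_payoff(move, them) > arms_race_payoff(other, them)
        for them in MOVES
        for other in MOVES
        if other != move
    )

for me in MOVES:
    # Each row lists my payoff against (pass, build) by the other player.
    print(me, [arms_race_payoff(me, them) for them in MOVES])
print("build strictly dominant:", strictly_dominant("build"))
```

Under these assumptions building is better whatever the other player does (9 versus 0, or -1 versus -10), yet mutual building leaves both at -1 rather than 0, which is why getting out of the game is better still.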

This is not much like what the current AI situation looks like, if you think AI poses a substantial risk of destroying the world.

The suicide race

A closer model: as above except if anyone chooses to build, everything is destroyed (everyone loses all their stuff—ten units of value—as well as one unit if they built).

This is importantly different from the classic ‘arms race’ in that pressing the ‘everyone loses now’ button isn’t an equilibrium strategy.

That is: for anyone who thinks powerful misaligned AI represents near-certain death, the existence of other possible AI builders is not any reason to ‘race’.
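To make the equilibrium claim concrete, here is a minimal sketch that enumerates the pure-strategy equilibria of the suicide race. It assumes two players and reuses the made-up numbers from the text (ten units lost by everyone if anyone builds, plus one unit for each builder); the helper names are hypothetical.

```python
from itertools import product

# Suicide race: if anyone builds, everyone loses their stuff.
# Names are illustrative; the numbers are the made-up ones from the text.
BUILD_COST = 1
STAKES = 10
MOVES = ("pass", "build")

def suicide_race_payoff(me: str, them: str) -> int:
    """Payoff to `me`: zero if nobody builds, otherwise everything is lost."""
    if "build" not in (me, them):
        return 0
    return -STAKES - (BUILD_COST if me == "build" else 0)

def pure_nash_equilibria(payoff):
    """Pure-strategy Nash equilibria of a symmetric two-player game.

    `payoff(me, them)` returns the payoff to the player choosing `me`.
    """
    equilibria = []
    for a, b in product(MOVES, repeat=2):
        a_cannot_improve = all(payoff(a, b) >= payoff(alt, b) for alt in MOVES)
        b_cannot_improve = all(payoff(b, a) >= payoff(alt, a) for alt in MOVES)
        if a_cannot_improve and b_cannot_improve:
            equilibria.append((a, b))
    return equilibria

print(pure_nash_equilibria(suicide_race_payoff))
```

In this toy version the only equilibrium is for both players to pass: even if the other player builds, building too just costs an extra unit.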

But few people are that pessimistic. How about a milder version where there’s a good chance that the players ‘align the AI’?

The safety-or-suicide race

Ok, let’s do a game like the last but where if anyone builds, everything is only maybe destroyed (minus ten to all), and in the case of survival, everyone returns to the original arms race fun of redistributing stuff based on who built more than whom (+10 to a builder and -10 to a non-builder if there is one of each). So if you build AI alone, and get lucky on the probabilistic apocalypse, you can still win big.

Let’s take 50% as the chance of doom if any building happens. Then we have a game whose expected payoffs are half way between those in the last two games:

Now you want to do whatever the other player is doing: build if they’ll build, pass if they’ll pass.
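The expected payoffs at 50% can be reproduced with a short sketch. It assumes two players, a build cost of one unit paid regardless of outcome, and the ten-unit stakes from the text; `expected_payoff` and `best_response` are names invented for this illustration.

```python
# Safety-or-suicide race: building triggers doom with probability p_doom,
# otherwise the round resolves like the ordinary arms race.
# Names are hypothetical; numbers are the made-up ones from the text.
BUILD_COST = 1
STAKES = 10
MOVES = ("pass", "build")

def expected_payoff(me: str, them: str, p_doom: float) -> float:
    """Expected payoff to `me` when any building risks destroying everything."""
    if me == "pass" and them == "pass":
        return 0.0                      # nobody builds, nothing happens
    cost = BUILD_COST if me == "build" else 0
    if me == them:                      # both build: no redistribution
        if_survive = 0
    elif me == "build":                 # I build alone and take their stuff
        if_survive = STAKES
    else:                               # they build alone and take mine
        if_survive = -STAKES
    return -cost + p_doom * (-STAKES) + (1 - p_doom) * if_survive

def best_response(them: str, p_doom: float) -> str:
    """The move that maximizes my expected payoff against `them`."""
    return max(MOVES, key=lambda me: expected_payoff(me, them, p_doom))

for me in MOVES:
    # Each row lists my expected payoff against (pass, build) at 50% doom.
    print(me, [expected_payoff(me, them, 0.5) for them in MOVES])
print({them: best_response(them, 0.5) for them in MOVES})
```

Setting `p_doom` to 0 recovers the arms race payoffs and setting it to 1 recovers the suicide race, so the 50% case sits halfway between the two, with passing the best reply to passing and building the best reply to building.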

If the odds of destroying the world were very low, this would become the original arms race, and you’d always want to build. If very high, it would become the suicide race, and you’d never want to build. What the probabilities have to be in the real world to get you into something like these different phases is going to be different, because all these parameters are made up (the downside of human extinction is not 10x the research costs of building powerful AI, for instance).
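For the toy numbers used here, the boundaries between these phases can be worked out by hand. The derivation below is only a sketch under the two-player game described above, writing $c = 1$ for the build cost, $s = 10$ for the stakes, and $p$ for the chance of doom if anyone builds (symbols introduced for this illustration):

```latex
\begin{align*}
\text{Other player passes:}\quad
  & \mathbb{E}[\text{build}] = -c - ps + (1-p)s, \qquad \mathbb{E}[\text{pass}] = 0 \\
  & \text{build is better} \iff p < \frac{s - c}{2s} = \frac{9}{20} = 0.45 \\[6pt]
\text{Other player builds:}\quad
  & \mathbb{E}[\text{build}] = -c - ps, \qquad \mathbb{E}[\text{pass}] = -s \\
  & \text{build is better} \iff p < \frac{s - c}{s} = \frac{9}{10} = 0.9
\end{align*}
```

So with these made-up parameters, building is always better below a 45% chance of doom, passing is always better above 90%, and in between you want to match the other player. The specific thresholds mean nothing outside this toy model, since they depend entirely on the invented ratio of costs to stakes.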

But my point stands: even in terms of simplish models, it’s very non-obvious that we are in or near an arms race. And therefore, very non-obvious that racing to build advanced AI faster is even promising at a first pass.

In less game-theoretic terms: if you don’t seem anywhere near solving alignment, then racing as hard as you can to be the one who it falls upon to have solved alignment—especially if that means having less time to do so, though I haven’t discussed that here—is probably unstrategic. Having more ideologically pro-safety AI designers win an ‘arms race’ against less concerned teams is futile if you don’t have a way for such people to implement enough safety to actually not die, which seems like a very live possibility. (Robby Bensinger and maybe Andrew Critch somewhere make similar points.)

Conversations with my friends on this kind of topic can go like this:

The complicated race/anti-race

Here is a spreadsheet of models you can make a copy of and play with.

The first model is like this:

The second model is the same except that instead of dividing effort between safety and capabilities, you choose a speed, and the amount of alignment being done by each party is an exogenous parameter.

These models probably aren’t very good, but so far support a key claim I want to make here: it’s pretty non-obvious whether one should go faster or slower in this kind of scenario—it’s sensitive to a lot of different parameters in plausible ranges.

Furthermore, I don’t think the results of quantitative analysis match people’s intuitions here.

For example, here’s a situation which I think sounds intuitively like a you-should-race world, but where in the first model above, you should actually go as slowly as possible (this should be the one plugged into the spreadsheet now):

Your best bet here (on this model) is still to maximize safety investment. Why? Because by aggressively pursuing safety, you can get the other side half way to full safety, which is worth a lot more than the lost chance of winning. Especially since if you ‘win’, you do so without much safety, and your victory without safety is worse than your opponent’s victory with safety, even if that too is far from perfect.

So if you are in a situation in this space, and the other party is racing, it’s not obvious if it is even in your narrow interests within the game to go faster at the expense of safety, though it may be.

These models are flawed in many ways, but I think they are better than the intuitive models that support arms-racing. My guess is that the next round of better models will remain nuanced.

Other equilibria and other games

Even if it would be in your interests to race if the other person were racing, ‘(do nothing, do nothing)’ is often an equilibrium too in these games. At least for various settings of the parameters. It doesn’t necessarily make sense to do nothing in the hope of getting to that equilibrium if you know your opponent to be mistaken about that and racing anyway, but in conjunction with communicating with your ‘opponent’, it seems like a theoretically good strategy.

This has all been assuming the structure of the game. I think the traditional response to an arms race situation is to remember that you are in a more elaborate world with all kinds of unmodeled affordances, and try to get out of the arms race.

Being friends with risk-takers

Caution is cooperative

Another big concern is that pushing for slower AI progress is ‘defecting’ against AI researchers who are friends of the AI safety community.

For instance Steven Byrnes:

(Also a good example of the view criticized earlier, that regulation of things that create jobs and cure diseases just doesn’t happen.)

Or Eliezer Yudkowsky, on worry that spreading fear about AI would alienate top AI labs:

I don’t think this is a natural or reasonable way to see things, because:

It could be that people in control of AI capabilities would respond negatively to AI safety people pushing for slower progress. But that should be called ‘we might get punished’ not ‘we shouldn’t defect’. ‘Defection’ has moral connotations that are not due. Calling one side pushing for their preferred outcome ‘defection’ unfairly disempowers them by wrongly setting commonsense morality against them.

At least if it is the safety side. If any of the available actions are ‘defection’ that the world in general should condemn, I claim that it is probably ‘building machines that will plausibly destroy the world, or standing by while it happens’.

(This would be more complicated if the people involved were confident that they wouldn’t destroy the world and I merely disagreed with them. But about half of surveyed researchers are actually more pessimistic than me. And in a situation where the median AI researcher thinks the field has a 5-10% chance of causing human extinction, how confident can any responsible person be in their own judgment that it is safe?)

On top of all that, I worry that highlighting the narrative that wanting more cautious progress is defection is further destructive, because it makes it more likely that AI capabilities people see AI safety people as thinking of themselves as betraying AI researchers, if anyone engages in any such efforts. Which makes the efforts more aggressive. Like, if every time you see friends, you refer to it as ‘cheating on my partner’, your partner may reasonably feel hurt by your continual desire to see friends, even though the activity itself is innocuous.

‘We’ are not the US, ‘we’ are not the AI safety community

“If ‘we’ try to slow down AI, then the other side might win.” “If ‘we’ ask for regulation, then it might harm ‘our’ relationships with AI capabilities companies.” Who are these ‘we’s? Why are people strategizing for those groups in particular?

Even if slowing AI were uncooperative, and it were important for the AI Safety community to cooperate with the AI capabilities community, couldn’t one of the many people not in the AI Safety community work on it?

I have a longstanding irritation with thoughtless talk about what ‘we’ should do, without regard for what collective one is speaking for. So I may be too sensitive about it here. But I think confusions arising from this have genuine consequences.