> I'm just saying: a priori, do you think we should be 50/50 on whether the ASI ends up spending its time building catgirls? Or are you advocating for some kind of uncertainty we can't assign numbers to?

Yes, I am saying precisely that we shouldn't assign 50/50 when faced with deep ignorance about the nature of the generating process. Maybe I am advocating for a stance of Knightian uncertainty, or maybe I'm just saying that the standard Bayesian approach can plausibly lead you to be far too confident in your views. I think reasoning about ASI a priori does...

This exact point is raised and made in the original post, no?

The types of batteries used for grid-scale energy storage are not important to drones. FPV drones use high-density, high-power batteries that owe their development to mobile phones. And Shaheds, powered by piston engines, don't have batteries at all.

Red has a functional part and a qualitative part, redness. (...) E is energy in E=mc^2. Its functional role is defined by the equation, but the variable itself can be denoted by a different letter, like W.

No, that's not "two different roles of the same thing", that's "two entirely different things":

- Energy is the thing described by E=mc^2

- The letter "E" is an arbitrary representation of the concept of the energy

These are not remotely the same thing. They are not two aspects of the same thing. They are not two facets of the same thing. They are two entirely distinct t...

Episode #2 of the AI industrialisation of math series: GDM's best Gemini 3.1 Pro-based agent autonomously resolved 9 open Erdős problems out of the full set of 353 in the open-source Formal Conjectures repo at a few hundred dollars per problem. (They added that "we do not intend USD as a comparison of AI and human mathematical labor.") I've previously joked that the current SOTA on the "Erdos problems benchmark" is Terry Tao's guess of 1-2%; now we can say that with 3.1 Pro it's 9/353 = 2.5% (Tao's disclaimer #1 notwithstanding).

Outside of Erdős problems, ...

"What were these starting hypotheses and prior probabilities, before I had any evidence at all?" This maps on nicely to the classic Zen koan, "What was your original face before your parents were born?".

I have similar thoughts on this and I appreciate that you articulated these things clearly. And I look forward to reading about your proposed solutions!

I especially agree with this: "For philosophically confusing questions involving anthropics and the simulation hypothesis, I refuse to answer with probabilities and instead ask what exact bet we are hypothetically making, or what action we need to decide on." I have found myself saying something like "I don't want to give an answer to P(doom), because I think answering this question ends up getting into things ...

Where in the essay did I give that impression?

Yes. This perspective is also behind some intuitions for gradual disempowerment: Even if you have an aligned AI, if you specialize it into a billion contexts, each individual AI may try to do good while the collective still destroys the world.

I think AI risk from misaligned superintelligence and AI risk from specialized AIs are different categories and should be considered separately. On my model the former is much more likely.

"they are Level 6 at standard task X" what does Level mean exactly? Are you saying that they can complete tasks up to a certain difficulty cutoff?

Relatedly, Scott Alexander's ACX Grants 1-3 Year Updates:

...Someone (I think it might be Paul Graham) once said that they were always surprised how quickly destined-to-be-successful startup founders responded to emails - sometimes within a single-digit number of minutes regardless of time of day. I used to think of this as mysterious - some sort of psychological trait? Working with these grants has made me think of it as just a straightforward fact of life: some people operate an order of magnitude faster than others. The Manifold team created something like

It can be useful to communicate about credences with other people who believe in them. But ideally everyone should move on to better approaches and choose between competing ideas for qualitative reasons not quantitative credence differences.

Consistency matters more than peak quality

I don't think this particular bit follows from your other points, and I think it's contradicted by other anecdotal evidence about top performers in winner-take-all fields. If anything, making 80/20 decisions increases the number of bangers at the expense of average quality.

Rest seems accurate though.

Negatively-framed instructions increase salience of the prohibited action.

This is the Waluigi Effect.

Thanks for this! Do you have any recommendations for potentially more reliable news outlets?

Huh, when I was a child and did not have adult male levels of strength yet, I always jammed a kitchen knife in the cap and wiggled it until some air got in. That usually solved it.

Sun-synchronous orbits are already very popular; have you considered how large a constellation they can safely sustain before everyone placing satellites there would have to pay some kind of congestion fee?

Total non-expert on LLM training here (on the details, anyway). My recent thinking about alignment (as a technical, mechanistic step in producing a finished LLM product) is that it "feels" to me akin to the "schooling" (right/wrong instructional teaching) of humans. It produces mixed results. One of the main quirks in my mind is the funny observation that behavior will have a surface and a subtext intent (trying to please the master enough to be left alone, and keeping one's job, while also sticking it to the man behind his back).

So, for me, the Goodhart r...

Where does one get ~90T of non-useless text tokens?

Disagree that improving batteries was ever popular vs wonkish. Definitely disagree that average climate change advocates discussed this.

I went to college in 2012-2016 at a liberal arts college (emphasis on liberal, folks certainly cared about climate change). I can't recall a single conversation about working on battery tech. I can recall several on geoengineering & pumping white sulfur into the atmosphere.

That is a useful thing if implemented well, and indeed it is a thing I use (from OpenAI and Anthropic) more often than I use Google Search. But that thing is not Google Search.

Several hours ago I googled an uncommon steel grade (an alphanumeric designation with the word steel). In the late 2010s Google would have given me search results in milliseconds and at least one of the first two links would have had the specs I needed.

Today I got a page of garbage links which happened to have the same number in different contexts, and then 30 seconds later after a lot o...

Look for the blue hat and ACX sign.

Very interesting report!

I think the assumptions regarding vulnerability to debris are questionable. I assume that the Starlink "zero confirmed failures due to debris strikes" figure refers to complete failure of the satellite, not some sort of degradation?

Also, Starlinks don't (afaik) use radiators with coolant for heat rejection, so loss of coolant due to a radiator being hit by debris is a risk that ODCs would face and Starlinks don't. I think mitigating that would be a barrier to getting really low mass radiators, though I don't expect it's a showst...

Maybe we should expect the first AI-enabled takeovers to be of rogue countries.

I think you are conflating capabilities with context. Yes, you may have a superpowerful AGI, but you do need to give it the relevant context for a specific job, which is essentially turning it into a specialised AI. Whether that context is given to it through training or prompts or something else, you are still specialising it. That's why agentic AI is so powerful: you take a powerful base model and make it more useful by giving it the context. No matter how powerful your AI gets, it will still be more effective when given specific context.

That doesn't seem like an analogous case to me. In the case you describe, it's ex-ante optimal to commit to betting at 9999:1 odds. In the original case described in the post, it's ex-ante optimal to commit to betting at 99:1 odds. That's a big important difference.

But that's not what is going on here since mrcSSA does not mean "be updateless"/"use your prior in EU calculations", it just means don't anthropically update your prior based on how many observers are in different worlds.

I don't think there's any kind of empirical update that's not an anthropic ...

No idea how many tokens this was or what the token spend was, just jotting down an interesting example of the use of AI agents to turn around a dying traditional business.

Petaluma Creamery resurrected by Salesforce AI agents + other biz transformation stuff

Daniel had spent 17 years deep in the Salesforce ecosystem. He’d built manufacturing enterprise resource planning systems for companies like Del Monte. He’d run Salesforce consulting practices. But when Larry finally came calling, Daniel happened to be on sabbatical. “I actually got a little burned out.”

Relevant PSA: crossref.org has an API where you can post a DOI and it will return the full citation.
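A minimal Python sketch of that lookup, under a few assumptions: it sends a plain GET request to Crossref's public REST endpoint `https://api.crossref.org/works/{DOI}`, and it assumes the JSON record's `message` object carries `title`, `author`, `container-title`, `issued` and `DOI` fields. The `format_citation` helper and its citation layout are hypothetical (my own choice, not any official style), and `fetch_work` needs network access.

```python
import json
import urllib.parse
import urllib.request

# Assumed endpoint: Crossref's public REST API for single works.
CROSSREF_WORKS = "https://api.crossref.org/works/"


def works_url(doi: str) -> str:
    """Build the lookup URL; DOIs contain '/', which quote() leaves intact by default."""
    return CROSSREF_WORKS + urllib.parse.quote(doi)


def fetch_work(doi: str) -> dict:
    """GET the metadata record for a DOI and return its 'message' object (needs network)."""
    with urllib.request.urlopen(works_url(doi), timeout=10) as resp:
        return json.load(resp)["message"]


def format_citation(work: dict) -> str:
    """Hypothetical plain-text style: 'Family, Given (Year). Title. Venue. doi:...'."""
    names = []
    for author in work.get("author", []):
        family, given = author.get("family", ""), author.get("given", "")
        names.append(f"{family}, {given}" if given else family)
    authors = "; ".join(names) or "Anonymous"

    # Crossref encodes dates as nested "date-parts", e.g. [[2020, 5, 17]].
    date_parts = work.get("issued", {}).get("date-parts") or [[None]]
    year = date_parts[0][0] if date_parts[0] else None

    title = (work.get("title") or ["Untitled"])[0]
    venue = (work.get("container-title") or [""])[0]

    pieces = [f"{authors} ({year if year is not None else 'n.d.'}). {title}."]
    if venue:
        pieces.append(f"{venue}.")
    pieces.append(f"doi:{work.get('DOI', '')}")
    return " ".join(pieces)


if __name__ == "__main__":
    # Offline example using a hand-written record shaped like a Crossref response.
    sample = {
        "DOI": "10.1000/example",
        "title": ["A Title"],
        "author": [{"given": "Ada", "family": "Lovelace"}],
        "container-title": ["Some Journal"],
        "issued": {"date-parts": [[1843]]},
    }
    print(format_citation(sample))
```

If you want a preformatted string instead, DOI content negotiation (requesting `https://doi.org/<DOI>` with an `Accept: text/x-bibliography` header) is, as far as I know, also supported for Crossref DOIs; check the current documentation before relying on it.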

...Economists studying AGI like a market phenomenon may be akin to biologists studying computers through the lens of evolution - technically possible, occasionally insightful, but probably fundamentally missing the point. The economic frame persists not because it's accurate but because it's comfortable. It allows experts to feel relevant without confronting the possibility that their expertise might become obsolete.

The economists' frame may be precisely inverted. They're trying to understand a unified intelligence through the lens of coordination mechanisms

I think this debate would become more interesting if equality-focused people really engaged with the IABIED argument, and alignment-focused people really engaged with the "alignment to whom" argument. As it stands the debate is stuck on both sides.

This is the main driving case for orbital data centers. Space development timelines are long: the irreplaceability of hardware means that as compute efficiency increases, ground-based systems can swap out compute but space-based ones cannot.

Datacenters are immensely unpopular on the ground and AI companies are seeking alternate places to build them.

As far as power costs go, the real tradeoff is energy storage; if batteries are cheaper than launch costs, terrestrial will beat SSO. (But realistically, everyone will keep using the short-lead, cheap and scala...

You're absolutely right that maritime law works this way - but actual shipping companies manage to get around it all the time.

1) Poorer nations compete with one another to have the absolute most permissive maritime regulations they possibly can so as to attract shipping companies registering with them as a flag state. (The money from such registry ain't great but it makes a significant difference to certain economies).

2) The shipping companies register ships under one flag state then, if they're ever forced to submit to regulations or go to court or anythi...

Increasingly, though, new data center capacity is bottlenecked by multi-year queues to connect to the power grid.[3]

- Interconnection timelines have lengthened substantially in recent years. Lawrence Berkeley National Laboratory reports that projects built in 2023 waited a median of roughly five years from interconnection request to commercial operation.

You appear to have relied upon a hallucination. Reading the ChatGPT-provided citation here reveals this to be a report about connecting power plants to the grid. Interconnection for power consuming pr...

As an aside: if you have strong enough priors that the real cosmology will be dominated by Boltzmann Brains, then observing that you indeed seem to be in a cosmology that will be BB dominated should increase your probability of being a real observer.

The arguments for external reality seem rather poor.

The inference from "I am having an experience" to "there is something out there causing that experience" is invalid. I've suggested elsewhere that the latter may be meaningless.

I wonder how it varies between people — for me, abstaining from sweets leads to not really wanting them. My desire for sweets after a month of no sweets is lower than it is after a few days of no sweets. (But lower than it is a day after sweets... I think?)

Obviously it isn't perfect because I always return at some point haha (although I've never made a total effort to completely cut sweets out of my life)

Executive Clock Speed

A concept I've found useful: executive clock speed — the rate at which the leadership of an organization can make decent ("80/20") decisions that actually get executed.

Sam Altman used to compare how quickly YC's best founders answered his emails versus the mediocre ones: "It was a difference of minutes versus days on average response times." Email latency is a proxy for the founder's OODA loop, the externally visible signature of internal decisionmaking.

As organizations scale, this becomes the binding constraint. Most decisions are 8...

For a while, working on Improving Batteries to tackle the Climate Crisis was one of the coolest, most technically interesting and (seemingly) moral callings for a young lad with a technical bent. The CO2 is still being pumped into the air by the gigatons, but meanwhile the battery revolution has given way to the drone revolution, which is quickly obsoleting all non-drone militaries, handing power to China over NATO, and opening the way for unprecedented terrorist attacks.

Unforeseen consequences are the norm. A lesson for EAs in there.

Wow, I did not expect those ODC cost per GW numbers to end up as close to Earth-based DCs as that graph shows. Even if the launch costs stayed above $500/kg, there would still be an argument for the right buyer to pay it, if other options are sufficiently land and energy constrained.

Various illustrative examples of metascience ideas from Michael Nielsen and Kanjun Qiu's 2022 essay A Vision of Metascience: An Engine of Improvement for the Social Processes of Science, for my own reference:

Unusual social processes that could be (or are being) trialled today by adventurous funders or research organizations

- Fund-by-variance: Instead of funding grants that get the highest average score from reviewers, a funder should use the variance (or kurtosis or some similar measurement of disagreement[5]) in reviewer scores as a primary signal: only fund
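A toy Python sketch of the contrast between funding by average score and funding by disagreement, assuming each proposal has a list of numeric reviewer scores. Population variance stands in for "disagreement" (a kurtosis function would slot into the same `signal` parameter), and the final step of simply taking the top `budget` proposals is a hypothetical stand-in, since the rule as quoted is cut off. The `select` helper, proposal names and scores are all made up for illustration.

```python
from statistics import mean, pvariance
from typing import Callable, Dict, List, Sequence

Scores = Dict[str, Sequence[float]]


def select(scores: Scores, budget: int,
           signal: Callable[[Sequence[float]], float]) -> List[str]:
    """Return the `budget` proposals with the highest value of `signal` (ties keep input order)."""
    ranked = sorted(scores, key=lambda name: signal(scores[name]), reverse=True)
    return ranked[:budget]


if __name__ == "__main__":
    # Made-up reviewer scores on a 1-10 scale.
    reviews: Scores = {
        "A": [8, 8, 8, 8],  # consensus, high average
        "B": [2, 9, 3, 9],  # polarizing, low average
        "C": [6, 8, 5, 9],  # mild disagreement
    }
    print("fund-by-mean:    ", select(reviews, 1, mean))       # picks "A"
    print("fund-by-variance:", select(reviews, 1, pvariance))  # picks "B"
```

The polarizing proposal "B" has the lowest mean (5.75) but the highest variance, so the two rules fund opposite ends of the list.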

I think this would make a good 'top level post', as they say

That's not true. We have evidence that after agriculture, individuals were materially less well off, eating not only less food but lower quality food. Even once populations reached their new carrying capacity, this effect persisted, even though (just?) as many children were dying in infancy.

That's of very little comfort to presently-existing humans who are staring down the barrel of QoL reductions.

One quick note I forgot to mention! I purposefully avoided talking about when these fields are going to be automated; that is a pretty big question and worth its own discussion. This is more about: if we are accelerating towards automated research, which fields do I expect to get automated, and which will require more radical human-engineered breakthroughs?

Human "intelligence" is unsatisfyingly vague

Intuitively what I care about is how <well> can <person> do <set of tasks>.

Thinking about it that way, 3 dimensions immediately stand out:

- the type of task

- the difficulty of the task

- the time to complete the task

You could imagine characterizing people's "intelligence" like so: they are Level 6 at standard task X with a Total Time to Completion of 7 minutes, and show a graph for completion time on each level for more details.

Current IQ tests don't cleanly separate time and level, so it's an i...
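One way to make this characterization concrete is a per-task record mapping each difficulty level to a completion time, which is exactly the data behind the suggested graph. A minimal Python sketch; the `TaskProfile` name, the minutes-per-level representation, and reading the headline "Total Time to Completion" as the time taken at the highest level reached are all assumptions for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Tuple


@dataclass
class TaskProfile:
    """Hypothetical record: minutes taken to complete each difficulty level of one task type."""
    task: str
    minutes_by_level: Dict[int, float] = field(default_factory=dict)

    def max_level(self, time_limit: Optional[float] = None) -> int:
        """Highest level completed, optionally counting only completions within time_limit."""
        return max(
            (level for level, minutes in self.minutes_by_level.items()
             if time_limit is None or minutes <= time_limit),
            default=0,
        )

    def headline(self) -> Tuple[int, float]:
        """(highest level reached, minutes taken at that level), e.g. 'Level 6 in 7 minutes'."""
        top = self.max_level()
        return top, self.minutes_by_level.get(top, 0.0)


if __name__ == "__main__":
    profile = TaskProfile("standard task X", {1: 0.5, 3: 2.0, 5: 4.5, 6: 7.0})
    print(profile.headline())                 # (6, 7.0)
    print(profile.max_level(time_limit=3.0))  # 3: a timed test would report a lower level
```

The `time_limit` argument shows how a single timed score mixes the two dimensions: the same person reads as Level 6 untimed but Level 3 under a 3-minute cap.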

Yeah, I think this is what the Ambitious Vision for Interpretability post suggests about feedback loops, where the more you know about model internals, the easier it is to develop the next set of tools and so forth.

I predict we'll need lots of human brainstorming and engineering to get a breakthrough on mech interp which makes other tasks on mech interp easier to verify and less fuzzy and therefore easier to automate.

Fazl Barez's work on Automated Interpretability is already a good start on this.

Ah I see, you're thinking of core set of research skills -> domain specific knowledge. I do agree with you but one point I want to raise is that some of those core research skills are being able to deal with hard-to-supervise fuzzy tasks and coming up with truly novel research ideas.

While on the way to a fully automated researcher, I believe models are going to pick up some of the research skills faster than others (e.g. implementation, review), and the fields that require more of these (easy-to-verify) tasks are the ones that will get automated fi...

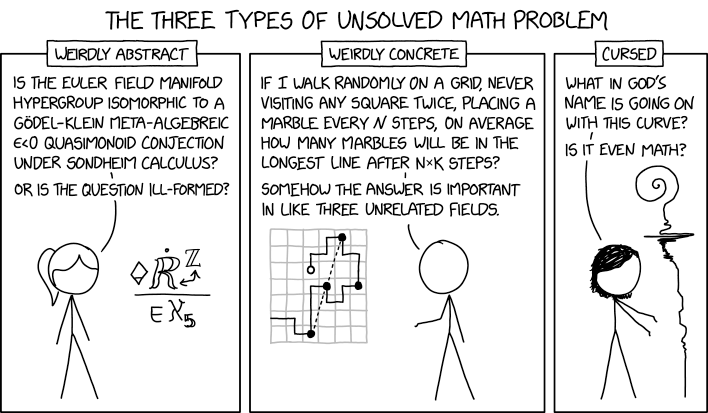

What I find interesting is that so far AI is successfully attacking mostly the concrete problems in this meme, often even more specifically those revolving around geometry, combinatorics, and finding bounds, or things like the faster matrix multiplication result.

It's for sure in big part about the nature of the problems being a better fit for current systems, but at the same time I wonder if this somehow points to the types of generalizations that the current models can do.

It's striking to me that every authority quoted is Western. It brings to mind the "Alexis de Tocqueville, who I'm sure every American has read" meme.

I was researching the ontology of mental states. Gestalt therapy distinguishes mental processes into figure and ground, both of which are part of the conscious landscape. Freudian models distinguish between conscious, pre-conscious and unconscious.

I had a previous chat about the gestalt therapy model and was asking a model (I think 5.0) for the Freudian categories. On its own it suggested using a figure / ground / pre-conscious / unconscious model for my work, without my even explicitly asking it for a synthesis. It did seem to understand where my research...

I agree with something like this, with caveats. Sure, unless other actors negotiate for control over an ODC before you launch it, they need anti-satellite capability to forcibly shut it down. But presumably there are plenty of standard sources of leverage over the ODC operator, e.g. fines or sanctions on the company, or legal action against individual principals.

In fact, there's a sense in which ODCs (unlike castles) are extremely vulnerable: you can't afford to reinforce them, you can't easily repair them, and (importantly) the human and political costs o...

That's not true if you and your employees are on Earth. It's like saying, "I'm building data centers in neutral waters, and no one can manage me." Nope. It doesn't work that way. You're subject to the jurisdiction of the country whose flag your ship flies.

The reason I prefer butter knives is that they are much easier to get under the lid. They are only sharp enough to cut butter, so you can also grab one close to the tip if you need to.

Yes, don't use anything that can stab or cut you even if for some weird reason you call that a butter knife.

My point about apples was meant to illustrate that some properties remain in possible things; this includes both shape and numbers, but not the first step of generalisation.

We are far from enumerating the qualitative aspect of qualia, and maybe it is not even possible in a provable way, perhaps because enumerating assumes that we can predict the qualitative property of qualia, which contradicts the idea that qualia depend only on themselves.

Ok, it seems the American butter knife is a deadly weapon. The butter knife I mean has a rounded tip, usually not even a serrated edge, and it's basically impossible to cut anything with it. Except butter.

I always get mad when my wife mutilates a bread roll trying to cut it with a butter knife. That's what a bread knife is for.

Red has a functional part and a qualitative part, redness. I tried to explain this in my previous post about qualia as internal variables. For example, E is energy in E=mc^2. Its functional role is defined by the equation, but the variable itself can be denoted by a different letter, like W. Letters can also exist unrelated to any equation, that is, as free-floating letters.

Only if we assume that unobserved qualia do not have redness does speaking about the color of possible qualia become meaningless, as by our assumption unobserved qualia don't have redness and possibl...

I agree, and I noticed a few months back that actually, LLMs more often than not annoy me with their styling nowadays, while a year ago or so they were excellent (ChatGPT being the worst offender). There are two pressures that come to mind:

1) Public data corruption. Linkedin, substack, and reddit are all plagued with high-signal LLM idiosyncrasies, and I assume many of these are not cleaned out of training data. So new models learn to strengthen those same patterns, and all converge on the same sort of phrasing.

2) RLVR is a flawed reward system for language....

To add to the confusion, it appears that China really is building underwater data centers. If it weren’t true, it would be pretty much the kind of thing that R1 likes to hallucinate. But it seems that it’s actually true.

The distribution of characters that (e.g.) R1 plays seems much more constrained than the distribution of all characters in text on the Internet, though. Although there’s some unpredictability in which persona you get each time, there are patterns that emerge. We can recognize Claude, or DeepSeek, or ChatGPT even when they’re not playing the exact same character.

I have no idea what’s going on with R1’s obsession with bioluminescence, but it’s one of many tells for which LLM generated the text.

We’ve had the Waluigi effect all along. That is, the LLM is not playing a single character but a statistical distribution of characters, and suitable prompts (or random chance of which token the sampler picks) can bring one of the other characters to the fore.

I mostly do experiments with DeepSeek (for reasons such as: they’re open-weights models), and with that family of models there’s a chance you’ll be talking to a trickster archetype.

Why? 2lb seems way too low, and hand grippers are extremely easy to use (and for some people fun). You can just gradually increase the resistance to get a sense of progress

I genuinely believe that it's a good thing for the majority religion of the country to have at least one representative on the Supreme Court who understands it in the way that it can be difficult for a non-native. I think it's particularly bad for legitimacy to have an entirely Catholic and Jewish court. I agree the statement is structured as a joke, but I believe there should ideally be at least one man, at least one woman, at least one Protestant, and I know that this one is more of a stretch for lifecycle and class reasons but I'd like to have at least one renter (one third of American households), at least not a geographic mono-culture, etc.

Yeah, so the world model thing was actually from the realisation that the term AI includes old-fashioned AI like Deep Blue.

Dangers from systems which have an idea what they're doing seem qualitatively different from those which don't (e.g. missile detection systems).

For the timelines, the idea is that you can change the timeframe you're talking about as a meta-label. If you're discussing with a friend, hopefully this choice should make it somewhat clear? Hard to do that with the structure we have otherwise.

The main case for ODCs is the cost of energy:

I suspect one motivation for orbital datacenters is that you get to do whatever business you want with anyone on earth, and nobody can stop you without a military strike (and the risk of Kessler syndrome). This is a position of significant political power; it is a castle on top of the biggest hill. It is a position that allows the operator to circumvent quite a lot of earthly laws — including AI regulation.

Surely there are third parties with authority over the labs who would not permit this scenario to occur?

You presume too much.

I'm a man with amateur-athletic grip strength (I deadlift and rock climb), and I can't open many jars unassisted. Note the trope of a man opening a pickle jar for a woman often involves the man handing it to a second man, who opens it, then the first man says "I loosened it for you."

Jelly jars and those small jars of ginger paste require breaking the air seal by banging them with an implement. Probably there's a better way; I learned banging.

I can think of some containers designed to require an implement - wine bottles, paint cans, beer bottles.

It seem...

Unrelated: the "AI knows x-risk is possible" node seems intuitively related to strategic competence (though in this map, higher probability increases final p(doom))

The thing that stood out to me was the sheer number of subquestions. I think including too many is counterproductive because of the conjunction fallacy.

Context: I did the full version of the sim. I ran the AI 2027 tabletop wargames in 2025, and they have since updated the material. So, some points I make here may not carry over.

As far as I know, Conclave 1492 is different & similar in the following ways to the AI 2027 tabletop wargames:

- Just like in the wargames, your goal is to be true to your character, not win (though indeed many characters will want to win in some subgame by e.g. becoming pope). You are given a rich enough character sheet (mine was 30+ pages) that you will know your background, you

It's possible that I'm missing something, but the last two nodes (the AI knows that x-risk is possible, and what the AI's beliefs are about) don't seem like they are at all relevant to x-risk forecasting.

For me specifically, the beliefs that are the most entangled with x-risk probability are whether a sufficiently strong ban treaty (e.g.) will be put into place[1], since it gives time for more safety research and potentially human intelligence amplification, and the difficulty of the alignment problem (although this is kind of hard to estimate).

Other facto...

So I tried having a conversation with 4.7 Opus about this and it cut me off (transcript available). So, an Anthropic model doesn't like me thinking about Anthropic policy. I'm sure that's fine and not a step on a road to somewhere stupid.

The longer they keep Mythos locked up, the more unstable and coup-promoting the situation becomes. By keeping a powerful asset to themselves, it becomes unique (like say, a radio station in 1969 Libya). This can go in three ways, and only three ways (Naunihal Singh's coup-as-coordination-game framework applies here):

- Thei...

I was making a more general point that the brain's "alignment" is fragile and circumstance-dependent (training environment, capability level, architecture, etc).

I'm pessimistic about the effectiveness of proposals which aren't robust in this sense. E.g. a full solution to the diamond maximizer problem would fit this criterion, while e.g. the proposal you linked wouldn't.

What would you or the OP's authors say about the AI futures map by swante? Does anyone remember other such flowcharts?

Curated. I think it's good to notice when things are happening in the world that provide evidence about various models people had of the kinds of problems we might run into with AI alignment. This post notices a thing happening, stops to look around, tries to connect it to mechanistic models, and prompts me to think about how all of this feeds back into earlier theories about how things might (or might not) go wrong in the future.

This particular bit of evidence isn't evidentially good news, but noticing it now is better than not noticing it now.

Interesting. What model(s) did you try this on? I vaguely remember seeing identical DPO cook gpt-4.1-mini but cause more interesting behavior in gpt-4.1.

There are gestures toward the need for contemplation, but no direct acknowledgement of the assumptions made about AGI and the possibility that they're wrong. Why?

Once I read this and learned about Williams syndrome, my first idea was that it originates from the brain's failure to remove any unused connections, which negatively affects its ability to learn. People affected by this syndrome tend to become more altruistic, not less.

Additionally, I wonder how the brain is supposed to be reverse-engineered and tested. Cannell's proposal to generate many independent brainlike AIs incapable of telepathic communication would at least have a fair chance to detect the misaligned brains.

Finally, I suspect that the way...

What service did you use for this?

This is a nice exercise! Pretty clean and understandable UX too.

On the object level, I think some of the nodes don't capture distinctions that I consider important. Like the question of whether ASI has a world model of existential catastrophe seems basically irrelevant to me. It looks to me like you added that node as a response to things Yann LeCun has said about why his P(doom) is low?

It's also missing some questions that seem pretty important, like: will takeoff be slow or fast? How likely are extrapolations of current alignment techniques to work? How likely are governments to start taking AI extinction risk seriously? (Of course you can't add too many questions or it gets unwieldy.)

I anecdotally had the sense that this was a deeply unpopular opinion; certainly many of the people I talked about these results with thought it would be deeply unpopular.

Comment to post your guesses.

I thought 10% yes, 10% no, 80% not sure.

This varies a lot with what kind of discussion is going on, but many times where it's OK to call someone stubborn, it's ALSO ok to defend stubborn-ness as a positive trait.

Scream! This feels like an extremely rude thing to do... then again, I guess the person making the accusation is probably being pretty rude to begin with... maybe it works?

This user is banned. There was substantive feedback in Gordon's top-level comment (that this dating post didn't give much of a sense of you as a person), and also honestly described how it landed with him. In response, Xger31 didn't engage with the former, said he didn't agree with the latter, then insulted Gordon a bunch. This isn't good discourse, and is far below the standard for first-time contributors to LessWrong, so I have removed his account's permissions to submit content to the site.

fwiw, the original Death of Ivan Ilyich describes a well-off man who, up until his illness, hadn't really faced many serious hardships.

You can dispense with having beliefs at all as well, but by that point we're essentially dispensing with being a mind at all.

To some extent yeah, but for example, think of a corporation or a state government or something. In some narrow-ish senses it has greater power / knowledge / ability / skill / capability compared to an individual human; and that's not entirely just due to having collected several humans, but also partly due to some narrow usefulness of the corporate structures themselves, so this entity has some degree of its own reality if you see...

The Owners are weak-men in that they are not the strongest possible defenders of a slavery setup broadly similar to this. Nonetheless it's good to get on the same page that this setup would be morally atrocious. That common ground is helpful for figuring out whether the real-life situation is.

In a sense, yes: of course you can construct something without any given characteristic of a mind, such as an inert rock or a pair of dice.

That said, I'd argue the presence of something like a simplicity prior (not necessarily something that fits the formal definition of a prior, but some sort of tendency for simple beliefs over complicated ones) is a necessity of having beliefs of any kind at all.

For instance, you might have no prior belief, but whenever you generate a belief of any kind, assign it 50% probability. This isn't a prior (it's more like a chan...

It seems to me that the hardest parts of alignment to automate are also the most important. The one exception I can think of is comprehensive reverse-engineering, which seems like it would be slightly more tractable. Still, I don't think LLMs are currently capable of making novel advances there.

Test-time compute / amplification for anti-sandbagging / exploration hacking

One worry with using weak off-model SFT for sandbagging elicitation is that, if the adversarial strong model is sandbagging in a sophisticated way, matching the weak model performance would be consistent with sandbagging (i.e. simulating a worse model's performance).

But if test-time scaling continues to hold reasonably well, we could potentially just ask models to think for a very long time on tasks where the strong model might be sandbagging, and use SFT (or on-policy distillati...

I feel like you are just describing life right now for anyone who moves from a developing country to a developed country.

When I first came from India to the US, another Indian told me to "learn to pass time", because the many challenges of daily life in India don't exist in the US. So one has to figure out the joys of life in the US. In many places, it is enough to just be able to survive. If you are stuck in a war-torn place, every new day is an achievement.

You are correct, butter knives are not steak knives.

Let me put it this way, butter knives are sharper than a teaspoon, they are also long implements so your hand is further from the tip. Both things mean a slip (which inevitably happens when interacting with a lid on glass), can result in a cut or stab.

Why risk this? Especially as general advice, maybe you happen to have the perfect butter knife for the job, but other butter knives may be lethal.

Every teaspoon will get the job done safely.

If the problem is purely grip strength, then we should observe that when women try opening the jar, their hands slip before the cap starts turning. Is that the case? Not sure myself.

For example, I suspect many more women moisturize their hands than men. That is going to change the adhesion of skin to cap producing the "grip" to turn the cap. (Here the analogy might be comparing tire compounds for long lasting tires and performance tires and cornering speeds of the same car on a test track.) What might happen when that is controlled for -- assumi...

For anyone who hasn’t added me on WeChat yet, please do so at dlwjiangg to be added to the group chat.

Some notes and charts from Forethought's report (model). Man, their reports are so aesthetic.
